Sapienta Machina: on an instrumented relationship between humans and robots


Talk given at Next 12 Berlin on May 8th 2012.

Sapienta Machina: on an instrumented relationship between humans and robots

- 3. LIREC is a 5 year EU funded project that explored the implications of living with interactive and robotic companions. www.lirec.eu

- 4. 2 things. •What we are doing with robots now. •What we are doing to ourselves.

- 7. A robot is a mechanical or virtual intelligent agent that can perform tasks automatically or with guidance.

- 8. Humans have a highly developed brain and are capable of abstract reasoning, language, introspection, problem solving, self-awareness, rationality, and sapience.

- 9. Robots & humans share a form of intelligence because it’s the easiest thing to replicate.

- 10. Robots now. •Understand people •Understand context •Grow old gracefully •Learn to forget •Adapt

- 15. “If the sum of positive emotions is bigger than the sum of the negative emotions over the last n time periods then the agent is in good mood or is in bad mood.”

- 16. Meet EMYS. LIREC EMYS / FLASH robot

- 17. Smile for the robot.

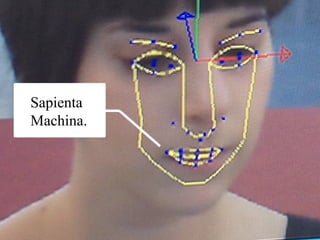

- 18. This is what the robot sees.

- 19. People now.

- 23. So what.

- 25. Timo Arnall: Robot Readable World

- 26. Internet Fridge Factor: Great idea & Terrible execution

- 27. Pasta & Vinegar blog

- 28. & Cebit QR codes in the wild

- 30. Robots are a tool for self-reflection.

- 31. Humans have a highly developed brain and are capable of abstract reasoning, language, introspection, problem solving, self-awareness, rationality, and sapience.

- 32. Sapience: wisdom, or the ability of an organism or entity to act with appropriate judgment.

- 34. We have to decide who we are designing for, us or them. Not that it matters.
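The mood rule quoted on slide 15 can be sketched in a few lines of Python. This is only an illustration and not the LIREC implementation: the `MoodTracker` class, its method names and the integer emotion scores are all hypothetical, and the rule is read here as "good mood when the positive sum is strictly bigger over the last n periods, bad mood otherwise".

```python
from collections import deque


class MoodTracker:
    """Hypothetical sketch of the slide 15 rule: compare summed positive and
    negative emotion scores over the last n time periods."""

    def __init__(self, n):
        if n <= 0:
            raise ValueError("n must be a positive number of time periods")
        # deque(maxlen=n) silently drops the oldest period once n are stored.
        self.positive = deque(maxlen=n)
        self.negative = deque(maxlen=n)

    def record(self, positive, negative):
        """Store the emotion scores observed during one time period."""
        self.positive.append(positive)
        self.negative.append(negative)

    def mood(self):
        """Return "good" if the positive sum is strictly bigger, else "bad"."""
        return "good" if sum(self.positive) > sum(self.negative) else "bad"


tracker = MoodTracker(n=3)
tracker.record(positive=3, negative=1)
tracker.record(positive=1, negative=2)
tracker.record(positive=1, negative=3)
print(tracker.mood())  # positive 5 vs negative 6

tracker.record(positive=4, negative=0)  # the oldest period falls out of the window
print(tracker.mood())  # positive 6 vs negative 5
```

Because only the last n periods count, a single strongly positive period can flip the mood once older negative periods drop out of the window. A tie is treated as a bad mood here, which is an assumption the quoted rule does not settle.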

Editor's notes

- Evolution of an instrumented relationship.

- Hi. I’m Alex, and I’m a designer. Right now I wear 3 hats. The first is running an internet of things design consultancy called Designswarm, the second is as founder of a startup called the Good Night Lamp, and the third is as part of a design partnership, RIG.

- One of the areas I was involved in for the past 2 years is robotics through a project called LIREC. This 5 year project finishing this

- (http://vimeo.com/10799224) Paul Ekman’s work on the Facial Action Coding System (http://en.wikipedia.org/wiki/Facial_Action_Coding_System), now an industry standard, is useful for designing how our emotions are instantiated as physical facial reactions. It is a common standard for systematically categorizing the physical expression of emotions, and it has proven useful to psychologists and to animators. Ekman showed that facial expressions of emotion are not culturally determined, but universal across human cultures and thus biological in origin. Expressions he found to be universal included those indicating anger, disgust, fear, joy, sadness, and surprise.

- This is how phonemes (the smallest segmental units of sound used to form meaningful contrasts between utterances) are divided up by animators. It’s hard to think about speech without thinking of the whole face, though, and the emotions that are communicated at the same time. Only text-to-speech bots really manage to sever the two, which is also why they feel so robotic.

- Little Mozart, a product of the industrial partnership at LIREC, is what is called an Affective Tutoring System. Sometimes emotions play a positive role in the reasoning process and other times they don't, but one thing is sure: emotions influence the reasoning process and also influence learning capabilities. Emotions like anxiety, depression or hunger may reduce or even block learning so, as teachers already know, emotional upsets interfere with learning capabilities. Some teachers adapt their behaviour, within their possibilities, to improve students' learning capabilities. Little Mozart tries to do the same. The robotic scenario had a more immersive user experience, improved feedback and a more believable social interaction. Little Mozart helps children compose music and improve their knowledge of melodic composition and the basics of musical language. It's intended for children between 4 and 10 years old.

- However, for many laymen, if a machine appears to be able to control its arms or limbs, and especially if it appears anthropomorphic or zoomorphic (e.g. ASIMO or Aibo), it would be called a robot. http://en.wikipedia.org/wiki/List_of_fictional_robots_and_androids#1970s If you’re of a particular disposition you will see robots everywhere in nature and objects around you. These are pictures taken without permission from the photo sharing site Flickr’s “Hello Little Fella” group where people have been aggregating photos of everyday objects that look like faces for years now.

- http://www.ubergizmo.com/2007/04/human-player-toy-psychoanalyzes-you/

- http://vimeo.com/36239715

- http://nearfuturelaboratory.com/pasta-and-vinegar/2006/09/08/visual-marker-recognition/

- http://wireless.3yen.com/2005-04-22/qr-codes-are-so-2004-get-ready-for-colorcode/

- Robots are a useful way for humans to understand themselves, others and what place technology has in building relationships.