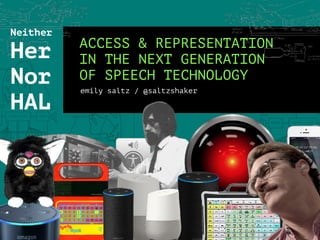

Neither Her Nor Hal: Considering Access and Representation in the Next Generation of Speech Technology

- 1. Neither Her Nor HAL: ACCESS & REPRESENTATION IN THE NEXT GENERATION OF SPEECH TECHNOLOGY. emily saltz / @saltzshaker

- 2. a little about me!

- 3. Session Goals
     1. Examine POPULAR MEDIA NARRATIVES around smart home devices and feelings of CREEPINESS about synthesized speech.
     2. Learn about ACCESSIBILITY USE CASES for synthesized voice output.
     3. Consider how IDENTITY is encoded in SOUND PATTERNS of speech. Think about how to parameterize those patterns.
     4. Learn what’s possible with CURRENT TECH like the Web Speech API & p5.speech. Explore future use cases for SONIC EXPERIMENTATION - aka computers aren’t limited to the constraints of the human vocal tract!

- 4. 1. What popular narratives exist about speech tech? (e.g. Alexa/Siri/Google Assistant as creepy, sexist AI)

- 7. Not OK!!! BUT. Let’s wait before we throw out every voice interface baby with Alexa’s creepy bathwater…

- 8. 2. What are other use cases for synthesized speech output? How is it used by people with disabilities?

- 9. How a new technology is changing the lives of people who cannot speak –Jordan Kisner in The Guardian, 2019

- 10. Sara Young, recipient of a “bespoke” digital voice from VocaliD. Image from https://twitter.com/LindsayMoran/status/679302950712422400. How a new technology is changing the lives of people who cannot speak –Jordan Kisner in The Guardian, 2019

- 11. Voice Banking

- 13. –Roger Ebert, Remaking my voice (TED2011) “You all know the test for artificial intelligence – the Turing test. A human judge has a conversation with a human and a computer. If the judge can’t tell the machine apart from the human, the machine has passed the test. I now propose a test for computer voices – the Ebert test. If a computer voice can successfully tell a joke and do the timing and delivery as well as Henny Youngman, then that’s the voice I want.”

- 14. Speech output as a tool for non-visual access. Screen reader demo with Sina Bahram! https://www.youtube.com/watch?v=92pM6hJG6Wo

- 16. “While synthetic speech may be difficult to comprehend at first, its tolerance and comprehension increases with more exposure and experience. …while most people would prefer a natural voice, they find synthetic voices acceptable for a number of applications… When asked if they would like to choose their own synthetic voice [95%] said “yes”… [and some] suggested one of the voices they use most often.” – Text-to-speech audio description: towards wider availability of AD Agnieszka Szarkowska, University of Warsaw (2011)

- 17. 3. What information is encoded in speech? What kinds of sound patterns encode that information?

- 18. Listening Time! Jot down anything you notice about the speech sounds of these voices. Pay attention to how they interact and change.
      - Hey, youngblood. Lemme give you a tip. Use your White voice.
      - Man, I ain’t got no White voice.
      - Oh come on. You know what I mean. You have a White voice in there. You can use it! It’s like when being pulled over by the police.
      - Oh no, I just use my regular voice when that happens. I just say:
      - “Back the f*ck up off the car and don’t nobody get hurt!”
      - Aight. I’m just tryna give you some game. You wanna make money here? Then read your script with a White voice.

      From “Sorry to Bother You” (2018)

- 19. Let’s hear that clip again This time read by the closest available synthesized speech voices. What do you notice now? How are the clips different?

- 20. Computers are Social Actors (CASA Theory). Synthesized speech “mindlessly” triggers social scripts like…
      - Gender stereotyping: e.g. Female-voiced computers rated more informative about love, Male-voiced computers rated more proficient in technical subjects
      - Reciprocity: When a computer voice “helps” you, you’ll do more work for it
      - Personality personification: Take language cues to interpret personality. Users like computers that match their personality (similarity attraction)

      https://en.wikipedia.org/wiki/Computers_are_social_actors

      Clifford Nass, Jonathan Steuer, and Ellen R. Tauber. Computers are social actors. (1994, Stanford)

- 21. Weinberger, Steven. (2015). Speech Accent Archive. George Mason University. http://accent.gmu.edu http://accent.gmu.edu/searchsaa.php?function=detail&speakerid=107

- 22. Weinberger, Steven. (2015). Speech Accent Archive. George Mason University. http://accent.gmu.edu http://accent.gmu.edu/searchsaa.php?function=detail&speakerid=571

- 23. The Web Speech voices available today. Umm…only one way to speak English as a native US speaker? Even when systems have more, all/almost all are in some flavor of “Standard American” accent. (Google Chrome Web Speech API demo)
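
One way to check this on your own machine is to list the installed voices by language tag. The following is a minimal sketch, assuming a browser that exposes `speechSynthesis.getVoices()`; `groupVoicesByLang` is a hypothetical helper, and the fallback voice list is made-up sample data used only when no speech engine is present (for example when run in Node).

```javascript
// Group voice names by their BCP 47 language tag (e.g. "en-US").
// Works on any array of objects with `name` and `lang` properties,
// which is the shape of the SpeechSynthesisVoice objects browsers return.
function groupVoicesByLang(voices) {
  const groups = new Map();
  for (const voice of voices) {
    if (!groups.has(voice.lang)) groups.set(voice.lang, []);
    groups.get(voice.lang).push(voice.name);
  }
  return groups;
}

// Hypothetical sample data, NOT real voice names.
const hypotheticalVoices = [
  { name: "Voice A", lang: "en-US" },
  { name: "Voice B", lang: "en-GB" },
  { name: "Voice C", lang: "en-US" },
];

// In Chrome the list may be empty until the "voiceschanged" event has fired.
const voices =
  typeof speechSynthesis !== "undefined"
    ? speechSynthesis.getVoices()
    : hypotheticalVoices;

for (const [lang, names] of groupVoicesByLang(voices)) {
  console.log(`${lang}: ${names.join(", ")}`);
}
```

Counting the entries under `en-US` gives a rough sense of how many ways of "speaking American English" a given system offers. The language tag says nothing about accent within that language, so listening is still required.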

- 24. What is the “Standard American” (aka “General American English”) Accent?
      - White. Very white.
      - From the Northeastern United States in the very early twentieth century
      - Associated with wealthy, highly educated White Anglo-Saxon Protestant suburban communities
      - Characterized by the absence of "marked" pronunciation features of regional origin, ethnicity, or low socioeconomic status.

      https://en.wikipedia.org/wiki/General_American

      Stock image of a newscaster

- 25. What are the effects of a limited speech palette?
      - What do these voices imply about “correct” and “incorrect” ways of speaking?
      - How does the use of a Standard American English voice frame your relationship to it, and the tone of your response?
      - Is the voice a peer, superior, or subservient?
      - Think about how you speak differently to family vs friends vs strangers. Is a “conversation” with a computer incapable of adapting its speech style even a conversation?

- 27. “Segmental” properties of speech. Fancy word for patterns of pronunciation of vowels & consonants. Examples:
      - The pronunciation of the most atomic units of sound in a language
      - e.g. “cot” vs “caught,” “pen” vs “pin”
      - “Butter” vs “Tammy”

- 28. “Suprasegmental” parameters (aka prosodic). Fancy word for patterns that extend over syllables, words, and phrases. Part of the grammar of a language. Examples:
      - Syllable structure
      - Intonation (melodic pattern) mapped to different syntaxes, e.g. questions
      - Stress (increase of volume and duration within a word)
      - Tone (variation in pitch)

- 29. “Paralinguistic” properties of speech. Fancy word for miscellaneous speech stuff that’s harder to codify and varies by speaker. Examples:
      - Position of sound in a space
      - “Organic” vocal qualities - i.e. physiological, size/proportion of speech organs
      - Expressive vocal qualities - emotional tone expressed through variations in loudness, rate, pitch, pitch contour
      - Filler words like “um,” “uh,” “like”
      - “Backchanneling” - e.g. “mhm” in response to another speaker
      - Respirations: Breath, sighing, throat-clearing

      https://en.wikipedia.org/wiki/Paralanguage#Aspects_of_the_speech_signal

- 30. Speech Synthesis Markup Language (SSML)
      - Markup for prosodic features like pitch, contour, pitch range, rate, duration, volume
      - Often embedded within VoiceXML, the format used by telephony systems
      - Can add custom pronunciations
      - Used by vendors like Amazon (for Alexa) and Microsoft (for Cortana)
      - Supposedly compatible with the Web Speech API (though not explored much, yet!)
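
To make the markup concrete, here is a minimal sketch of generating SSML with a `<prosody>` element, whose `pitch`, `rate`, and `volume` attributes are part of the W3C SSML spec. `escapeXml` and `buildProsodySsml` are hypothetical helper names, not part of any vendor SDK. The bare `<speak>` root follows the style of Alexa's documentation; a full standalone W3C document would also carry `version` and `xmlns` attributes on the root.

```javascript
// Escape characters that are special in XML text and attribute values.
function escapeXml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Wrap text in <speak><prosody ...> markup.
// Only the prosody attributes that are actually provided get emitted.
function buildProsodySsml(text, { pitch, rate, volume } = {}) {
  const attrs = Object.entries({ pitch, rate, volume })
    .filter(([, value]) => value !== undefined)
    .map(([name, value]) => ` ${name}="${escapeXml(String(value))}"`)
    .join("");
  return `<speak><prosody${attrs}>${escapeXml(text)}</prosody></speak>`;
}

// Prints: <speak><prosody pitch="low" rate="slow">Use your regular voice.</prosody></speak>
console.log(
  buildProsodySsml("Use your regular voice.", { pitch: "low", rate: "slow" })
);
```

Which attribute values a given engine accepts (named levels like `low` and `slow`, percentages, or semitone offsets) varies by vendor, so treat the values here as placeholders to try out.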

- 31. Web Speech API
      - https://github.com/mdn/web-speech-api/
      - Speech recognition and speech synthesis
      - Basic parameters for changing rate, pitch, and voice
      - Browser support on Chrome
      - Available in Processing (p5.js) through p5.speech!
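
The rate, pitch, and voice parameters can be sketched as follows. This is a minimal example, assuming a browser with `speechSynthesis` and `SpeechSynthesisUtterance`; `clamp`, `normalizeVoiceSettings`, and `speak` are hypothetical helper names. The clamping ranges are the ones the Web Speech API defines for an utterance.

```javascript
// Clamp a number into the closed range [min, max].
function clamp(value, min, max) {
  return Math.min(max, Math.max(min, value));
}

// Keep settings inside the ranges the Web Speech API defines:
// rate 0.1 to 10, pitch 0 to 2, volume 0 to 1 (each defaults to 1).
function normalizeVoiceSettings({ rate = 1, pitch = 1, volume = 1 } = {}) {
  return {
    rate: clamp(rate, 0.1, 10),
    pitch: clamp(pitch, 0, 2),
    volume: clamp(volume, 0, 1),
  };
}

// Speak `text` with the given settings and, optionally, a voice chosen by name.
// Returns false when speech synthesis is unavailable (e.g. outside a browser).
function speak(text, settings = {}, voiceName) {
  if (
    typeof speechSynthesis === "undefined" ||
    typeof SpeechSynthesisUtterance === "undefined"
  ) {
    return false;
  }
  const utterance = new SpeechSynthesisUtterance(text);
  Object.assign(utterance, normalizeVoiceSettings(settings));
  // getVoices() may be empty until the "voiceschanged" event has fired;
  // if no voice matches, the browser's default voice is used.
  const voice = speechSynthesis.getVoices().find((v) => v.name === voiceName);
  if (voice) utterance.voice = voice;
  speechSynthesis.speak(utterance);
  return true;
}

// Prints { rate: 0.5, pitch: 2, volume: 1 } (pitch 3 is clamped to 2).
console.log(normalizeVoiceSettings({ rate: 0.5, pitch: 3 }));
speak("Neither Her nor HAL.", { rate: 0.5, pitch: 3 });
```

Note how little there is to parameterize: three numbers and a voice choice. Everything the slides call segmental or paralinguistic is baked into whichever voice the system ships.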

- 32. p5.speech, created for p5.js. Written by R. Luke DuBois, The ABILITY lab, New York University. p5.speech is a JavaScript library that provides simple, clear access to the Web Speech and Speech Recognition APIs, allowing for the easy creation of sketches that can talk and listen.
      - Coding Rainbow demo: https://www.youtube.com/watch?v=v0CHV33wDsI&t=34
      - p5.js-speech repo: http://ability.nyu.edu/p5.js-speech/

- 33. 4. When speech is software, does it even need to sound human? What are other interesting sonic possibilities?

- 35. The Art of Noises by Luigi Russolo (1913). Six Families of Noises for the Futurist Orchestra:
      1. Roars, Thunderings, Explosions, Hissing roars, Bangs, Booms
      2. Whistling, Hissing, Puffing
      3. Whispers, Murmurs, Mumbling, Muttering, Gurgling
      4. Screeching, Creaking, Rustling, Buzzing, Crackling, Scraping
      5. Noises obtained by beating on metals, woods, skins, stones, pottery, etc.
      6. Voices of animals and people, Shouts, Screams, Shrieks, Wails, Hoots, Howls, Death rattles, Sobs

      Instruments for futuristic music, called "Bruitism", partly electrically operated, built by Russolo, 1913

- 36. – The Art of Noises by Luigi Russolo (1913) “[With machines] the variety of noises is infinite…not merely in a simply imitative way, but to combine them according to our imagination.”

- 37. Some more ideas for WeIrD SPeEcH SyNtHeSiS!™*
      - A bunch of speech synthesizers walk into a bar / speech synthesizer cocktail party
      - Drunk speech synthesizer
      - Speech synthesizers loudly chewing, clearing their “throats” in an elevator
      - Baby talk speech synthesizer
      - Speech synthesizer with a speech impediment
      - Code-switching speech synthesizer that changes pronunciations throughout speech
      - SloMo Neil deGrasse Tyson speech synthesizer
      - Shy, socially anxious speech synthesizer
      - Speech synthesizer which makes psycholinguistically realistic speech errors (e.g. spoonerisms like “queer old dean” / “dear old queen”)
      - Things that are physiologically impossible to articulate for a human!

      \* Not actually a trademark. (YET.)
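
The spoonerism idea can start as a plain text transform applied before the text reaches a speech engine. The following is a minimal sketch, assuming a spelling-based swap of word-initial consonant letters rather than a true phonemic swap; `splitOnset` and `spoonerize` are hypothetical helper names.

```javascript
// Split a word into its leading consonant letters (the "onset") and the rest.
// "qu" is treated as one consonant unit so that "queen" splits as "qu" + "een".
function splitOnset(word) {
  const match = word.match(/^(qu|[^aeiou]*)(.*)$/i);
  return [match[1], match[2]];
}

// Swap the onsets of the first and last words of a phrase.
function spoonerize(phrase) {
  const words = phrase.split(" ");
  if (words.length < 2) return phrase;
  const last = words.length - 1;
  const [onsetA, restA] = splitOnset(words[0]);
  const [onsetB, restB] = splitOnset(words[last]);
  words[0] = onsetB + restA;
  words[last] = onsetA + restB;
  return words.join(" ");
}

console.log(spoonerize("crushing blow")); // "blushing crow"
console.log(spoonerize("dear old queen")); // "quear old deen"
```

The second output shows the limit of working on spelling: the swap is right for the sounds but not for the written words "queer" and "dean". A psycholinguistically realistic version would operate on phonemes, for example via a pronunciation dictionary, which this sketch does not attempt.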

- 38. NOW Let’s design some voices!
      - SUS examples: http://research.nii.ac.jp/src/en/NITECH-EN.html
      - SUS generator: https://github.com/ecooper7/SUSgen/blob/master/susgen.py

- 39. Thank you! Thanks to the Processing Community! Contact: Emily Saltz, @saltzshaker / essaltz@gmail.com

- 40. Appendix

- 41. Technical Resources: Programming voices
      - Web Speech API: https://developer.mozilla.org/en-US/docs/Web/API/Web_Speech_API
      - p5.speech: http://ability.nyu.edu/p5.js-speech/
      - Coding Rainbow demo: https://www.youtube.com/watch?v=v0CHV33wDsI&t=34s
      - Accessibility with Claire Kearney-Volpe and Chancey Fleet: https://www.youtube.com/watch?v=8w_S17dZ2Do&t=684s
      - Speech Accent Archive: http://accent.gmu.edu/howto.php#
      - Accessibility: A Guide to Building Future User Interfaces, Jeff Bigham @ CMU (includes tutorials on building on a screen reader): http://accessibilitycourse.com/
      - Semantically Unpredictable Sentences Generator: https://github.com/ecooper7/SUSgen/blob/master/susgen.py
      - Speech Synthesis Markup
        - for Alexa: https://developer.amazon.com/docs/custom-skills/speech-synthesis-markup-language-ssml-reference.html
        - for Google: https://developers.google.com/actions/reference/ssml

- 44. Natural (humanoid) vs. Intelligible* [Chart with two axes: one runs from “not at all humanoid” to “indistinguishable from a human”, the other from “not at all intelligible” to “fully intelligible”.] *DON’T FORGET TO ASK YOURSELF: “INTELLIGIBLE” TO WHO?

- 45. – Mariana Lin, Absurdist Dialogues with Siri (Paris Review) “My fear in AI design is not the singularity of global domination (media, stand down … I think we’re far from that); it’s the singularity of conversational domination. I don’t want AI to reduce speech to function, to drive turn-by-turn dialogue doggedly toward a specific destination in the geography of our minds.”