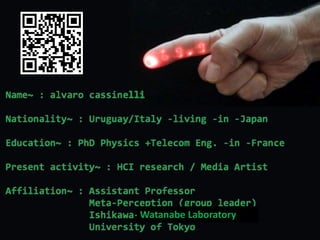

Alvaro Cassinelli / Meta Perception Group leader

- 2. Ishikawa-Watanabe Lab: Meta Perception Group. By Alvaro Cassinelli, Assistant Professor (Meta Perception Group Leader)

- 4. Latest research areas: I. The physical cloud (tangible gigabytes) • Space and objects as scaffold for data • Proprioceptive interfaces / Deformable interfaces • Multi-Modal Augmented Reality. II. Enabling technologies (sensing/projection, I/O) • Zero-delay, zero-mismatch spatial AR • Minimal, ubiquitous & context-aware interactive displays • Laser displays, pneumatic slow displays, roboptics. III. Mediated Self / Augmented Perception / Prosthetics • Augmented sensing & expression • Electronic travel aids, Wearables. How to interact with the digital world? “Enabling”… new realities. Integrate technology and human life. Art / research.

- 5. I. The Physical cloud: data haunted reality

- 6. “Physical Cloud”? Research concept vs. enabling technology. (a) Data on 3D space. Experiments on psychology (space perception and organization) • The body as a “bookshelf”, or body mnemonics(*) • Shared “Memory Palace” (interpersonal spatialized database) • The city, public spaces, etc. as a 3D “bookshelf”. How to interact with “just coordinates” in space? • Proprioceptive interfaces (VSD, Khronos, Virtual HR) • Deformable workspace metaphor • Spidar Screen • Virtual BookShelf (Takashita-san) • Real-time projection mapping • twitterBrain (Philippe, Jordi) • Memory Blocks (2011~). (b) Data on objects (objects as intuitive handles or “icons”: beyond “PUI”). Experiments on psychology • Background picture as organizational scaffold for files and folders (Takashita-kun) • Association by perceived affordances (Invoked Computing). How to interact with objects that do not have I/O interfaces? • Invoked Computing / function projector • Objects with “memory” of action (thermal camera) • On-the-fly I/O interfaces (LSD: Laser Sensing Display technology, to create the sense of “presence” / mixed reality). (*) Jussi Ängeslevä, 2004.

- 7. (a) Interacting with “floating” data

- 8. … the Interaction Metaphor

- 9. KHRONOS PROJECTOR [2005] • Deformable screen / fixed in space • Screen as a controller

- 10. KHRONOS PROJECTOR (media arts, 2004), video excerpt • Fixed screen / deformable (passive haptic feedback) • Screen as a membrane between real and virtual

- 11. Precise control possible [2008]: a physical attribute makes manipulation more precise, even if it is only PASSIVE FORCE FEEDBACK

- 12. Volumetric data visualization & interaction [2006] • Rigid screen / moving in space (proprioception) • Screen as a controller

- 13. Technical details: • Pose estimation markers: retro-reflective paper to reflect IR light, used both for pose estimation and for control (drag, slice, zoom...) • Off-the-shelf camera-projector setup

- 14. Future directions… • Fish Tank VR with head tracking • FTIR multitouch • force feedback display?

- 15. Memory block application: the Portable Desk [2013] (Classic / Jazz / Rock)

- 16. Application to Neurosciences: twitterBrain [2013~]. A shared “physical” database • Common virtual support to query academic publications • Real-time communication between researchers • Proprioception, spatial memory, procedural memory • Multimodal virtual presence of data. Extension: portable “memory block” to store personal data (music, books, etc.); room or public space as a “virtual bookshelf”.

- 17. Spatialized database (for neurosciences) • “Spatialized” academic database • “Spatialized” social network • Big data visualization techniques • “Spatialized” soundtrack library. Augmented Memories [J. Puig, 2012]

- 18. From Deformable Workspace to… Deformable User Interface [2014] • Skeuomorph • Shape and meaning… (beyond the “soap bar smartphone”)

- 19. Virtual Haptic Radar [2008]: haptic interaction with virtual 3D objects “embedded” in the real world… a “force field”. Importance of multi-modal immersion (or partial immersion).

- 20. High Speed Gesture UI for 3D Display (zSpace) [2013]: importance of instant feedback to feel virtual objects as “real”.

- 21. Multimodal Spatial Augmented Reality (MSAR) …How to generate a sense of co-located presence for this “information layer” beyond projection-mapping? • Projection mapping (image) • Sound, vibration, temperature… • Minimal, zero-delay, zero-mismatch interaction… …Towards a Function Projector capable of projecting “affordances”!

- 22. (b) Interacting with (augmented) objects? Towards a function projector

- 23. Invoked Computing (Function Projector) [2011] “Augmented Reality as the graphic front-end of Ubiquity. And Ubiquity as the killer-app of Sustainability.” Bruce Sterling (Wired Blog on “Invoked Computing”)

- 24. Invoked Computing (video excerpt)

- 25. Visual and Tactile + high speed interaction [2012]

- 26. Physical affordances as services? (rechargeable hammer, erasable book...) ...Utopian or dystopian future?

- 27. II. Enabling technologies

- 28. Zero-delay, zero-mismatch for MSAR

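- 29. The importance of real time in HCI. High-speed, low-latency gesture UI: 1000 fps, 30 ms latency → improved control performance through greater immersion and self-recognition (conventional gesture UI: 30 fps, 200 ms latency). Computer or Game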
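A back-of-envelope reading of the latency figures on this slide (1000 fps / 30 ms vs. 30 fps / 200 ms): the spatial mismatch between a moving hand and its feedback is roughly hand speed times end-to-end latency. The sketch below assumes a hand speed of 1 m/s purely for illustration; the function names are hypothetical.

```python
# Rough comparison of the two gesture UIs on the slide.
# The 1 m/s hand speed is an illustrative assumption, not a measured value.


def frame_period_ms(fps: float) -> float:
    """Time between two camera frames, in milliseconds."""
    return 1000.0 / fps


def spatial_lag_mm(hand_speed_m_s: float, latency_ms: float) -> float:
    """Distance the hand travels before the system reacts.

    (m/s) * (ms) = 1e-3 m = mm, so no extra conversion factor is needed.
    """
    return hand_speed_m_s * latency_ms


if __name__ == "__main__":
    hand_speed = 1.0  # m/s, assumed moderate hand motion
    for name, fps, latency in [("high-speed UI", 1000, 30),
                               ("conventional UI", 30, 200)]:
        print(f"{name}: frame period {frame_period_ms(fps):.1f} ms, "
              f"lag {spatial_lag_mm(hand_speed, latency):.0f} mm")
```

At the assumed 1 m/s, the feedback trails the hand by about 200 mm with the conventional UI and about 30 mm with the high-speed one, while the frame period alone drops from about 33 ms to 1 ms.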

- 30. Enabling Technology: Smart Laser Sensing [2003~]. What? • No delay, no misalignment • Projection on mobile, deformable surfaces • Real-time sensing. How? • Smart sensing • Laser Sensing Display. Vision-based sensing (OmniTouch, Microsoft Research; Skin Games) vs. smart sensing

- 31. Lots of applications demonstrated… • AR surveying (distances, angles, depth...) • Ubiquitous display • Medical imaging (IR, polarization...) • Image enhancement (contrast compensation, color...)

- 32. Markerless laser tracking (I/O interface) [2003]: camera-less active tracking principle (2004, 2004)
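One way to picture camera-less active tracking is a laser that scans a small circle around the target while a single photodetector measures the backscattered light; the scan centre is then moved towards the part of the circle that still hits the target. The Python below is a simplified sketch of that idea under stated assumptions (a binary on-target signal, a fixed circle radius, and the hypothetical helpers `circular_saccade` and `track_step`), not the lab's actual controller.

```python
import math


def circular_saccade(cx, cy, radius, n_samples=64):
    """Beam positions along one circular scan centred on (cx, cy)."""
    return [
        (cx + radius * math.cos(2 * math.pi * k / n_samples),
         cy + radius * math.sin(2 * math.pi * k / n_samples))
        for k in range(n_samples)
    ]


def track_step(cx, cy, radius, is_on_target, gain=1.0):
    """One tracking update driven by photodetector readings only (no camera).

    is_on_target(x, y) stands in for thresholding the backscattered laser
    intensity at beam position (x, y). The scan centre moves towards the
    centroid of the samples that hit the target.
    """
    hits = [p for p in circular_saccade(cx, cy, radius) if is_on_target(*p)]
    if not hits:
        return cx, cy  # target lost: keep the current position
    mx = sum(x for x, _ in hits) / len(hits)
    my = sum(y for _, y in hits) / len(hits)
    return cx + gain * (mx - cx), cy + gain * (my - cy)


if __name__ == "__main__":
    # Hypothetical target: a disk of radius 1 centred at the origin.
    def on_disk(x, y):
        return x * x + y * y < 1.0

    cx, cy = 0.8, 0.0  # scan starts near the edge of the target
    for _ in range(10):
        cx, cy = track_step(cx, cy, 0.5, on_disk)
    print(f"scan centre: ({cx:.3f}, {cy:.3f})")
```

Once the whole circle lies on the target, every sample is a hit, the centroid coincides with the centre and the scan stops moving; it only corrects when the target starts to slip out of the circle.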

- 34. scoreLight: a human-sized pick-up head [2009] (in collaboration with Daito Manabe) • Artificial synesthesia • Real-time interaction • New interfaces for musical expression

- 35. Skin Games: body as a controller (Kinect) & body as a display surface…

- 36. Laser GUI: minimal interface [2013~] • Cameraless interactive display (no calibration needed)

- 37. Text projection-mapping at around 200 fps

- 38. Minimal, interactive displays vs. pixelated projection mapping? WORKSHOP at CHI 2013: Steimle J., Benko H., Cassinelli A., Ishii H., Leithinger D., Maes P., Poupyrev I.: Displays Take New Shape: An Agenda for Future Interactive Surfaces. CHI ’13 Extended Abstracts on Human Factors in Computing Systems, ACM Press, 2013.

- 39. Laserinne [2009]: …minimal displays by no means imply small

- 40. Saccade Display + laser sensing [2012~]: real-world special effects… a real-world “shader”? Why laser? • Stronger persistence-of-vision effect • Very long distances! (on a car, etc.) Example of a “context-aware display” [+DIC] (video)

- 41. Light “pet” • Can sense, measure • Draw, print • Signal (danger), indicate • Play • Robot made of light • Extension of the self • Minimal interactive display

- 42. III. Mediated Self / Augmented Perception

- 43. What is an interface? An extension of the Self?

- 44. Haptic Radar for extended spatial awareness [2006] • Optical “antennae” for humans, not devices • New sensorial modality (this is not TVSS) • Extension of the body, 360 degrees
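As an illustration of the “optical antennae” idea, each module can be thought of as a proximity sensor paired with a vibrator, with closer obstacles producing stronger vibration. The linear mapping, the range limits and the function names below are hypothetical placeholders, not the values or code used in the Haptic Radar prototypes.

```python
from typing import List


def vibration_level(range_m: float, max_range_m: float = 0.8,
                    min_range_m: float = 0.05) -> float:
    """Map one module's proximity reading to a vibration intensity in [0, 1].

    Closer obstacles give stronger vibration; beyond max_range_m the module
    stays silent. Both range limits are assumed values.
    """
    if range_m >= max_range_m:
        return 0.0
    if range_m <= min_range_m:
        return 1.0
    return (max_range_m - range_m) / (max_range_m - min_range_m)


def headband_update(readings_m: List[float]) -> List[float]:
    """One update for a ring of modules worn around the head (360 degrees)."""
    return [vibration_level(r) for r in readings_m]


if __name__ == "__main__":
    # Four hypothetical modules: far, at the limit, mid-range, very close.
    print(headband_update([1.2, 0.8, 0.425, 0.05]))
```

Each module acts independently, so the spatial layout of the vibrators on the body carries the direction of the obstacle while the intensity carries its distance.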

- 45. Haptic Radar & HaptiKar (video excerpts)

- 46. Ongoing experiments (50 blind people in Brazil), in collaboration with Professor Eliana Sampaio (CNAM, Paris) • Qualitative results were extraordinary (semi-structured interviews & ANOVA analysis of anxiety trait/state). Presently we are doing: • Quantitative measures (using a simulator: calibrated magnetic compass and virtual reality environment) • Production?

- 47. …work on wearable computing

- 48. Laser Aura: externalizing emotions [2011] Example of a “minimal display” inspired by manga graphical conventions…

- 49. Summarizing… • The vision: Physical Cloud (computing) • Real space as an opportunity to organize data (vs. the “cloud”) • Real objects as “handles” to trigger computing functions • Minimal, context-aware displays (less is more!) • Engineered intuitive physics (generalization of “tangible” interfaces) • Intentional stance (“live” agents instead of control knobs) • Enabling technology? Real-time, zero-delay, zero-mismatch spatial AR will produce a paradigm shift making the digital “analog” again… • Possible instantiation: the light-pet vs. pixelated screens: an alternative vision to the pixelated screen (including HMD); avatar robot made of light; communication enhancement (laser aura); enhanced spatial awareness (signaling, etc.)

Editor's Notes

- CV with Publications & Awards [pdf] / YouTube channel [alvartube] / Flickr [Pixalvaros] / Facebook / SlideShare / Mendeley / @alvarotwit / Professional page at Ishikawa-Lab / Thinking in the Mi(d)st [Blog]. Current affiliation and contact: Assistant Professor, MetaPerception Group, Ishikawa-Oku Lab, The University of Tokyo. Tel: +81-3-5841-6937 / Fax: +81-3-5841-6952

- The group I lead is concerned with human-computer interfaces, using new technology developed in this group as well as in other groups in our lab. We need to develop new technology to expand the design dimensions and enable “new realities”, and we need to implement proof-of-principle prototypes to demonstrate these concepts. Working in the field of the media arts offers a space of “free” experimentation in an uncontrolled environment, which provides lots of important feedback. LINK: MetaPerception Group

- As of 2014, there are four groups at the Ishikawa-Watanabe Laboratory, and the keyword is “high-speed” interaction. The Meta Perception group was formerly concerned with “optoelectronics” applications (optical computing, optical networking). Since 2004, the group has radically redefined its goals: it now uses technology developed in other groups (AND in this group) to build futuristic prototypes of human-computer interfaces.

- In this presentation I will concentrate mainly on my latest research areas. The “Physical cloud” is about how to interact PHYSICALLY with the digital world. Enabling technologies are the state-of-the-art technologies used to make these concepts a reality (i.e., virtual data that looks “real”). Mediated Self is basically about wearables, prosthetics and augmented perception. It is related to the above (interfaces to communicate with the “virtual digital cloud”), but it is also about interfaces that enhance human-to-human communication and human-to-world interaction (i.e., augmented perception), including assistive technologies.

- Have you noticed? Today’s smartphones look like soap bars. I am sure this form factor will be a thing of the past very soon. The smartphone (or tablet) is just more of the same: a tiny computer with a rectangular, pixelated window (this “window” is an opening to the digital world). Does it have to be this way? We are naturally capable of telling function from shape, or meaning from place, in a world frame of reference. This is lost in today’s “window” paradigm: body frame of reference vs. world frame of reference. Is “wearing” them the solution (Google Glass)? Perhaps not, because we retain the paradigm of the “squarish, pixelated window” to the virtual world (the screen). What is needed is to “blend” the real and the digital, and to manipulate both seamlessly, using the BODY.

- Example projects: Khronos Projector / Deformable Desktop / Volume Slicing Display & Knowledge Voxels. Key concepts: • virtual data as a “volume” in space, or a “point” in space • proprioception to make the exploration more intuitive • the screen (the “window”) should be more than an opening to the digital: it should be the LOCUS of interaction • virtual and real worlds “share” a common frame of reference that is MAINTAINED in front of and behind the screen (i.e., there is no “mouse” in one place and a “screen” in another: the “membrane”…). “Tangible interfaces” can be seen as a subset of “physical/reality-centered” interfaces.

- THE METAPHORS: 1) space as a knowledge container; 2) “alignment” between real and virtual coordinate frames. We are naturally capable of organizing stuff in SPACE before we categorize it more deeply, semantically speaking. H. Poincaré: to know where something is, is to imagine the series of motions you need to perform to reach it. PROCEDURAL MEMORY vs. declarative memory: how can procedural memory be used to “annotate” reality (associate it with other things, using something like multimodal virtual tags)? HUMAN MEMORY IS DEEPLY SUBJECT TO CONTEXT. Use of real space as a “scaffold” for organizing and retrieving information. MEMORY BLOCKS: information visualization and visual analytics TODAY (interactive + loads of data). Ex: genetics.

- http://www.k2.t.u-tokyo.ac.jp/perception/KhronosProjector/index-e.html Same as the Volume Slicing Display, but the screen is FIXED and DEFORMABLE. It was created as a media art project, and the content is TIME-LAPSE PHOTOGRAPHY or VIDEO.
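A minimal sketch of the time-slicing idea, assuming the deformation of the screen is available as a normalized depth map and the content as a stack of video frames: each pixel shows the frame whose index is proportional to how far the screen is pushed at that point. The nearest-frame lookup, the “deeper means later” direction and the helper name `khronos_image` are assumptions made for illustration.

```python
from typing import List

Frame = List[List[float]]  # frame[y][x] -> pixel value


def khronos_image(frames: List[Frame], depth: List[List[float]]) -> Frame:
    """Compose the displayed image from a video "cube" and a depth map.

    depth[y][x] is the normalized push depth of the screen at that pixel
    (0 = untouched, 1 = pushed all the way). Deeper regions show later
    frames, so pressing the screen "digs" into time locally.
    """
    n_frames = len(frames)
    height, width = len(depth), len(depth[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            d = min(max(depth[y][x], 0.0), 1.0)  # clamp noisy sensor values
            t = round(d * (n_frames - 1))        # nearest frame index
            out[y][x] = frames[t][y][x]
    return out


if __name__ == "__main__":
    # Three 1x2 frames whose pixel values equal the frame index.
    frames = [[[t, t]] for t in range(3)]
    print(khronos_image(frames, [[0.0, 1.0]]))
```

A real implementation would more likely blend neighbouring frames rather than snap to the nearest one, to avoid visible steps in the time surface.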

- http://www.k2.t.u-tokyo.ac.jp/members/alvaro/Khronos/

- http://www.k2.t.u-tokyo.ac.jp/perception/DeformableWorkspace/index-e.html Key concepts: • a shared “frame of reference” between the real and virtual worlds • the screen is a membrane between the real and the virtual world • a physical attribute makes interaction more precise, even if it is only PASSIVE FORCE FEEDBACK

- Now the screen is rigid, but it can move in space: full-body proprioception helps the user better understand the content. The screen is still the locus of control (there is no “mouse”). http://www.k2.t.u-tokyo.ac.jp/perception/VolumeSlicingDisplay/index-e.html
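The “screen as a slice through the data” idea can be sketched as sampling a voxel volume on the plane defined by the tracked screen. The code below assumes three markers on three corners of the screen have already been located in volume coordinates (the pose estimation step itself is not shown) and uses nearest-neighbour sampling; `slice_volume` is a hypothetical helper, not the Volume Slicing Display code.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Volume = List[List[List[float]]]  # volume[z][y][x]


def slice_volume(volume: Volume, origin: Vec3, corner_u: Vec3,
                 corner_v: Vec3, res: int) -> List[List[float]]:
    """Sample `volume` on the rectangle spanned by three screen corners.

    origin -> corner_u spans the image width and origin -> corner_v its
    height, all in voxel units. Nearest-neighbour sampling; samples that
    fall outside the volume read as 0. `res` must be at least 2.
    """
    du = [corner_u[k] - origin[k] for k in range(3)]
    dv = [corner_v[k] - origin[k] for k in range(3)]
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    image = []
    for j in range(res):
        t = j / (res - 1)
        row = []
        for i in range(res):
            s = i / (res - 1)
            # Point on the screen plane, rounded to the nearest voxel.
            x, y, z = (round(origin[k] + s * du[k] + t * dv[k]) for k in range(3))
            inside = 0 <= x < width and 0 <= y < height and 0 <= z < depth
            row.append(volume[z][y][x] if inside else 0)
        image.append(row)
    return image


if __name__ == "__main__":
    # Toy 4x4x4 volume whose value is simply the z index.
    vol = [[[z for _x in range(4)] for _y in range(4)] for z in range(4)]
    # Tilting the screen through the volume shows increasing depth across each row.
    print(slice_volume(vol, (0, 0, 0), (3, 0, 3), (0, 3, 0), 4))
```

Because the slice is recomputed from the marker positions every frame, moving or tilting the physical screen is itself the navigation gesture: there is no separate controller.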

- The latest concrete application of these ideas: superimposing on reality not only a “virtual object” but a VIRTUAL SPATIALIZED DATABASE, and also a space for “chatting” and exchanging information: a SPATIALIZED SOCIAL NETWORK. http://www.k2.t.u-tokyo.ac.jp/perception/MemoryBlocks/index-e.html
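A spatialized database can be pictured as items anchored to room coordinates and retrieved by reaching for them. The classes below are a hypothetical in-memory sketch (the names, the metre units and the 15 cm reach radius are assumptions), showing only the core lookup: the nearest item within reach of a tracked hand.

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]


@dataclass
class SpatialItem:
    label: str       # e.g. a paper title or a chat message
    position: Point  # where it "floats" in the room, in metres


@dataclass
class SpatialDatabase:
    """Hypothetical store that anchors items to room coordinates."""
    items: List[SpatialItem] = field(default_factory=list)

    def put(self, label: str, position: Point) -> None:
        self.items.append(SpatialItem(label, position))

    def reach(self, hand: Point, radius: float = 0.15) -> Optional[SpatialItem]:
        """Return the item closest to the tracked hand, if within `radius`."""
        best, best_d = None, radius
        for item in self.items:
            d = math.dist(hand, item.position)
            if d <= best_d:
                best, best_d = item, d
        return best


if __name__ == "__main__":
    db = SpatialDatabase()
    db.put("paper A", (0.0, 1.2, 0.5))
    db.put("message from B", (1.0, 1.0, 0.5))
    found = db.reach((0.05, 1.2, 0.5))
    print(found.label if found else None)
```

The linear scan keeps the sketch short; a room full of items would call for a spatial index (e.g. a k-d tree or a uniform grid) instead.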

- http://www.k2.t.u-tokyo.ac.jp/perception/MemoryBlocks/index-e.html

- The idea is to cover the smartphone with a silicone case, couple light (from the screen or an LED) inside it, and use the smartphone camera to classify the patterns of internal reflections (using an SVM). Example: sending emoticons based on the physical manipulation of a plastic avatar. http://www.k2.t.u-tokyo.ac.jp/perception/deformableInterface/index-e.html
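The note mentions classifying internal-reflection patterns with an SVM. As a self-contained stand-in, the sketch below trains a tiny linear soft-margin SVM by subgradient descent on the hinge loss; the three brightness features and the two gesture classes are invented toy data, and a real pipeline would more likely rely on an established SVM library and richer image features.

```python
from typing import List, Sequence, Tuple


def train_linear_svm(xs: Sequence[Sequence[float]], ys: Sequence[int],
                     lam: float = 0.01, epochs: int = 200, lr: float = 0.1
                     ) -> Tuple[List[float], float]:
    """Soft-margin linear SVM via subgradient descent on the hinge loss.

    Labels must be +1 / -1. Returns (weights, bias).
    """
    w = [0.0] * len(xs[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(xs, ys):
            margin = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            # L2 regularization shrinks w at every step ...
            w = [wi - lr * lam * wi for wi in w]
            # ... and the hinge term acts only on samples inside the margin.
            if margin < 1:
                w = [wi + lr * y * xi for wi, xi in zip(w, x)]
                b += lr * y
    return w, b


def predict(w: List[float], b: float, x: Sequence[float]) -> int:
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0 else -1


if __name__ == "__main__":
    # Hypothetical features: mean brightness of three regions of the camera
    # image; +1 = "squeeze", -1 = "twist" (invented gesture labels).
    xs = [[0.9, 0.2, 0.1], [0.8, 0.3, 0.2], [0.85, 0.25, 0.15],
          [0.2, 0.8, 0.7], [0.1, 0.9, 0.8], [0.15, 0.85, 0.75]]
    ys = [1, 1, 1, -1, -1, -1]
    w, b = train_linear_svm(xs, ys)
    print(predict(w, b, [0.88, 0.22, 0.12]), predict(w, b, [0.12, 0.88, 0.78]))
```

Each predicted class would then be mapped to an action, such as sending a particular emoticon; more than two manipulations would need a multi-class scheme such as one-vs-rest.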

- An example beyond projection mapping: tactile feedback from virtual objects. This is like “feeling ghosts”: force fields around co-located virtual objects. Details: tracking is done using ultrasound (time differences between pulses). http://www.k2.t.u-tokyo.ac.jp/perception/VirtualHapticRadar/index-e.html
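For the ultrasound tracking, a simple model treats each measured delay as a one-way time of flight (assuming the emitter and receivers are synchronized), converts it to a distance, and trilaterates the hand position from three receivers; a vibration level then grows as the hand approaches a virtual object. This is a 2D sketch with hypothetical receiver positions, falloff distance and helper names, not the Virtual Haptic Radar implementation; a true time-difference-of-arrival solver would be formulated differently.

```python
import math
from typing import Tuple

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 degrees C


def tof_to_distance(tof_s: float) -> float:
    """One-way time of flight of an ultrasound pulse -> distance in metres."""
    return SPEED_OF_SOUND * tof_s


def trilaterate_2d(r0: float, r1: float, r2: float,
                   d: float, e: Tuple[float, float]) -> Tuple[float, float]:
    """Position from distances to receivers at (0, 0), (d, 0) and e = (ex, ey).

    Subtracting the circle equations pairwise gives two linear equations;
    ey must be non-zero.
    """
    ex, ey = e
    x = (r0 ** 2 - r1 ** 2 + d ** 2) / (2 * d)
    y = (r0 ** 2 - r2 ** 2 + ex ** 2 + ey ** 2 - 2 * ex * x) / (2 * ey)
    return x, y


def field_intensity(p, center, radius, falloff=0.10) -> float:
    """Vibration level in [0, 1] for a virtual sphere's "force field".

    1 on or inside the sphere, fading linearly to 0 over `falloff` metres.
    """
    gap = math.dist(p, center) - radius
    if gap <= 0:
        return 1.0
    return max(0.0, 1.0 - gap / falloff)


if __name__ == "__main__":
    receivers = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
    hand = (0.3, 0.4)  # simulated ground truth used to fake the measurements
    tofs = [math.dist(hand, r) / SPEED_OF_SOUND for r in receivers]
    r0, r1, r2 = (tof_to_distance(t) for t in tofs)
    est = trilaterate_2d(r0, r1, r2, d=1.0, e=(0.0, 1.0))
    print(est, field_intensity(est, center=(0.3, 0.5), radius=0.05))
```

Keeping the tracking and the haptic mapping separate means the same position estimate can drive sound or temperature cues as well, in line with the multimodal approach described above.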

- There is no space for it here, but I have been working on media art projects involving lots of wearable technology to “extend” the body, or to “MIX” separate bodies into a LARGER PHYSICAL ENTITY (for dancers, psychology experiments, etc.). Examples: scratchBelt: http://www.k2.t.u-tokyo.ac.jp/members/alvaro/scratchBelt/index.html Light Arrays:

- Example of MINIMAL DISPLAYS: cartoon-like graphics http://www.k2.t.u-tokyo.ac.jp/perception/laserAura/index-e.html