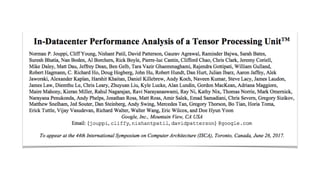

Slides for In-Datacenter Performance Analysis of a Tensor Processing Unit


These slides summarize the motivation for the Tensor Processing Unit (TPU): DNN-based workloads were consuming a large and growing share of datacenter compute. They describe how Norman Jouppi and others at Google designed the TPU to run DNN workloads far more efficiently than contemporary CPUs and GPUs, with 30-80x higher performance per watt (TOPS/watt). They also cover the TPU architecture and experimental results showing that it significantly outperformed GPUs on latency for DNN inference tasks.

- 2. Motivation for TPU
  - 2006: “Just run DNNs on our CPU datacenter. It’s basically free.”
  - 2013: “3 minutes of DNN-based voice search == 2x more datacenter compute.”

- 3. The Players
  - Norman P. Jouppi and his two musketeers.
  - David Patterson
  - 70+ other Google engineers

- 4. Tensor Processing Unit (TPU)
  - 30-80x TOPS/watt vs. 2015 CPUs and GPUs.
  - 8 GiB DRAM.
  - 8-bit fixed point.
  - 256x256 MAC unit.
  - Support for data reordering, matrix multiply, activation, pooling, and normalization.
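
To make the slide 4 figures concrete, here is a minimal Python sketch of what 8-bit fixed-point multiply-accumulate means, plus the peak-throughput arithmetic for a 256x256 MAC array. The symmetric quantization scheme, the function names, and the wide accumulator are illustrative assumptions, not the TPU's documented datapath. The 700 MHz clock is not on the slides; it is the figure reported in the paper.

```python
# Illustrative sketch only: names and the quantization scheme are assumptions.

def quantize(values, scale):
    """Map floats to signed 8-bit integers using a symmetric scale,
    clamping anything outside [-128, 127]."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def int8_matmul(a, b):
    """Multiply two integer matrices with a plain multiply-accumulate loop.
    Python ints are unbounded, standing in for a wide hardware accumulator."""
    rows, inner, cols = len(a), len(b), len(b[0])
    out = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = 0
            for k in range(inner):
                acc += a[i][k] * b[k][j]  # one MAC operation
            out[i][j] = acc
    return out


# Peak throughput of a 256x256 MAC array.
MAC_ROWS = 256
MAC_COLS = 256
CLOCK_HZ = 700e6       # assumption: clock rate taken from the paper, not the slides
OPS_PER_MAC = 2        # one multiply plus one add

peak_tops = MAC_ROWS * MAC_COLS * OPS_PER_MAC * CLOCK_HZ / 1e12

if __name__ == "__main__":
    print(quantize([0.5, -1.0, 0.25, 40.0], scale=0.25))
    print(int8_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
    print(f"peak throughput: {peak_tops:.2f} TOPS")
```

Under these assumptions the array has 65,536 MAC units and a peak of roughly 92 TOPS, which is the number usually quoted for this chip.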

- 5. Application Testbed: “The unexpected desire for TPUs by many Google services combined with the preference for low response time changed the equation, with application writers often opting for reduced latency over waiting for bigger batches to accumulate.”

- 6. TPU Block Diagram & Floor Plan

- 7. Experimental Testbed 8x K80 GPUs

- 9. Rooflines of TPU with DNN Apps
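
The roofline plots on slide 9 rest on one formula: attainable throughput is the lesser of the compute peak and memory bandwidth times operational intensity. The sketch below is a generic illustration of that model, not a reconstruction of the slide's plot. The function name is hypothetical, and the 92 TOPS peak and 34 GB/s memory bandwidth are taken from the paper rather than from these slides.

```python
# Generic roofline model; constants are assumptions taken from the paper.

def attainable_ops_per_s(peak_ops_per_s, mem_bytes_per_s, ops_per_byte):
    """Roofline bound: performance is capped either by the compute peak
    or by how fast memory can feed the compute units."""
    return min(peak_ops_per_s, mem_bytes_per_s * ops_per_byte)


PEAK_OPS_PER_S = 92e12     # assumption: TPU peak reported in the paper
MEM_BYTES_PER_S = 34e9     # assumption: weight-memory bandwidth reported in the paper

# Operational intensity at which the memory-bound slope meets the flat compute roof.
ridge_ops_per_byte = PEAK_OPS_PER_S / MEM_BYTES_PER_S

if __name__ == "__main__":
    for intensity in (10, 100, 1000, 10000):
        bound = attainable_ops_per_s(PEAK_OPS_PER_S, MEM_BYTES_PER_S, intensity)
        kind = "compute-bound" if intensity >= ridge_ops_per_byte else "memory-bound"
        print(f"{intensity:>6} ops/byte -> {bound / 1e12:6.2f} TOPS ({kind})")
```

With these numbers the ridge point sits near 2,700 operations per byte, so an application with lower operational intensity is limited by memory bandwidth rather than by the MAC array.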

- 10. App breakdown by Performance Counters

- 12. Programming the TPU Programming FPGAs TensorFlow graph TPU host instructions TPU bitstream

- 13. NVIDIA’s Rebuttal to the TPU https://blogs.nvidia.com/blog/2017/04/10/ai-drives-rise-accelerated-computing-datacenter/

- 14. “Patterson” Discussion
  1. Fallacy: NN inference applications in data centers value throughput as much as response time.
  2. Fallacy: The K80 GPU architecture is a good match to NN inference.
  3. Pitfall: Architects have neglected important NN tasks.
  4. Pitfall: For NN hardware, Inferences Per Second (IPS) is an inaccurate summary performance metric.
  5. Fallacy: The K80 GPU results would be much better if Boost mode were enabled.
  6. Fallacy: CPU and GPU results would be comparable to the TPU if we used them more efficiently or compared to newer versions.
  7. Pitfall: Performance counters added as an afterthought for NN hardware.
  8. Fallacy: After two years of software tuning, the only path left to increase TPU performance is hardware upgrades.

- 15. Interesting quote: “CNNs constitute only about 5% of the representative NN workload for Google. More attention should be paid to MLPs and LSTMs. Repeating history, it’s similar to when many architects concentrated on floating-point performance when most mainstream workloads turned out to be dominated by integer operations.”