Stochastic Processes - part 5

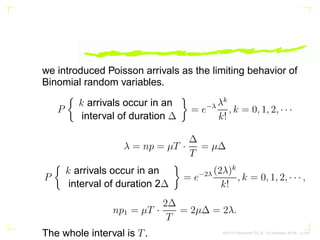

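- 1. Earlier we introduced Poisson arrivals as the limiting behavior of binomial random variables. With $T$ the whole interval, $n = \mu T$, $p = \Delta/T$ and $p_1 = 2\Delta/T$:
  $$P\{k \text{ arrivals occur in an interval of duration } \Delta\} = e^{-\lambda}\frac{\lambda^k}{k!}, \quad k = 0, 1, 2, \ldots, \qquad \lambda = np = \mu T \cdot \frac{\Delta}{T} = \mu\Delta,$$
  $$P\{k \text{ arrivals occur in an interval of duration } 2\Delta\} = e^{-2\lambda}\frac{(2\lambda)^k}{k!}, \quad k = 0, 1, 2, \ldots, \qquad np_1 = \mu T \cdot \frac{2\Delta}{T} = 2\mu\Delta = 2\lambda.$$
  AKU-EE/Stochastic/HA, 1st Semester, 85-86 – p.1/63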
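
The binomial-to-Poisson limit can be checked numerically. The following is a minimal sketch, assuming an illustrative value $\lambda = 2$ and helper names (`binomial_pmf`, `poisson_pmf`, `max_gap`) that are not part of the original notes. It holds $\lambda = np$ fixed while $n$ grows and reports the largest pointwise gap between the two probability mass functions.

```python
import math


def binomial_pmf(k, n, p):
    """P{k successes in n Bernoulli(p) trials}."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)


def poisson_pmf(k, lam):
    """P{k arrivals} for a Poisson random variable with parameter lam."""
    return math.exp(-lam) * lam**k / math.factorial(k)


def max_gap(n, lam, kmax=20):
    """Largest gap between Binomial(n, lam/n) and Poisson(lam) over k = 0..kmax."""
    p = lam / n
    return max(abs(binomial_pmf(k, n, p) - poisson_pmf(k, lam)) for k in range(kmax + 1))


# Hold lam = n*p fixed while n grows; the gap should shrink.
gaps = {n: max_gap(n, lam=2.0) for n in (10, 100, 1000, 10000)}
for n, gap in gaps.items():
    print(f"n = {n:5d}  max |binomial - poisson| = {gap:.2e}")
```

The gap shrinks roughly in proportion to $1/n$, which is the sense in which the Poisson law is the limit of the binomial one.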

- 2. Poisson arrivals over an interval form a Poisson random variable whose parameter depends on the duration of that interval. Moreover, because of the Bernoulli nature of the underlying basic random arrivals, events over nonoverlapping intervals are independent.

- 3. We shall use these two key observations to define a Poisson process formally. $X(t) = n(0, t)$ represents a Poisson process if (i) the number of arrivals $n(t_1, t_2)$ in an interval $(t_1, t_2)$ of length $t = t_2 - t_1$ is a Poisson random variable with parameter $\lambda t$:
  $$P\{n(t_1, t_2) = k\} = e^{-\lambda t}\frac{(\lambda t)^k}{k!}, \quad k = 0, 1, 2, \ldots, \quad t = t_2 - t_1.$$

- 4. (ii) If the intervals $(t_1, t_2)$ and $(t_3, t_4)$ are nonoverlapping, then the random variables $n(t_1, t_2)$ and $n(t_3, t_4)$ are independent.

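- 5. Since $n(0, t) \sim P(\lambda t)$,
  $$E[X(t)] = E[n(0, t)] = \lambda t, \qquad E[X^2(t)] = E[n^2(0, t)] = \lambda t + \lambda^2 t^2.$$
  For $t_1 \le t_2$, writing $X(t_2) = X(t_1) + n(t_1, t_2)$ with independent terms gives $E[X(t_1)X(t_2)] = \lambda t_1 + \lambda^2 t_1^2 + \lambda t_1 \cdot \lambda(t_2 - t_1) = \lambda^2 t_1 t_2 + \lambda t_1$, so in general
  $$R_{XX}(t_1, t_2) = \lambda^2 t_1 t_2 + \lambda \min(t_1, t_2).$$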

- 6. Hence the Poisson process $X(t)$ is not a wide-sense stationary (WSS) process: its mean $\lambda t$ varies with $t$, and $R_{XX}(t_1, t_2)$ is not a function of $t_1 - t_2$ alone.

- 7. Define a binary level process $Y(t) = (-1)^{X(t)}$ that represents a telegraph signal. Notice that the transition instants $\{t_i\}$ are random. Although $X(t)$ does not represent a WSS process, its derivative $X'(t)$ does represent a WSS process.

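- 8. The mean of the derivative is
  $$\mu_{X'}(t) = \frac{d\mu_X(t)}{dt} = \frac{d(\lambda t)}{dt} = \lambda, \text{ a constant,}$$
  and
  $$R_{XX'}(t_1, t_2) = \frac{\partial R_{XX}(t_1, t_2)}{\partial t_2} = \begin{cases} \lambda^2 t_1, & t_1 \le t_2 \\ \lambda^2 t_1 + \lambda, & t_1 > t_2 \end{cases} \; = \lambda^2 t_1 + \lambda U(t_1 - t_2),$$
  $$R_{X'X'}(t_1, t_2) = \frac{\partial R_{XX'}(t_1, t_2)}{\partial t_1} = \lambda^2 + \lambda\,\delta(t_1 - t_2).$$
  It follows that $X'(t)$ is a WSS process. Thus nonstationary inputs to linear systems can lead to WSS outputs.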

- 9. Sum of Poisson Processes: If $X_1(t)$ and $X_2(t)$ represent two independent Poisson processes with parameters $\lambda_1 t$ and $\lambda_2 t$, then their sum $X_1(t) + X_2(t)$ is also a Poisson process with parameter $(\lambda_1 + \lambda_2)t$.
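
The count distribution of the sum can be verified by discrete convolution. This is a small sketch with illustrative rates (`lam1`, `lam2`, `t` are assumed values, not from the notes). It checks only the marginal law of $X_1(t) + X_2(t)$ at a fixed $t$, not the independence of increments.

```python
import math


def poisson_pmf(k, mean):
    """Poisson probability of k events when the expected count is `mean`."""
    return math.exp(-mean) * mean**k / math.factorial(k)


def pmf_of_sum(k, mean1, mean2):
    """P{X1 + X2 = k} for independent Poisson counts, by direct convolution."""
    return sum(poisson_pmf(j, mean1) * poisson_pmf(k - j, mean2) for j in range(k + 1))


lam1, lam2, t = 1.5, 0.7, 2.0  # illustrative rates and interval length
max_err = max(
    abs(pmf_of_sum(k, lam1 * t, lam2 * t) - poisson_pmf(k, (lam1 + lam2) * t))
    for k in range(30)
)
print(f"largest deviation from Poisson((lam1 + lam2) t): {max_err:.2e}")
```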

- 10. Random selection of Poisson Points: Let $t_1, t_2, \ldots, t_i, \ldots$ represent random arrival points associated with a Poisson process $X(t)$ with parameter $\lambda t$, and associated with each arrival point define an independent Bernoulli random variable $N_i$, where $P(N_i = 1) = p$, $P(N_i = 0) = q = 1 - p$.

- 11. Define
  $$Y(t) = \sum_{i=1}^{X(t)} N_i, \qquad Z(t) = \sum_{i=1}^{X(t)} (1 - N_i) = X(t) - Y(t).$$
  Then $Y(t)$ and $Z(t)$ are independent Poisson processes with parameters $\lambda p t$ and $\lambda q t$ respectively.

- 12. Proof: By the total probability theorem,
  $$P\{Y(t) = k\} = \sum_{n=k}^{\infty} P\{Y(t) = k \mid X(t) = n\}\,P\{X(t) = n\}.$$
  But given $X(t) = n$, we have $Y(t) = \sum_{i=1}^{n} N_i \sim B(n, p)$, so that
  $$P\{Y(t) = k \mid X(t) = n\} = \binom{n}{k} p^k q^{n-k}, \quad 0 \le k \le n.$$

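- 13. Since $P\{X(t) = n\} = e^{-\lambda t}\dfrac{(\lambda t)^n}{n!}$, substituting gives
  $$P\{Y(t) = k\} = e^{-\lambda t}\sum_{n=k}^{\infty} \frac{n!}{(n-k)!\,k!}\, p^k q^{n-k}\, \frac{(\lambda t)^n}{n!} = \frac{p^k e^{-\lambda t}}{k!}(\lambda t)^k \underbrace{\sum_{n=k}^{\infty} \frac{(q\lambda t)^{n-k}}{(n-k)!}}_{\exp(q\lambda t)} = \frac{(\lambda p t)^k e^{-(1-q)\lambda t}}{k!} = e^{-\lambda p t}\frac{(\lambda p t)^k}{k!}, \quad k = 0, 1, 2, \ldots$$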

- 14. More generally,
  $$P\{Y(t) = k, Z(t) = m\} = P\{Y(t) = k, X(t) - Y(t) = m\} = P\{Y(t) = k, X(t) = k + m\} = P\{Y(t) = k \mid X(t) = k + m\}\,P\{X(t) = k + m\}$$
  $$= \binom{k+m}{k} p^k q^m \cdot e^{-\lambda t}\frac{(\lambda t)^{k+m}}{(k+m)!} = \underbrace{e^{-\lambda p t}\frac{(\lambda p t)^k}{k!}}_{P\{Y(t) = k\}}\;\underbrace{e^{-\lambda q t}\frac{(\lambda q t)^m}{m!}}_{P\{Z(t) = m\}} = P\{Y(t) = k\}\,P\{Z(t) = m\},$$
  which shows that $Y(t)$ and $Z(t)$ are independent.
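
The factorization of the joint distribution can be confirmed numerically. The sketch below uses assumed illustrative values for $\lambda$, $p$ and $t$. It evaluates the joint probability through the conditional binomial form and compares it with the product of the two Poisson marginals.

```python
import math


def poisson_pmf(k, mean):
    """Poisson probability of k events when the expected count is `mean`."""
    return math.exp(-mean) * mean**k / math.factorial(k)


def joint_pmf(k, m, lam, p, t):
    """P{Y = k, Z = m} computed as P{Y = k | X = k + m} * P{X = k + m}."""
    q = 1.0 - p
    return math.comb(k + m, k) * p**k * q**m * poisson_pmf(k + m, lam * t)


lam, p, t = 3.0, 0.3, 1.5  # illustrative values only
worst = max(
    abs(joint_pmf(k, m, lam, p, t)
        - poisson_pmf(k, lam * p * t) * poisson_pmf(m, lam * (1 - p) * t))
    for k in range(15)
    for m in range(15)
)
print(f"largest |joint - product of marginals| = {worst:.2e}")
```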

- 15. Thus random selection of Poisson points preserves the Poisson nature of the resulting processes. However, deterministic selection from a Poisson process destroys the Poisson property for the resulting processes.

- 16. Inter-arrival Distribution for Poisson Processes: Let $\tau_1$ denote the time interval (delay) to the first arrival from any fixed point $t_0$. Then
  $$F_{\tau_1}(t) = P\{\tau_1 \le t\} = P\{X(t) > 0\} = P\{n(t_0, t_0 + t) > 0\} = 1 - P\{n(t_0, t_0 + t) = 0\} = 1 - e^{-\lambda t},$$
  $$f_{\tau_1}(t) = \frac{dF_{\tau_1}(t)}{dt} = \lambda e^{-\lambda t}, \quad t \ge 0.$$
  Thus $\tau_1$ is an exponential random variable with parameter $\lambda$, so that $E\{\tau_1\} = 1/\lambda$.

- 17. Similarly, let $t_n$ represent the $n$th random arrival point for a Poisson process. Then
  $$F_{t_n}(t) = P\{t_n \le t\} = P\{X(t) \ge n\} = 1 - P\{X(t) < n\} = 1 - \sum_{k=0}^{n-1} \frac{(\lambda t)^k}{k!} e^{-\lambda t},$$
  $$f_{t_n}(x) = \frac{dF_{t_n}(x)}{dx} = -\sum_{k=1}^{n-1} \frac{\lambda(\lambda x)^{k-1}}{(k-1)!} e^{-\lambda x} + \sum_{k=0}^{n-1} \frac{\lambda(\lambda x)^k}{k!} e^{-\lambda x} = \frac{\lambda^n x^{n-1}}{(n-1)!} e^{-\lambda x}, \quad x \ge 0.$$

- 18. This is a gamma density function; i.e., the waiting time to the $n$th Poisson arrival instant has a gamma distribution. Moreover,
  $$t_n = \sum_{i=1}^{n} \tau_i,$$
  where $\tau_i$ is the random inter-arrival duration between the $(i-1)$th and $i$th events.
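
As a consistency check on the two formulas above, the gamma density can be integrated numerically and compared with the closed-form distribution function. This is a sketch under assumed values ($n = 4$, $\lambda = 2$, $t = 1.7$), using a hand-written trapezoid rule rather than any particular numerical library.

```python
import math


def waiting_time_cdf(n, lam, t):
    """F_{t_n}(t) = 1 - sum_{k<n} (lam t)^k / k! * exp(-lam t)."""
    return 1.0 - math.exp(-lam * t) * sum((lam * t) ** k / math.factorial(k) for k in range(n))


def gamma_pdf(n, lam, x):
    """f_{t_n}(x) = lam^n x^(n-1) / (n-1)! * exp(-lam x)."""
    return lam**n * x ** (n - 1) / math.factorial(n - 1) * math.exp(-lam * x)


def trapezoid(f, a, b, steps=20_000):
    """Composite trapezoid rule on [a, b]."""
    h = (b - a) / steps
    total = 0.5 * (f(a) + f(b))
    for i in range(1, steps):
        total += f(a + i * h)
    return total * h


n, lam, t = 4, 2.0, 1.7  # illustrative values only
cdf_closed = waiting_time_cdf(n, lam, t)
cdf_numeric = trapezoid(lambda x: gamma_pdf(n, lam, x), 0.0, t)
print(f"closed form: {cdf_closed:.8f}   integral of density: {cdf_numeric:.8f}")
```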

- 19. Hence, using their characteristic functions, it follows that all inter-arrival durations of a Poisson process are independent exponential random variables with common parameter $\lambda$:
  $$f_{\tau_i}(t) = \lambda e^{-\lambda t}, \quad t \ge 0.$$

- 20. Thus if we systematically tag every $m$th outcome of a Poisson process $X(t)$ with parameter $\lambda t$ to generate a new process $e(t)$, then the inter-arrival time between any two events of $e(t)$ is a gamma random variable. The mean inter-arrival time of $e(t)$ is $m/\lambda$, and if $\lambda = m\mu$ it equals $1/\mu$. The inter-arrival time of $e(t)$ in that case represents an Erlang-$m$ random variable, and $e(t)$ an Erlang-$m$ process.
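
A short simulation illustrates the tagging construction. It is a sketch with assumed values ($\lambda = 2$, $m = 3$, a fixed seed) and a hypothetical helper name: each gap of the tagged process is built as the sum of $m$ consecutive exponential inter-arrival times, and the sample mean is compared with $m/\lambda$.

```python
import random


def every_mth_gaps(lam, m, count, rng):
    """Inter-arrival times of the process that keeps every m-th Poisson arrival.

    Each gap is the sum of m independent Exponential(lam) inter-arrival times.
    """
    return [sum(rng.expovariate(lam) for _ in range(m)) for _ in range(count)]


rng = random.Random(0)  # fixed seed so the run is repeatable
lam, m = 2.0, 3         # illustrative values only
gaps = every_mth_gaps(lam, m, 20_000, rng)
mean_gap = sum(gaps) / len(gaps)
print(f"sample mean gap = {mean_gap:.4f}   theoretical m / lam = {m / lam:.4f}")
```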

- 21. In summary, if Poisson arrivals are randomly redirected to form new queues, then each such queue generates a new Poisson process. However, if the arrivals are systematically redirected (1st arrival to 1st counter, 2nd arrival to 2nd counter, ..., $m$th arrival to $m$th counter, $(m+1)$th arrival to 1st counter, and so on), then the new subqueues form Erlang-$m$ processes.

- 22. Poisson Departures between Exponential Inter-arrivals: Let $X(t) \sim P(\lambda t)$ and $Y(t) \sim P(\mu t)$ represent two independent Poisson processes, called the arrival and departure processes.

- 23. Let $Z$ represent the random interval between any two successive arrivals of $X(t)$. $Z$ has an exponential distribution with parameter $\lambda$. Let $N$ represent the number of "departures" of $Y(t)$ between any two successive arrivals of $X(t)$. Then from the Poisson nature of the departures we have
  $$P\{N = k \mid Z = t\} = e^{-\mu t}\frac{(\mu t)^k}{k!}.$$

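- 24. Averaging over $Z$,
  $$P\{N = k\} = \int_0^\infty P\{N = k \mid Z = t\}\, f_Z(t)\,dt = \int_0^\infty e^{-\mu t}\frac{(\mu t)^k}{k!}\,\lambda e^{-\lambda t}\,dt = \frac{\lambda}{k!}\int_0^\infty (\mu t)^k e^{-(\lambda+\mu)t}\,dt$$
  $$= \frac{\lambda}{\lambda+\mu}\left(\frac{\mu}{\lambda+\mu}\right)^k \frac{1}{k!}\underbrace{\int_0^\infty x^k e^{-x}\,dx}_{k!} = \frac{\lambda}{\lambda+\mu}\left(\frac{\mu}{\lambda+\mu}\right)^k, \quad k = 0, 1, 2, \ldots$$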

- 25. Thus the random variable $N$ has a geometric distribution. If customers come in and get out according to two independent Poisson processes at a counter, then the number of arrivals between any two successive departures has a geometric distribution. Similarly, the number of departures between any two successive arrivals also represents another geometric distribution.
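
The geometric form can be checked by evaluating the mixing integral numerically. The following sketch assumes illustrative rates $\lambda = 1$, $\mu = 2$ and truncates the integral at an upper limit where the integrand is negligible. The function names are chosen for illustration only.

```python
import math


def geometric_pmf(k, lam, mu):
    """Closed form from the derivation: (lam/(lam+mu)) * (mu/(lam+mu))**k."""
    return (lam / (lam + mu)) * (mu / (lam + mu)) ** k


def pmf_by_integration(k, lam, mu, upper=40.0, steps=100_000):
    """Trapezoid-rule value of the integral of P{N=k | Z=t} f_Z(t) over [0, upper]."""
    def integrand(t):
        return math.exp(-mu * t) * (mu * t) ** k / math.factorial(k) * lam * math.exp(-lam * t)

    h = upper / steps
    total = 0.5 * (integrand(0.0) + integrand(upper))
    for i in range(1, steps):
        total += integrand(i * h)
    return total * h


lam, mu = 1.0, 2.0  # illustrative arrival and departure rates
errors = [abs(pmf_by_integration(k, lam, mu) - geometric_pmf(k, lam, mu)) for k in range(6)]
print("max error over k = 0..5:", max(errors))
```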

- 26. Suppose a cereal manufacturer inserts a sample of one type of coupon randomly into each cereal box, and suppose there are $n$ distinct types of coupons. One interesting question is: how many boxes of cereal should one buy on the average in order to collect at least one coupon of each kind?

- 27. Stopping Times: Let $X_1(t), X_2(t), \ldots, X_n(t)$ represent $n$ independent identically distributed Poisson processes with common parameter $\lambda t$. Let $t_{i1}, t_{i2}, \ldots$ represent the first, second, ... random arrival instants of the process $X_i(t)$, $i = 1, 2, \ldots, n$. They correspond to the first, second, ... appearance of the $i$th type of coupon in the above problem.

- 28. Let
  $$X(t) = \sum_{i=1}^{n} X_i(t),$$
  so that the sum $X(t)$ is also a Poisson process with parameter $\mu t$, where $\mu = n\lambda$.

- 29. $1/\lambda$ represents the average inter-arrival duration between any two arrivals of $X_i(t)$, $i = 1, 2, \ldots, n$, whereas $1/\mu$ represents the average inter-arrival time for the combined sum process $X(t)$.

- 30. Define the stopping time $T$ to be that random time instant by which at least one arrival of each of $X_1(t), X_2(t), \ldots, X_n(t)$ has occurred:
  $$T = \max(t_{11}, t_{21}, \ldots, t_{i1}, \ldots, t_{n1}).$$

- 31. The first arrival times $t_{i1}$, $i = 1, 2, \ldots, n$, are independent exponential random variables with common parameter $\lambda$. This gives
  $$F_T(t) = P\{T \le t\} = P\{\max(t_{11}, t_{21}, \ldots, t_{n1}) \le t\} = P\{t_{11} \le t, t_{21} \le t, \ldots, t_{n1} \le t\} = P\{t_{11} \le t\}P\{t_{21} \le t\}\cdots P\{t_{n1} \le t\} = [F_{t_{i1}}(t)]^n,$$
  so that
  $$F_T(t) = (1 - e^{-\lambda t})^n, \quad t \ge 0,$$
  represents the probability distribution function of the stopping time random variable $T$.

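- 32. Hence
  $$P(T > t) = 1 - F_T(t) = 1 - (1 - e^{-\lambda t})^n, \quad t \ge 0.$$
  To compute its mean, use
  $$E\{T\} = \int_0^\infty P(T > t)\,dt = \int_0^\infty \{1 - (1 - e^{-\lambda t})^n\}\,dt$$
  and let $1 - e^{-\lambda t} = x$, so that $\lambda e^{-\lambda t}\,dt = dx$ and $dt = \dfrac{dx}{\lambda(1 - x)}$.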

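- 33. With the substitution $x = 1 - e^{-\lambda t}$,
  $$E\{T\} = \frac{1}{\lambda}\int_0^1 \frac{1 - x^n}{1 - x}\,dx = \frac{1}{\lambda}\int_0^1 (1 + x + x^2 + \cdots + x^{n-1})\,dx = \frac{1}{\lambda}\sum_{k=1}^{n} \frac{x^k}{k}\Bigg|_0^1,$$
  $$E\{T\} = \frac{1}{\lambda}\left(1 + \frac{1}{2} + \frac{1}{3} + \cdots + \frac{1}{n}\right) \simeq \frac{1}{\mu}\, n(\ln n + \gamma),$$
  where $\gamma \simeq 0.5772157\ldots$ is Euler's constant and $\mu = n\lambda$.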
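
The closed form for $E\{T\}$ can be compared with a direct numerical evaluation of $\int_0^\infty P(T > t)\,dt$. The sketch below assumes $n = 10$ coupon types and a normalized total rate $\mu = 1$ (so $\lambda = 1/n$), and truncates the integral where the tail is negligible. It also shows how close the approximation $n(\ln n + \gamma)/\mu$ is for this $n$.

```python
import math

EULER_GAMMA = 0.5772156649  # Euler's constant, rounded


def harmonic(n):
    """H_n = 1 + 1/2 + ... + 1/n."""
    return sum(1.0 / k for k in range(1, n + 1))


def mean_stopping_time_numeric(n, lam, upper_factor=60.0, steps=200_000):
    """Trapezoid-rule value of the integral of 1 - (1 - exp(-lam t))^n over [0, upper_factor/lam]."""
    upper = upper_factor / lam
    h = upper / steps

    def survival(t):
        return 1.0 - (1.0 - math.exp(-lam * t)) ** n

    total = 0.5 * (survival(0.0) + survival(upper))
    for i in range(1, steps):
        total += survival(i * h)
    return total * h


n = 10
mu = 1.0          # normalized rate of the combined process (assumed)
lam = mu / n      # rate of each individual coupon process
exact = harmonic(n) / lam
numeric = mean_stopping_time_numeric(n, lam)
approx = n * (math.log(n) + EULER_GAMMA) / mu
print(f"H_n / lam = {exact:.4f}, numeric integral = {numeric:.4f}, n(ln n + gamma)/mu = {approx:.4f}")
```

For $n = 10$ the logarithmic approximation is low by roughly $0.5$, which matches the next term $1/(2n)$ in the expansion of the harmonic number.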

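- 38. Let $N$ denote the total number of arrivals of the combined process $X(t)$ up to the stopping time $T$, and let $Y_i$ denote the inter-arrival times of $X(t)$. Then we obtain the key relation
  $$T = \sum_{i=1}^{N} Y_i,$$
  $$E\{T \mid N = n\} = E\Big\{\sum_{i=1}^{n} Y_i \,\Big|\, N = n\Big\} = E\Big\{\sum_{i=1}^{n} Y_i\Big\} = nE\{Y_i\},$$
  since $\{Y_i\}$ and $N$ are independent random variables, and hence
  $$E\{T\} = E[E\{T \mid N = n\}] = E\{N\}E\{Y_i\}.$$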

- 39. Because $Y_i \sim \exp(\mu)$, we have $E\{Y_i\} = 1/\mu$, so $E\{N\} = \mu E\{T\} \simeq n(\ln n + \gamma)$. Thus on the average a customer should buy about $n \ln n$ boxes, or slightly more, to collect at least one coupon of each type.
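
The box count can also be simulated directly in discrete terms. This sketch assumes $n = 10$ equally likely coupon types, a fixed seed and a hypothetical helper name. It compares the sample average number of boxes with the exact mean $n(1 + 1/2 + \cdots + 1/n)$.

```python
import random


def boxes_needed(n, rng):
    """Buy boxes until all n coupon types have appeared; return the number bought."""
    seen = set()
    boxes = 0
    while len(seen) < n:
        seen.add(rng.randrange(n))  # each box holds one uniformly chosen coupon type
        boxes += 1
    return boxes


rng = random.Random(1)  # fixed seed so the run is repeatable
n, trials = 10, 20_000
average_boxes = sum(boxes_needed(n, rng) for _ in range(trials)) / trials
expected_boxes = n * sum(1.0 / k for k in range(1, n + 1))
print(f"simulated mean = {average_boxes:.3f}   n * H_n = {expected_boxes:.3f}")
```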

- 40. Next, consider a slight generalization of the above problem: what if two kinds of coupons (each of $n$ types) are mixed up, and the objective is to collect one complete set of coupons of either kind?

- 41. Let $X_i(t)$ and $Y_i(t)$, $i = 1, 2, \ldots, n$, represent the two kinds of coupons (independent Poisson processes) that have been mixed up to form a single Poisson process $Z(t)$ with normalized parameter unity:
  $$Z(t) = \sum_{i=1}^{n} [X_i(t) + Y_i(t)] \sim P(t).$$

- 42. As before, let $t_{i1}, t_{i2}, \ldots$ represent the first, second, ... arrivals of the process $X_i(t)$, and $\tau_{i1}, \tau_{i2}, \ldots$ represent the first, second, ... arrivals of the process $Y_i(t)$, $i = 1, 2, \ldots, n$. The stopping time $T_1$ in this case represents that random instant at which either all $X$-type or all $Y$-type coupons have occurred at least once.

- 43. Thus
  $$T_1 = \min\{X, Y\}, \qquad X = \max(t_{11}, t_{21}, \ldots, t_{n1}), \qquad Y = \max(\tau_{11}, \tau_{21}, \ldots, \tau_{n1}).$$
  The random variables $X$ and $Y$ have the same distribution as before, with $\lambda$ replaced by $1/2n$ (since $\mu = 1$ and there are $2n$ independent and identical processes), and hence
  $$F_X(t) = F_Y(t) = (1 - e^{-t/2n})^n, \quad t \ge 0.$$

- 44. Since $X$ and $Y$ are independent, we obtain
  $$F_{T_1}(t) = P(T_1 \le t) = P(\min\{X, Y\} \le t) = 1 - P(\min\{X, Y\} > t) = 1 - P(X > t, Y > t) = 1 - P(X > t)P(Y > t)$$
  $$= 1 - (1 - F_X(t))(1 - F_Y(t)) = 1 - \{1 - (1 - e^{-t/2n})^n\}^2, \quad t \ge 0,$$
  to be the probability distribution function of the new stopping time $T_1$.

- 45. Its mean is
  $$E\{T_1\} = \int_0^\infty P(T_1 > t)\,dt = \int_0^\infty \{1 - F_{T_1}(t)\}\,dt = \int_0^\infty \{1 - (1 - e^{-t/2n})^n\}^2\,dt.$$
  Let $1 - e^{-t/2n} = x$, or $\dfrac{1}{2n}e^{-t/2n}\,dt = dx$, so that $dt = \dfrac{2n\,dx}{1 - x}$.

- 46. Then
  $$E\{T_1\} = 2n\int_0^1 \frac{(1 - x^n)^2}{1 - x}\,dx = 2n\int_0^1 \frac{1 - x^n}{1 - x}(1 - x^n)\,dx = 2n\int_0^1 (1 + x + x^2 + \cdots + x^{n-1})(1 - x^n)\,dx$$
  $$= 2n\int_0^1 \Big(\sum_{k=0}^{n-1} x^k - \sum_{k=0}^{n-1} x^{n+k}\Big)dx = 2n\left[\left(1 + \frac{1}{2} + \frac{1}{3} + \cdots + \frac{1}{n}\right) - \left(\frac{1}{n+1} + \frac{1}{n+2} + \cdots + \frac{1}{2n}\right)\right] \simeq 2n(\ln(n/2) + \gamma).$$

- 47. As before, the total number of random arrivals $N$ up to $T_1$ is related to $T_1$ through $T_1 = \sum_{i=1}^{N} Y_i$, where $Y_i \sim \text{Exponential}(1)$, so that
  $$E\{N\} = E\{T_1\} \simeq 2n(\ln(n/2) + \gamma).$$
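
The quality of the logarithmic approximation for $E\{T_1\}$ can be examined by evaluating the exact harmonic-sum expression. This sketch (function names are illustrative) writes the bracket as $2H_n - H_{2n}$, where $H_n$ is the $n$th harmonic number, and compares it with $2n(\ln(n/2) + \gamma)$.

```python
import math

EULER_GAMMA = 0.5772156649  # Euler's constant, rounded


def harmonic(n):
    """H_n = 1 + 1/2 + ... + 1/n."""
    return sum(1.0 / k for k in range(1, n + 1))


def exact_mean_T1(n):
    """2n * [(1 + ... + 1/n) - (1/(n+1) + ... + 1/(2n))] = 2n * (2 H_n - H_2n)."""
    return 2 * n * (2 * harmonic(n) - harmonic(2 * n))


def approx_mean_T1(n):
    """Large-n approximation 2n * (ln(n/2) + gamma)."""
    return 2 * n * (math.log(n / 2) + EULER_GAMMA)


for n in (10, 100, 1000):
    exact, approx = exact_mean_T1(n), approx_mean_T1(n)
    print(f"n = {n:4d}  exact = {exact:10.3f}  approx = {approx:10.3f}  diff = {exact - approx:.3f}")
```

The absolute difference settles near $1.5$ as $n$ grows while the relative error vanishes, so the approximation is asymptotic rather than exact.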

- 48. We can generalize the stopping times in yet another way. Poisson Quotas: Let
  $$X(t) = \sum_{i=1}^{n} X_i(t) \sim P(\mu t).$$

- 49. The stopping time $T$ in this case is that random time instant at which any $r$ processes have met their quota requirement, where $r \le n$ is given. The problem is to determine the probability density function of the stopping time random variable $T$, and to determine the mean and variance of the total number of random arrivals $N$ up to the stopping time $T$.

- 50. Solution: Let $t_{i1}, t_{i2}, \ldots$ represent the first, second, ... arrivals of the $i$th process $X_i(t)$, and define $Y_{ij} = t_{ij} - t_{i,j-1}$. The inter-arrival times $Y_{ij}$ are independent exponential random variables with parameter $\lambda_i$, and hence
  $$t_{i,m_i} = \sum_{j=1}^{m_i} Y_{ij} \sim \text{Gamma}(m_i, \lambda_i).$$

- 51. Here the $X_i(t)$ are independent Poisson processes with parameters $\lambda_i t$ (identically distributed when the $\lambda_i$ are equal), $\mu = \lambda_1 + \cdots + \lambda_n$, and the integers $m_1, m_2, \ldots, m_n$ represent the preassigned number of arrivals (quotas) required for processes $X_1(t), X_2(t), \ldots, X_n(t)$, in the sense that when $m_i$ arrivals of the process $X_i(t)$ have occurred, the process $X_i(t)$ satisfies its quota requirement.

- 52. Define $T_i$ to be the stopping time for the $i$th process; i.e., the occurrence of the $m_i$th arrival equals $T_i$. Thus
  $$T_i = t_{i,m_i} \sim \text{Gamma}(m_i, \lambda_i), \quad i = 1, 2, \ldots, n, \qquad f_{T_i}(t) = \frac{t^{m_i - 1}}{(m_i - 1)!}\lambda_i^{m_i} e^{-\lambda_i t}, \quad t \ge 0.$$

- 53. Since the $n$ processes are independent, the associated stopping times $T_i$, $i = 1, 2, \ldots, n$, are also independent random variables, a key observation. Given independent gamma random variables $T_1, T_2, \ldots, T_n$, we form their order statistics. This gives
  $$T_{(1)} < T_{(2)} < \cdots < T_{(r)} < \cdots < T_{(n)}.$$
  The desired stopping time $T$ when $r$ processes have satisfied their quota requirement is given by the $r$th order statistic: $T = T_{(r)}$.

- 54. When the $T_i$ are identically distributed (common $m_i$ and $\lambda_i$), the density function of the stopping time random variable $T$, at which $r$ types of arrival quota requirements have been satisfied, is
  $$f_T(t) = \frac{n!}{(r-1)!\,(n-r)!}\, F_{T_i}^{\,r-1}(t)\,[1 - F_{T_i}(t)]^{n-r}\, f_{T_i}(t).$$
  Integrating $f_{T_i}(t)$ by parts, we have
  $$F_{T_i}(t) = 1 - \sum_{k=0}^{m_i - 1} \frac{(\lambda_i t)^k}{k!} e^{-\lambda_i t}, \quad t > 0.$$
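
The order-statistic density can be sanity-checked in the identically distributed case. The sketch below assumes common quota $m$ and rate $\lambda$ for all $n$ processes, with illustrative values and function names. It integrates $f_T$ numerically and compares the result with the standard order-statistic distribution function $P\{T_{(r)} \le t\} = \sum_{j=r}^{n} \binom{n}{j} F^j (1 - F)^{n-j}$, which is a textbook identity rather than something stated in these notes.

```python
import math


def gamma_cdf(m, lam, t):
    """F_{T_i}(t) = 1 - exp(-lam t) * sum_{k<m} (lam t)^k / k!."""
    return 1.0 - math.exp(-lam * t) * sum((lam * t) ** k / math.factorial(k) for k in range(m))


def gamma_pdf(m, lam, t):
    """f_{T_i}(t) = t^(m-1) / (m-1)! * lam^m * exp(-lam t)."""
    return lam**m * t ** (m - 1) / math.factorial(m - 1) * math.exp(-lam * t)


def stopping_pdf(t, n, r, m, lam):
    """Density of the r-th order statistic of n i.i.d. Gamma(m, lam) stopping times."""
    F, f = gamma_cdf(m, lam, t), gamma_pdf(m, lam, t)
    coeff = math.factorial(n) / (math.factorial(r - 1) * math.factorial(n - r))
    return coeff * F ** (r - 1) * (1.0 - F) ** (n - r) * f


def stopping_cdf(t, n, r, m, lam):
    """P{at least r of the n stopping times are <= t}."""
    F = gamma_cdf(m, lam, t)
    return sum(math.comb(n, j) * F**j * (1.0 - F) ** (n - j) for j in range(r, n + 1))


def trapezoid(f, a, b, steps=20_000):
    """Composite trapezoid rule on [a, b]."""
    h = (b - a) / steps
    total = 0.5 * (f(a) + f(b))
    for i in range(1, steps):
        total += f(a + i * h)
    return total * h


n, r, m, lam, t0 = 5, 2, 3, 1.0, 2.5  # illustrative values only
area = trapezoid(lambda t: stopping_pdf(t, n, r, m, lam), 0.0, t0)
cdf_value = stopping_cdf(t0, n, r, m, lam)
print(f"integral of f_T on [0, {t0}] = {area:.8f}   order-statistic CDF = {cdf_value:.8f}")
```

With unequal quotas or rates the $T_i$ are no longer identically distributed and this single-$F$ formula does not apply as written.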

- 55. If $N$ represents the total number of all random arrivals up to $T$, then
  $$T = \sum_{i=1}^{N} Y_i,$$
  where $Y_i$ are the inter-arrival intervals for the process $X(t)$, and hence
  $$E\{T\} = E\{N\}E\{Y_i\} = \frac{1}{\mu}E\{N\}.$$
  With normalized mean value $\mu = 1$ for the sum process $X(t)$, we get $E\{N\} = E\{T\}$.

- 56. To relate the higher order moments of $N$ and $T$ we can use their characteristic functions:
  $$E\{e^{j\omega T}\} = E\Big\{e^{j\omega\sum_{i=1}^{N} Y_i}\Big\} = E\Big\{\underbrace{E\Big[e^{j\omega\sum_{i=1}^{n} Y_i}\,\Big|\,N = n\Big]}_{[E\{e^{j\omega Y_i}\}]^n}\Big\} = E\big[\{E\{e^{j\omega Y_i} \mid N = n\}\}^n\big].$$
  But $Y_i \sim \text{Exponential}(1)$ and independent of $N$, so that
  $$E\{e^{j\omega Y_i} \mid N = n\} = E\{e^{j\omega Y_i}\} = \frac{1}{1 - j\omega}.$$

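- 57. Therefore
  $$E\{e^{j\omega T}\} = \sum_{n=0}^{\infty} [E\{e^{j\omega Y_i}\}]^n P(N = n) = E\Big\{\Big(\frac{1}{1 - j\omega}\Big)^{N}\Big\} = E\{(1 - j\omega)^{-N}\}.$$
  Expanding both sides in powers of $j\omega$,
  $$\sum_{k=0}^{\infty} \frac{(j\omega)^k}{k!}E\{T^k\} = \sum_{k=0}^{\infty} \frac{(j\omega)^k}{k!}E\{N(N+1)\cdots(N+k-1)\},$$
  so that $E\{T^k\} = E\{N(N+1)\cdots(N+k-1)\}$. In particular, $k = 1$ and $k = 2$ give $E\{T\} = E\{N\}$ and $E\{T^2\} = E\{N^2\} + E\{N\}$, hence
  $$\text{var}\{N\} = \text{var}\{T\} - E\{T\}.$$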
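
The variance relation can be checked on the single-set coupon problem with normalized rate $\mu = 1$. The sketch below relies on two standard facts that are assumptions relative to these notes: $N$ is a sum of independent geometric stages (success probability $j/n$ when $j$ types are still missing), and the maximum of $n$ independent Exponential$(1/n)$ variables has variance $n^2\sum_{j=1}^{n} 1/j^2$.

```python
def coupon_moments(n):
    """Means and variances of N (boxes) and T (stopping time, mu = 1) for n coupon types."""
    # N: independent geometric stages; with j types still missing, success probability is j/n.
    mean_N = sum(n / j for j in range(1, n + 1))
    var_N = sum((1 - j / n) / (j / n) ** 2 for j in range(1, n + 1))
    # T: maximum of n independent Exponential(1/n) first-arrival times.
    mean_T = n * sum(1 / j for j in range(1, n + 1))
    var_T = n**2 * sum(1 / j**2 for j in range(1, n + 1))
    return mean_N, var_N, mean_T, var_T


mean_N, var_N, mean_T, var_T = coupon_moments(10)
print(f"E[N] = {mean_N:.4f}, E[T] = {mean_T:.4f}")
print(f"var[N] = {var_N:.4f}, var[T] - E[T] = {var_T - mean_T:.4f}")
```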

- 58. In probability theory, a (discrete-time) martingale is a discrete-time stochastic process (i.e., a sequence of random variables) $X_1, X_2, X_3, \ldots$ that satisfies the identity
  $$E(X_{n+1} \mid X_1, \ldots, X_n) = X_n,$$
  i.e., the conditional expected value of the next observation, given all of the past observations, is equal to the last observation. As is frequent in probability theory, the term was adopted from the language of gambling.

- 59. Somewhat more generally, a sequence $Y_1, Y_2, Y_3, \ldots$ is said to be a martingale with respect to another sequence $X_1, X_2, X_3, \ldots$ if
  $$E(Y_{n+1} \mid X_1, \ldots, X_n) = Y_n, \quad \forall n.$$
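
A concrete instance of the definition is the symmetric random walk, where $Y_n$ is the sum of the first $n$ fair $\pm 1$ steps $X_1, \ldots, X_n$. This example is not taken from the notes. The sketch below enumerates all step sequences exactly, groups them by their first $n$ steps, and compares the conditional mean of $Y_{n+1}$ with $Y_n$.

```python
from collections import defaultdict
from itertools import product


def conditional_means(n):
    """Map each history (x_1..x_n) to the pair (Y_n, E[Y_{n+1} | history]) for a fair +/-1 walk."""
    next_positions = defaultdict(list)
    for steps in product((-1, 1), repeat=n + 1):
        history = steps[:n]
        next_positions[history].append(sum(steps))  # Y_{n+1} along this path
    # All continuations of a history are equally likely, so a plain average is the conditional mean.
    return {h: (sum(h), sum(v) / len(v)) for h, v in next_positions.items()}


table = conditional_means(5)
is_martingale = all(y_n == cond_mean for y_n, cond_mean in table.values())
print("martingale identity holds for every history:", is_martingale)
```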

- 60. A continuous-time martingale is a zero-drift stochastic process. That is, a random variable $\theta$ follows a continuous-time martingale iff $d\theta = \sigma\,dz$, where $dz$ is a Wiener process and the variable $\sigma$ is a constant or a stochastic process that may depend on $\theta$ or other stochastic variables.

- 61. Originally, martingale referred to a class of betting strategies popular in 18th century France. The simplest of these strategies was designed for a game in which the gambler wins his stake if a coin comes up heads and loses it if the coin comes up tails. The strategy had the gambler double his bet after every loss, so that the first win would recover all previous losses plus win a profit equal to the original stake.

- 62. Since the probability of eventually flipping heads approaches 1 as a gambler's wealth and available time jointly approach infinity, the martingale betting strategy was seen as a sure thing by those who practiced it. Of course, in reality the exponential growth of the bets would quickly bankrupt those foolish enough to use the martingale after even a moderately long run of bad luck.

- 63. The concept of martingale in probability theory was introduced by Paul Pierre Lévy, and much of the original development of the theory was done by Joseph Leo Doob. Part of the motivation for that work was to show the impossibility of successful betting strategies.

- 64. Extinction Probability for Queues: A customer arrives at an empty server and immediately goes for service, initiating a busy period. During that service period, other customers may arrive, and if so they wait for service. The server continues to be busy until the last waiting customer completes service, which indicates the end of a busy period.

- 65. An interesting question is whether busy periods are bound to terminate at some point. Do busy periods continue forever, or do such queues come to an end sooner or later? If so, how can this be shown?

- 66. Slow Traffic ($\rho \le 1$): Steady state solutions exist and the probability of extinction equals 1. (Busy periods are bound to terminate with probability 1.)

- 67. Heavy Traffic ($\rho > 1$): Steady state solutions do not exist, and such queues can be characterized by their probability of extinction. Steady state solutions exist if the traffic rate $\rho < 1$. Thus
  $$p_k = \lim_{n \to \infty} P\{X(nT) = k\}$$
  exists if $\rho < 1$. What if too many customers rush in, and/or the service rate is slow ($\rho > 1$)? How to characterize such queues?