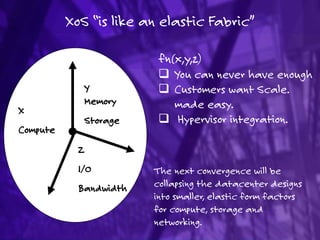

12.) fabric (your next data center)

- 1. XoS “is like an elastic Fabric”. Axes: X = Compute, Y = Memory/Storage, Z = I/O Bandwidth; fn(x, y, z). You can never have enough. Customers want scale made easy. Hypervisor integration. The next convergence will collapse data center designs into smaller, elastic form factors for compute, storage, and networking.

- 2. Open Source Software - The Beginning
  - “Hello everybody out there using minix - I’m doing a (free) operating system (just a hobby, won’t be big and professional like gnu) for 386(486) AT clones.” (Linus Torvalds, 1991)
  - “Free Unix! Starting this Thanksgiving I am going to write a complete Unix-compatible software system called GNU (for Gnu's Not Unix), and give it away free(1) to everyone who can use it. Contributions of time, money, programs and equipment are greatly needed.” (Richard Stallman, 1983)

- 3. Software Timeline: Closed (1970s-80s), Hybrid (1980s-90s), Open (1990s-Present).

- 4. Evolution of the Data Center: 1970s Mainframe (proprietary), 1990s Scale Up (proprietary), 2000s Scale Out (proprietary), 2011++ Open Source.

- 5. Evolution of the Cloud. Timeline labels: 1998, 2003, 2006, 2008, 2009; and 2008, 2009, 2010. Categories: Virtualization, DevOps, IaaS / Cloud. Licensing labels: Proprietary, Open Source, Proprietary; Open Core, Open Source.

- 6. Open Compute. 5 main verticals, all open source: Virt IO, Hardware Management, Data Center Design, Open Rack, Storage. What is it? Who is it? Why does it exist? To democratize hardware and eliminate gratuitous differentiation, allowing for standardization across tier 1s and ODMs.

- 7. Is your network faster today than it was 3 years ago?
  - Clos networks: a multi-stage circuit-switching network proposed by Charles Clos in 1953 for telephone switching systems. It allows forming a large switch from smaller switches.
  - Folded: merge input and output into one switch.
  - Fat-tree (blocking characteristics):
    - 1.1 Strict-sense non-blocking Clos networks (m ≥ 2n−1): the original 1953 Clos result.
    - 1.2 Rearrangeably non-blocking Clos networks (m ≥ n).
    - 1.3 Blocking probabilities: the Lee and Jacobaeus approximations.
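
The two non-blocking conditions on this slide can be checked with a few lines of Python. This is a minimal sketch, assuming a symmetric three-stage Clos network where `n` is the number of inputs per ingress switch and `m` is the number of middle-stage switches; the function name `clos_blocking_class` is made up for illustration.

```python
def clos_blocking_class(n: int, m: int) -> str:
    """Classify a symmetric three-stage Clos network.

    n: inputs per ingress-stage switch
    m: number of middle-stage switches
    """
    if n < 1 or m < 1:
        raise ValueError("n and m must be positive")
    if m >= 2 * n - 1:
        # Clos's 1953 condition: a free path always exists.
        return "strict-sense non-blocking"
    if m >= n:
        # A path exists, but existing connections may need re-routing.
        return "rearrangeably non-blocking"
    return "blocking"


if __name__ == "__main__":
    for n, m in [(4, 7), (4, 4), (4, 3)]:
        print(f"n={n}, m={m}: {clos_blocking_class(n, m)}")
```

In leaf/spine terms, `m` plays the role of the spine count and `n` the number of host-facing ports per leaf, which is why adding spines moves a design toward the non-blocking end.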

- 8. Workloads: Big Data, IP Storage, VM Farms, Cloud, Web 2.0, Legacy, VDI. It should be… 2-3 generations of silicon; 1G -> 100G speed transition; lowest latency with 2-tier leaf/spine.

- 9. Data Center challenges & needs
  - Containing the failure domain
  - No downtime, planned or unplanned
  - High bandwidth
  - Automate provisioning, change control, and upgrades
  - Supports all use cases and applications: client-server; modern distributed apps, Big Data, storage, virtualization
  - Any hardware with any switch silicon
  - Disaggregated switch (diagram labels): Any OS, Driver, Routing, Analytics, Virtual, Policy, Platform, Config

- 10. Data Center challenges & needs
  - Multi-tenancy with integrated security
  - Low and predictable latency
  - Mix and match multiple generations of technologies
  - Diagram labels: Fabric Modules (Spine), I/O Modules (Leaf); Spine, Leaf, 3 µsec latency
  - Network disaggregation: common goals

- 11. Chassis vs. Spline
  - Chassis: Fabric Modules (Spine), I/O Modules (Leaf)
    - Cannot access line cards.
    - No L2/L3 recovery inside.
    - No access to fabric.
  - Spline (Spine, Leaf)
    - Fabric control
    - Top-of-rack switches
    - Advanced L2/L3 protocols inside the Spline
    - Full access to spine switches

- 12. Fully Non-blocking Architecture. Three key components to fabric design…
  - Spine: 10 Gb spine switches (10Gb - 40Gb - 100Gb spine), very low oversubscription
  - Leaf: 1/10 Gb non-blocking (wire-speed) leaf switches; inter-rack latency: 2 to 5 microseconds
  - Compute and storage: high-performance hosts (VM farms, IP storage, video streaming, HPC, low-latency trading)
  - Latency: 3 µsec (chassis) vs. 1.8 µsec
  - 80% of traffic is east-west

  | Network fabric oversubscription | Maximum 10GbE connections | Total Summit X770 units | 40GbE downlink ports at the edge | 40GbE uplink ports at the edge |
  | --- | --- | --- | --- | --- |
  | 1:1 | 256 | 6 | 16 | 16 |
  | ~2:1 | 512 | 8 | 21 | 11 |
  | 3:1 | 768 | 10 | 24 | 8 |
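
The oversubscription ratios on this slide follow from the edge port split: downlink ports divided by uplink ports. The sketch below assumes every port runs at the same speed (40GbE on both sides, as the slide's column headings suggest); the function and constant names are illustrative only.

```python
def oversubscription(downlinks: int, uplinks: int) -> float:
    """Return the downlink-to-uplink ratio for one edge (leaf) switch.

    Assumes all ports run at the same speed, so port counts can be
    compared directly.
    """
    if uplinks <= 0:
        raise ValueError("an edge switch needs at least one uplink")
    return downlinks / uplinks


# (downlinks, uplinks) per edge switch, taken from the slide.
EDGE_PORT_SPLITS = [(16, 16), (21, 11), (24, 8)]

if __name__ == "__main__":
    for down, up in EDGE_PORT_SPLITS:
        ratio = oversubscription(down, up)
        print(f"{down} down / {up} up -> {ratio:.2f}:1")
```

The 21/11 split works out to roughly 1.91:1, which the slide rounds to "~2:1".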

- 13. Facebook Switch Fabrics. The Facebook design advantages:
  - No dependency on the underlying physical network and protocols.
  - No addresses of virtual machines are present in Ethernet switches, resulting in smaller MAC tables and less complex STP layouts.
  - No limitations related to Virtual LAN (VLAN) technology, resulting in more than 16 million possible separate networks, compared to the VLAN limit of roughly 4,000 (4,094 usable IDs).
  - No dependency on IP multicast traffic.
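
The "16 million versus 4,000" comparison is a matter of identifier width. The sketch below assumes the 16 million figure refers to a 24-bit segment ID (as used by overlays such as VXLAN, which appears later in the deck); that mapping is an assumption, since the slide does not name the encapsulation. The 12-bit VLAN ID width and its two reserved values come from IEEE 802.1Q.

```python
VLAN_ID_BITS = 12      # IEEE 802.1Q VLAN ID field width
SEGMENT_ID_BITS = 24   # assumption: overlay segment ID width (e.g. VXLAN VNI)


def id_space(bits: int) -> int:
    """Number of distinct values an identifier of the given width can hold."""
    return 2 ** bits


# VLAN IDs 0 and 4095 are reserved, leaving 4094 usable VLANs.
usable_vlans = id_space(VLAN_ID_BITS) - 2
overlay_segments = id_space(SEGMENT_ID_BITS)

if __name__ == "__main__":
    print(f"usable VLANs:     {usable_vlans}")
    print(f"overlay segments: {overlay_segments}")
```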

- 14. Business Value / Strategic Asset. Ethernet Fabric: single software train; purpose-built OS for Broadcom ASICs; modularity = high availability. Network Operating System: design once, leverage everywhere. Why? Why? How?

- 15. Considerations
  - 80% north-south traffic
  - Oversubscription: up to 200:1
  - Inter-rack latency: 75 to 150 microseconds
  - Scale: up to 20 racks
  - Client request + server response = 20% of traffic
  - Lookup storage = 80% of traffic (inter-rack)
  - Non-blocking 2-tier designs are optimal
  - Diagram labels: Compute, Cache, Database, Storage, Client, Response

- 16. Simple Spine (Active-Active)
  - LAG / MLAG: no loop, just more bandwidth!
  - Simplifies or eliminates the Spanning Tree topology
  - Simple to understand and easy to engineer traffic
  - Scale using standard L2/L3 protocols (LACP, OSPF, BGP)
  - Total latency under 5 µs
  - Diagram labels: Summit (×3), Active / Active, LAG, L2/L3

- 17. Simple Fabric
  - LAG: no loop, just more bandwidth!
  - Active / Active
  - Topology Independent ISSU
  - Plug-and-play provisioning of spines and leaves
  - Diagram labels: Summit, LAG, L2/L3

- 18. Start Small; Scale as You Grow. Simply add Extreme leaf clusters… Each cluster is independent and includes servers, storage, database, and interconnects. Each cluster can be used for a different type of service. With a simple, repeatable design, capacity can be added as a commodity. Diagram labels: Spine, Leaf, Cluster (×3), Ingress, Egress, Scale.

- 19. Intel, Facebook, OCP, and Disaggregation. 4-Post Architecture at Facebook:
  - Each rack switch (RSW) has up to 48 10G downlinks and 4-8 10G uplinks (10:1 oversubscription) to the cluster switch (CSW).
  - Each CSW has 4 40G uplinks, one to each of the 4 FatCat (FC) aggregation switches (4:1 oversubscription).
  - 4 CSWs are connected in a 10G×8 protection ring.
  - 4 FCs are connected in a 10G×16 protection ring.
  - No routers at FC.
  - One CSW failure reduces intra-cluster capacity to 75%.
  - Dense 10GbE interconnect using breakout cables, copper or fiber.
  - “Wedge”, “6-pack”
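
The rack-switch figures on this slide can be sanity-checked with bandwidth arithmetic. This is a rough sketch, not the slide author's calculation: it assumes oversubscription is total downlink bandwidth divided by total uplink bandwidth, and that losing one of four equal cluster switches removes a quarter of the capacity. Function names are illustrative.

```python
def oversubscription_gbps(down_ports: int, down_gbps: int,
                          up_ports: int, up_gbps: int) -> float:
    """Ratio of total downlink bandwidth to total uplink bandwidth."""
    return (down_ports * down_gbps) / (up_ports * up_gbps)


def remaining_capacity(total_switches: int, failed: int) -> float:
    """Fraction of capacity left when equal-sized switches fail."""
    return (total_switches - failed) / total_switches


if __name__ == "__main__":
    # RSW: 48 x 10G down, 4 to 8 x 10G up.
    worst = oversubscription_gbps(48, 10, 4, 10)
    best = oversubscription_gbps(48, 10, 8, 10)
    print(f"RSW oversubscription range: {best:.0f}:1 to {worst:.0f}:1")
    # One of four CSWs down.
    print(f"capacity after one CSW failure: {remaining_capacity(4, 1):.0%}")
```

With full port counts the RSW range comes out at 6:1 to 12:1; the slide's 10:1 figure sits inside that range, though how it was derived is not stated.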

- 20. The Open Source Data Center. The current rack standard has no specification for depth or height. The only “standard” is the requirement that it be 19 inches wide. This standard evolved out of the railroad switching era. What is it? 5 projects, all completely open, to lower cost and increase data center efficiency. Why does it exist? To democratize hardware and eliminate gratuitous differentiation, allowing for standardization across tier 1s and ODMs.

- 21. One Rack Design
  - Rack contents: Summit top-of-rack switches, servers, storage, Summit management switch
  - Open Compute because it might allow companies to purchase "vanity free"
  - You also don't have stranded ports with a spline network.
  - Scale beyond traditional data center design; modular data center construction is the future.
  - Outdated design only supports low-density IT computing
  - Time-consuming maintenance
  - Long lead time to deploy additional data center capacity
  - Loosely coupled, nearly coupled, closely coupled
  - The monolithic data center is dead.
  - Shared combo ports: 4x10GBASE-T & 4xSFP+; 100Mb/1Gb/10GBASE-T

- 22. Two Rack Design
  - Reduce OPEX: leverage a repeatable solution spanning planning, configuration, installation, commissioning, full turnkey deployment, and maintenance.
  - Leverages best-in-class servers, storage, networking, and services, and is uniquely positioned to create efficient, high-performance modular data centers with the infrastructure to support IT.
  - Flexible solution spanning planning, configuration, installation, commissioning, full turnkey deployment, and maintenance.
  - Each rack: Summit top-of-rack switches, servers, storage, Summit management switch.

- 23. Eight Rack PoD Design
  - Diagram: Summit spine and leaf switches; each rack holds top-of-rack switches, servers, storage, and a Summit management switch.
  - Spine design:
    - Spine redundancy and capacity
    - Performance: low device latency and low oversubscription rates
    - I/O diversity (10/25/40/50/100G)
    - Ability to grow/scale as capacity is needed
    - Collapsing of fault/broadcast domains (due to Layer 3 topologies)
    - Deterministic failover and simpler troubleshooting
    - Lossless and lossy traffic over a single converged fabric
    - Readily available operational expertise as well as a variety of traffic engineering capabilities

- 24. Collapsed (1-tier) Spine
  - Diagram: Summit spine/leaf switches with storage and Summit management in each rack; 4 x 72 = 288 10G ports
  - NICs: 25/50GbE NICs allow 25/50GbE from server to switch (Mellanox, QLogic, Broadcom).
  - Connectivity: connectors (SFP28, QSFP28), optics, and cabling solutions are backwards compatible.
  - Commercial switching silicon: 25Gb signaling lanes make 25/50/100GbE devices possible.
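
The last point on this slide is lane arithmetic: a port's speed is the number of 25Gb lanes it bonds. A minimal sketch, assuming the common 1-, 2-, and 4-lane groupings (names are illustrative):

```python
LANE_GBPS = 25  # signaling rate per lane, from the slide


def ethernet_speed_gbps(lanes: int) -> int:
    """Aggregate port speed for a given number of 25Gb lanes."""
    if lanes < 1:
        raise ValueError("a port needs at least one lane")
    return lanes * LANE_GBPS


# Common lane groupings: 1 lane (SFP28), 2 lanes, 4 lanes (QSFP28).
SPEEDS = {lanes: ethernet_speed_gbps(lanes) for lanes in (1, 2, 4)}

if __name__ == "__main__":
    for lanes, speed in SPEEDS.items():
        print(f"{lanes} x {LANE_GBPS}Gb lane(s) -> {speed}GbE")
```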

- 25. VXLAN - Overlay and underlay
  - VXLAN tunneling between sites
  - Remove network entropy; L2 connectivity over L3
  - Diagram: L3 core, with VLANs XX-YY behind VXLAN gateways
  - L2 connections within an IP overlay, unicast & multicast
  - Allows a flat DC design without boundaries
  - Simple and elastic network
  - Hypervisor / distributed virtual switch: the other end of VXLAN tunnels
  - IP overlay connections established between the VXLAN end-points of a tenant
  - Fully meshed unicast tunnels for known L2 unicast traffic
  - PIM-signaled multicast tunnels for L2 BUM traffic
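
"L2 connectivity over L3" means each Ethernet frame is wrapped in a VXLAN header and carried inside UDP/IP. The sketch below builds and parses only the 8-byte VXLAN header as laid out in RFC 7348 (flags byte with the VNI-valid bit, a 24-bit VNI, reserved bits zero); the outer UDP/IP headers are left out, and the helper names are made up for illustration.

```python
import struct

VXLAN_UDP_PORT = 4789   # IANA-assigned destination port (RFC 7348)
VNI_VALID_FLAG = 0x08   # "I" flag: the VNI field is valid


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) + 24 reserved bits.
    # Word 2: VNI (24 bits) + 8 reserved bits.
    return struct.pack("!II", VNI_VALID_FLAG << 24, vni << 8)


def encapsulate(vni: int, inner_frame: bytes) -> bytes:
    """Prefix an inner Ethernet frame with a VXLAN header."""
    return vxlan_header(vni) + inner_frame


def parse_vni(packet: bytes) -> int:
    """Extract the VNI from a VXLAN-encapsulated payload."""
    flags_word, vni_word = struct.unpack("!II", packet[:8])
    if not (flags_word >> 24) & VNI_VALID_FLAG:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8


if __name__ == "__main__":
    payload = encapsulate(5000, b"inner-ethernet-frame")
    print(payload[:8].hex(), parse_vni(payload))
```

A gateway or virtual switch at either end of the tunnel would do the equivalent of `encapsulate` on the way in and `parse_vni` on the way out, using the VNI to pick the tenant segment.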

- 26. Fabric vs. Cisco legacy architecture. A fundamental change in data flows: from a client-server architecture to a service-oriented architecture.
  - Legacy (client-server architecture): rack and blade servers, multitier network design, FC SAN storage; high latency, complex, high CapEx / OpEx, constrains virtualization, inefficient. Diagram labels: E, W, S, 80%.
  - Fabric: elastic spine-leaf with MLAG; simpler, flatter, any-to-any network. Diagram labels: E, W, 80%.

- 27. Converged Network: UCS. A single system that encompasses:
  - Network: unified fabric
  - Compute: industry-standard x86
  - Storage: access options
  - Virtualization optimized
  - Uplinks

- 28. Petabyte Scale Data - Data Flow Architecture at Facebook. Components: Web Servers, Scribe MidTier, Filers, Production Hive-Hadoop Cluster, Oracle RAC, Federated MySQL, Scribe-Hadoop Cluster, Adhoc Hive-Hadoop Cluster, Hive replication.