Notes on usability testing

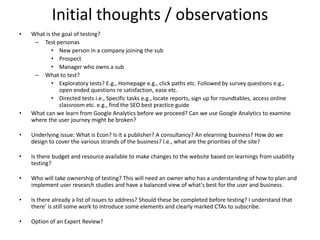

- 1. Initial thoughts / observations
  - What is the goal of testing?
    - Test personas
      - New person in a company joining the sub
      - Prospect
      - Manager who owns a sub
    - What to test?
      - Exploratory tests? E.g., homepage, click paths etc., followed by survey questions, e.g., open-ended questions re satisfaction, ease etc.
      - Directed tests, i.e., specific tasks, e.g., locate reports, sign up for roundtables, access the online classroom, find the SEO best practice guide
  - What can we learn from Google Analytics before we proceed? Can we use Google Analytics to examine where the user journey might be broken?
  - Underlying issue: What is Econ? Is it a publisher? A consultancy? An elearning business? How do we design to cover the various strands of the business? I.e., what are the priorities of the site?
  - Is there budget and resource available to make changes to the website based on learnings from usability testing?
  - Who will take ownership of testing? This will need an owner who has an understanding of how to plan and implement user research studies and a balanced view of what's best for the user and the business.
  - Is there already a list of issues to address? Should these be completed before testing? I understand that there is still some work to do to introduce some elements and clearly marked CTAs to subscribe.
  - Option of an Expert Review?

- 2. Usability testing 101 1. Test participants complete typical tasks while observers watch, listen and take notes 2. Goal: 1. Identify usability problems 2. Determine participants' satisfaction with the site

- 3. Usability test cycle Start the test Identify 6 – 8 users to test Observe test participants performing task Identify 2 – 3 easiest things to fix Make changes to the site

- 4. Steps for Usability Testing 1. Plan user tests 2. Conduct user tests 3. Analyse findings 4. Modify website 5. Retest

- 5. Test Methodology
  - Test objectives
  - Prepare a user profile
  - Identify 6 – 9 users
  - Design the test
  - Include relevant tasks
  - Prepare a script that lets the test participant know what to expect
  - Consider how tasks can be measured and evaluated (not just questionnaires)
  - Who will facilitate / observe?
  - Who will analyse?
  - Who will prepare the report and recommendations?
  - Decide what to fix

- 6. Test Methodology – 2 Approaches
  - Approach 1
    - Test objectives
    - User profile
    - 6 – 9 users
    - 2 day process
    - Test design
    - Task list
    - Test environment
    - Observer
    - Evaluation measures
    - Report with exhaustive list of recommendations
  - Approach 2
    - Test objective
    - Test 3 – 4 users at a time
    - Recruit loosely
    - Quick debrief
    - Decide what to fix before next round of testing
  - “Get it” testing is just what it sounds like: show them the site and see if they get it (do they understand the purpose of the site, the value proposition, how it’s organized, how it works, and so on).
  - Key task testing means asking the user to do something, then watching how well they do.

- 7. Testing Formats 1. Paper prototype 2. Competitors site 3. Live site i. Observation ii. Remote observation testing iii. Remote unmoderated testing

- 8. When to test • Test early and test often – Danger: Web development teams don’t like usability testing, so keep it simple for them. – Build it into operational activities – Once per month during development • Gives you what you need • Frees you from deciding when to test • Short bursts • Identify problems before they get hard coded

- 9. Business Case for User Experience Design

- 10. How many users to test • Depends on the objective of the test – Are you trying to prove something? – Are you trying to identify all problems?

- 11. How many users to test
  - Formative testing
    - During development
    - Iterative
    - Output:
      - Usability problems and suggested fixes
      - Highlight videos
  - Summative testing
    - Post development
    - Compare against competitors
    - Generate data to support marketing claims about usability
    - Output:
      - Statistical measures of usability
      - Reports or white papers

- 12. How many users to test Source: Don’t Make Me Think, Steve Krug

- 13. • 6 – 8 users is a valid sample • Little ROI in testing more than 9 users
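
One common way to reason about these diminishing returns is the problem-discovery model usually attributed to Nielsen and Landauer, in which the expected share of problems seen by n users is 1 - (1 - p)^n. The model is not stated in the slides; the per-user discovery rate `p = 0.31` below is the figure Nielsen commonly quotes and is an assumption here, since it varies by product and task. The function name is hypothetical.

```python
def share_of_problems_found(n_users: int, p: float = 0.31) -> float:
    """Expected share of usability problems seen at least once by n_users.

    p is the ASSUMED probability that a single user hits any given problem.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    # A problem is missed only if every one of the n users misses it.
    return 1.0 - (1.0 - p) ** n_users


for n in (1, 3, 5, 6, 9, 12):
    print(f"{n:>2} users: {share_of_problems_found(n):.0%} of problems (assuming p=0.31)")
```

Under this assumption the curve flattens quickly, which is consistent with the point that testing more than about nine users in one round adds little.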

- 14. At least 20 users for quantitative studies Source: Jakob Nielsen

- 15. Where to test • Usability Lab (Beware: Hawthorne Effect)

- 16. Where to test • Informal moderated testing: – Moderator / Note taker – Participant – One to one – Tasks • Remote moderated testing: – Moderator / note taker • Web conference tool for screen sharing • Screen recorder • Speakerphone – Participant • High speed Internet access • Speakerphone / headset Lab not required Source: Don’t Make Me Think, Steve Krug

- 17. What to test • Understand requirements – What do users want to accomplish? – What does the company want to accomplish? • Determine the goals – What is the objective of the website? • Decide on the area of focus – Critical tasks: Test the tasks that have the biggest impact on the site

- 18. Task types 1. First impression - What is your impression of this home page or application? 2. Exploratory task - Open ended 3. Directed task - Specific / results oriented

- 19. First Impression / Exploratory

- 20. This is BAD (Krug, 2005)

- 21. This is Good (Krug, 2005)

- 22. Specific / Results Oriented Tasks

- 23. MicksGarage.com

- 24. Website Usability Process Workflow

- 25. Metrics • Task completion rate • Errors • Efficiency: Number of steps / clicks required to complete the task • Self reported metrics – Likert scale – A or B etc.
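
The metrics listed above can be computed from simple per-task session records. The sketch below is illustrative only: the `TaskResult` record, its field names, and the sample data are hypothetical and not taken from any real test.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskResult:
    """One participant's attempt at one task (hypothetical record layout)."""
    participant: str
    completed: bool   # did the participant finish the task unaided?
    errors: int       # wrong turns or mis-clicks noted by the observer
    clicks: int       # steps/clicks taken, used as an efficiency measure
    ease_rating: int  # self-reported Likert rating, 1 (hard) to 5 (easy)


def summarise(results: list[TaskResult]) -> dict[str, float]:
    """Return completion rate, mean errors, mean clicks and mean ease rating."""
    if not results:
        raise ValueError("no results to summarise")
    completed = [r for r in results if r.completed]
    return {
        "completion_rate": len(completed) / len(results),
        "mean_errors": mean(r.errors for r in results),
        # Efficiency is only reported for attempts that reached the goal.
        "mean_clicks_when_completed": (
            mean(r.clicks for r in completed) if completed else float("nan")
        ),
        "mean_ease_rating": mean(r.ease_rating for r in results),
    }


# Made-up data for a single directed task
sample = [
    TaskResult("P1", True, 0, 4, 5),
    TaskResult("P2", True, 2, 9, 3),
    TaskResult("P3", False, 3, 12, 2),
    TaskResult("P4", True, 1, 5, 4),
]
summary = summarise(sample)
print(summary)
```

With only a handful of participants these figures are best read as indicators alongside the observation notes, not as statistically robust measures.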

- 26. Some testing guidelines • Run a pilot test • Put participants at ease • Do the test yourself • Let participants know that they can abandon tasks • Don’t prompt participants • Record tests in as much detail as possible • Be sensitive to the fact that developers / business owners may be upset by the findings

- 29. CrazyEgg.com

- 32. Testing Tools - Morae • Prepare usability test – Morae Recorder • Conduct usability test – Morae Recorder – Unmoderated tests – “Think Aloud” Protocol – Survey design – Survey response • Collect and analyse results – Morae Manager – Gather survey responses – Review recordings for issues – Make notes on observed issues – Identify issues that developers or even a user interface expert may have missed first hand

- 33. Wrap Up – Some Basic Principles • Design guidelines and usability test results inform how we should design • Usability is central to the business model • The usability process is intended to gain information about the user’s experience, not the experience of the development team / CEO / Marketing Manager etc. • You don’t need a usability lab to conduct usability tests

- 34. Don’t forget Google Analytics How do users browse your site? Use Flow Visualizations to track the path that a customer takes through your website. Find out time on site and which content your audience interacts with the most. Identify how users enter your site and how they leave. The page you designate as the homepage may not be the page where users actually enter. Identify drop-off points and reduce bounce rate. Get a clear idea of the screen resolutions of users' tablets, desktops and mobile devices, with the goal of optimising content for those dimensions.

- 35. Further reading • www.usability.gov • Morae tutorials: http://www.techsmith.com/tutorial-morae-current.html • Books: – Steve Krug, Rocket Surgery Made Easy – Steve Krug, Don’t Make Me Think – Eric Reiss, Usable Usability – Jakob Nielsen, Designing Web Usability: The Practice of Simplicity

- 36. A note on System Usability Scale

- 37. But… SUS does not diagnose usability problems: Users may encounter problems, even severe ones and still provide SUS scores that seem high Requirement to review recordings Build in extra questions to determine user satisfaction and thoughts

Editor's notes

- Testing only three or four users also makes it possible to test and debrief in the same day, so you can take advantage of what you’ve learned right away. Also, when you test more than four at a time, you usually end up with more notes than anyone has time to process—many of them about things that are really “nits,” which can actually make it harder to see the forest for the trees.

- The key is to start testing early (it’s really never too early) and test often, at each phase of Web development. Before you even begin designing your site, you should be testing comparable sites. They may be actual competitors, or they may be sites that are similar in style, organization, or features to what you have in mind. Use them yourself, then watch one or two other people use them and see what works and what doesn’t. Many people overlook this step, but it’s invaluable: it is like having someone build a working prototype for you for free. If you’ve never conducted a test before, testing comparable sites will give you a pressure-free chance to get the hang of it. It will also give you a chance to develop a thick skin. The first few times you test your own site, it’s hard not to take it personally when people don’t get it. Testing someone else’s site first will help you see how people react to sites and give you a chance to get used to it. Since the comparable sites are “live,” you can do two kinds of testing: “get it” testing and key task testing. “Get it” testing is just what it sounds like: show them the site and see if they get it (do they understand the purpose of the site, the value proposition, how it’s organized, how it works, and so on). Key task testing means asking the user to do something, then watching how well they do.

- User-experience studies help site owners to identify underperforming areas on their websites in order to make improvements to the user experience. This should have the effect of improving your business in various ways...

- It depends on the type of test: summative versus formative. The purpose of testing isn’t to prove anything. You don’t need to fix all problems. The first three users are very likely to encounter nearly all of the most significant problems, and it’s much more important to do more rounds of testing than to wring everything you can out of each round. Testing only three users helps ensure that you will do another round soon. Also, since you will have fixed the problems you uncovered in the first round, in the next round it’s likely that all three users will uncover a new set of problems, since they won’t be getting stuck on the first set of problems.

- In the beginning, though, usability testing was a very expensive proposition. You had to have a usability lab with an observation room behind a one-way mirror, and at least two video cameras so you could record the users’ reactions and the thing they were using. You had to recruit a lot of people so you could get results. The Hawthorne effect (observer effect) is a type of reactivity in which individuals modify an aspect of their behavior in response to their awareness of being observed. This can undermine the integrity of research, particularly the relationships between variables.

- What you want to do is sit back, zip your mouth shut, put your hands behind your back and watch someone unassisted as they go through your website. It's very hard and it is also extremely interesting. What is usability testing and how does it differ from other methods of research? It's a one or two day process with at least 4-8 participants per day. You want to take an hour per session, and you will already have gone through your site; remember, you can't test everything on your site. You are going to have some predetermined tasks that you lay out in advance, and you have a test facilitator, hopefully someone who has experience in moderating and in understanding how people use the site. They take notes, and sometimes the session is videotaped, but not always; sometimes you have other people observing in the room. It's really one-on-one watching and learning. So you are taking a look at how someone is using your website; you're not actually showing them. How many of you have designed a website, sat someone down in front of it and asked them what they thought? And they start to point around and you say, “No, no, no, don't go there! Oh! No, no, that's not active,” and you eventually take the mouse over and show them the cool stuff yourself. That is the antithesis of usability testing.

- • For all but the simplest and most informal tests, run a pilot test first. • Ensure participants are put at ease, and are fully informed of any taping or observation. Attend at least one test as a participant, to appreciate the stress that participants undergo. • Ensure that participants have the option to abandon any tasks which they are unable to complete. • Do not prompt participants unless it is clearly necessary to do so. • Record events in as much detail as possible, to the level of keystrokes and mouse clicks if necessary. • If there are observers, ensure that they do not interrupt in any way. Brief observers formally prior to the test. • Be sensitive to the fact that developers may be upset by what they observe or what you report.

- Crazy Egg is an online application that provides click-tracking visualisations such as Heatmap, Scrollmap, Overlay, and Confetti to show how visitors interact with a website. This helps you to understand your customers' interests so you can boost the profit from your website.

- SUS is the most widely used standard questionnaire for measuring the perception of usability. The SUS survey is a ‘quick and dirty’ benchmark of usability, created by John Brooke in 1986, and has become an industry standard with references in over 600 publications (Sauro, 2011). Brooke’s usability scale scores from zero to 100 but is not interpreted as a percentage score. Rather, the average benchmark score is 68 and anything below this is considered below average in usability terms. It has been used on software, websites, mobile phones, hardware etc. Some of the key benefits of SUS are:
  - It provides a measure of a user’s view of the usability of a system.
  - It is relatively easy to interpret.
  - It is easy to communicate (because of its 0-100 scale).
  - It allows developers to compare different beta websites to see which scores better.
  - It is reliable. SUS has been shown to be more reliable and to detect differences at smaller sample sizes than home-grown questionnaires and other commercially available ones. Sample size and reliability are unrelated, so SUS can be used on very small sample sizes (as few as two users) and still generate reliable results.
  - It correlates highly with other questionnaire-based measurements of usability (called concurrent validity).

  At only 10 items, SUS may be quick to administer and score, but data from over 5000 users and almost 500 different studies suggests that SUS is far from dirty. Its versatility, brevity and wide usage mean that despite inevitable changes in technology, we can probably count on SUS being around for at least another 25 years.

- SUS was not intended to diagnose usability problems. In its original use, SUS was administered after a usability test where all user-sessions were recorded on videotape (VHS and Betamax). Low SUS scores indicated to the researchers that they needed to review the tape and identify problems encountered with the interface.
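
For reference, a SUS score is conventionally calculated from the ten 1-to-5 responses as follows: odd-numbered (positively worded) items contribute the response minus 1, even-numbered (negatively worded) items contribute 5 minus the response, and the sum is multiplied by 2.5. The scoring rule is not spelled out in these notes; the sketch below follows the standard procedure, and the function name and sample responses are hypothetical.

```python
SUS_BENCHMARK = 68  # average benchmark score quoted in the notes above


def sus_score(responses: list[int]) -> float:
    """Score one participant's SUS questionnaire (ten responses, each 1-5)."""
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r not in range(1, 6) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # odd items: positively worded
        else:
            total += 5 - response   # even items: negatively worded
    return total * 2.5              # rescale the 0-40 sum to 0-100


# Made-up responses from one participant
example = [4, 2, 5, 1, 4, 2, 4, 2, 5, 1]
score = sus_score(example)
print(score, "above benchmark" if score > SUS_BENCHMARK else "at or below benchmark")
```

As the notes point out, a score like this signals whether perceived usability is above or below average but does not say what the problems are, so the recordings still need to be reviewed.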