Image Processing 1: Lectures

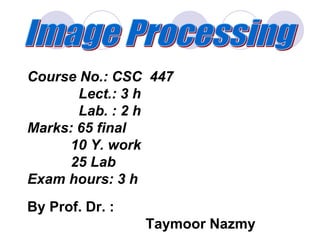

- 1. Course No.: CSC 447. Lectures: 3 h; lab: 2 h. Marks: 65 final exam, 10 year work, 25 lab. Exam duration: 3 h. Instructor: Prof. Dr. Taymoor Nazmy.

- 2. Image processing applications, picture modeling, enhancement in the spatial domain, enhancement in the frequency domain, restoration, segmentation, and scene analysis.

- 3. 1- Applications of image processing in remote sensing. 2- Image processing using neural networks (NN). 3- Techniques of image retrieval. 4- Registration of medical images. 5- Image synthesis.

- 4. 6- Image processing using PDEs. 7- Methods of texture analysis. 8- Reconstruction techniques. 9- Image processing using parallel algorithms. 10- Multiresolution processing. 11- Open. 12- Open.

- 5. Textbook: Digital Image Processing Using MATLAB, by Rafael C. Gonzalez (University of Tennessee) and Richard E. Woods.

- 6. Lab assignments (assignment no. and title):
  1. Read, view, and display an image file.
  2. Zooming and shrinking images by pixel replication.
  3. Image enhancement using intensity transformations.
  4. Histogram equalization and log transformation.
  5. Arithmetic operations and logic operations.

- 7. Lab assignments (cont.):
  6. Noise generators and noise reduction.
  7. Spatial filtering (smoothing and sharpening).
  8. Two-dimensional fast Fourier transform.
  9. Lowpass filtering.
  10. Converting a gray-level image to color.
  11. FFT and Hough transform.

- 11. Fundamental steps in image processing (diagram): problem domain → image acquisition → preprocessing → segmentation → representation & description → recognition & interpretation → result, with a knowledge base supporting the stages.

- 15. Ultrasound images: the profile of a fetus at 4 months (the face is about 4 cm long), and the fetal arm with the major arteries (radial and ulnar) clearly delineated. Ultrasound is another imaging modality.

- 20. Section through the Visible Human Male head, including the cerebellum, cerebral cortex, brainstem, and nasal passages (from the Head subset). This is an example from the Visible Human Project sponsored by the NIH.

- 38. Image processing using the MATLAB toolbox

- 40. Examples of MATLAB functions: input/output: `imread`, `imwrite`; image display: `imshow`; others: `axis`, `colorbar`, `colormap`, `fft`, `fft2`.

- 41. Image storage formats. To store an image, the image is represented as a two-dimensional matrix in which each value corresponds to the data associated with one image pixel. When storing an image, information about each pixel (the value of each colour channel in that pixel) has to be stored. Additional information may be associated with the image as a whole, such as its height and width, its depth, or the name of the person who created it. The most popular image storage formats include PostScript, GIF (Graphics Interchange Format), JPEG, TIFF (Tagged Image File Format), and BMP (Bitmap).

- 42. Chapter 2

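- 43. Image formation in the eye. Calculation of the retinal image of an object: for an object 15 m high viewed from 100 m, with about 17 mm between the center of the lens and the retina, similar triangles give $\frac{15}{100} = \frac{x}{17}$, so $x = 2.55$ mm. The eye has a sensor plane of about 100 million pixels.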
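
The similar-triangles calculation can be checked numerically. This is a minimal sketch assuming the values on the slide (a 15 m object, a 100 m viewing distance, and 17 mm from the lens center to the retina); the function name `retinal_image_height` is hypothetical.

```python
def retinal_image_height(object_height_m, distance_m, lens_to_retina_mm=17.0):
    """Retinal image height in mm, from object_height / distance = x / lens_to_retina."""
    return object_height_m / distance_m * lens_to_retina_mm

h = retinal_image_height(15, 100)
print(f"{h:.2f} mm")  # 2.55 mm
```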

- 44. The eyes have a USB 2.0-class data rate: there are 250,000 neurons in the optic nerve, with a variable voltage output on each nerve, giving 17.5 million neural samples per second at 12.8 bits per sample. That is 17.5 × 10^6 × 12.8 = 224 Mbps per eye, so the two eyes form roughly a 1/2 Gbps system. The data are compressed by lateral inhibition between the retinal neurons.

- 45. Image sensor: charge-coupled device (CCD), e.g. the Kodak KAF-3200E (2184 × 1472 pixels, pixel size 6.8 µm × 6.8 µm). A CCD is used to convert a continuous image into a digital image: it contains an array of light sensors and converts photons into electric charges accumulated in each sensor unit.

- 46. Typical IP system (block diagram with the blocks: scene, sensor, A/D, DIP for corrections and/or enhancements, storage media, D/A, and display).

- 48. Fundamentals of digital images (figure: a color image f(x,y) with its origin and x, y axes). An image is a multidimensional function of spatial coordinates. The spatial coordinates are (x, y) for the 2D case, such as a photograph; (x, y, z) for the 3D case, such as CT scan images; and (x, y, t) for movies. The function f may represent intensity (for monochrome images) or color (for color images).

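- 49. Representing a digital image. A digital image with N rows and M columns of picture elements (pixels) is written as

  $$f(x,y) = \begin{bmatrix} f(0,0) & f(0,1) & \cdots & f(0,M-1) \\ f(1,0) & f(1,1) & \cdots & f(1,M-1) \\ \vdots & \vdots & & \vdots \\ f(N-1,0) & f(N-1,1) & \cdots & f(N-1,M-1) \end{bmatrix}$$

  The value of f at (x, y) is the intensity (brightness) of the image at that point.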

- 50. Image types: intensity image or monochrome image. Each pixel corresponds to a light intensity, normally represented in gray scale (gray levels). (Figure: a block of pixels with their gray-scale values.)

- 51. Image types: color image or RGB image. Each pixel contains a vector representing its red, green, and blue components. (Figure: the R, G, and B component values of a block of pixels.)

- 52. Color image: for example, a 24-bit image, i.e. 24 bits per pixel: 8 bits for R (red), 8 bits for G (green), and 8 bits for B (blue). Since each value is in the range 0-255, this format supports 256 × 256 × 256 = 2^24 = 16,777,216 possible colors.
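
As a sketch of this arithmetic, the snippet below counts the 24-bit colors and packs three 8-bit channels into one 24-bit value. The helper names are hypothetical, and putting R in the high byte is an arbitrary choice for illustration; real file formats may order the bytes differently.

```python
def pack_rgb(r, g, b):
    """Pack three 8-bit channel values (0-255) into one 24-bit integer."""
    for v in (r, g, b):
        if not 0 <= v <= 255:
            raise ValueError("each channel must be in 0..255")
    return (r << 16) | (g << 8) | b

def unpack_rgb(value):
    """Split a 24-bit integer back into its (R, G, B) channels."""
    return (value >> 16) & 0xFF, (value >> 8) & 0xFF, value & 0xFF

num_colors = 2 ** 24                        # 8 bits x 3 channels
print(num_colors)                           # 16777216
print(256 * 256 * 256 == num_colors)        # True
print(unpack_rgb(pack_rgb(255, 128, 0)))    # (255, 128, 0)
```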

- 53. Image types: binary image or black-and-white image. Each pixel contains one bit: 1 represents white and 0 represents black. (Figure: a block of binary pixel data.)

- 54. Image types: indexed image. Each pixel contains an index number pointing to a color in a color table (figure: a block of index values). Color table:

| Index No. | Red component | Green component | Blue component |
|---|---|---|---|
| 1 | 0.1 | 0.5 | 0.3 |
| 2 | 1.0 | 0.0 | 0.0 |
| 3 | 0.0 | 1.0 | 0.0 |
| 4 | 0.5 | 0.5 | 0.5 |
| 5 | 0.2 | 0.8 | 0.9 |
| … | … | … | … |
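
The lookup that an indexed image implies can be sketched as follows, using the first five rows of the color table. The 2 × 3 index image is hypothetical, chosen only to exercise the table; an index missing from the table would raise a `KeyError`.

```python
# Color table: index -> (R, G, B), components in [0, 1].
COLOR_TABLE = {
    1: (0.1, 0.5, 0.3),
    2: (1.0, 0.0, 0.0),
    3: (0.0, 1.0, 0.0),
    4: (0.5, 0.5, 0.5),
    5: (0.2, 0.8, 0.9),
}

def index_to_rgb(index_image, table):
    """Replace every index in a 2-D index image by its RGB triple."""
    return [[table[i] for i in row] for row in index_image]

idx = [[1, 2, 3],
       [4, 5, 2]]          # hypothetical index values
rgb = index_to_rgb(idx, COLOR_TABLE)
print(rgb[0][1])           # (1.0, 0.0, 0.0): pure red
```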

- 55. Image sampling: discretizing an image in the spatial domain. Spatial resolution (image resolution) is expressed by the pixel size or the number of pixels.

- 56. Sampling and quantization. The spatial and amplitude digitization of f(x,y) is called image sampling when it refers to the spatial coordinates (x, y), and gray-level quantization when it refers to the amplitude. The digitization process requires decisions about the values of N and M (where N × M is the size of the image array) and the number of discrete gray levels allowed for each pixel.

- 58. Example of image sampling

- 59. Example of image resampling
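
The resampling figures are not reproduced here, but the two simplest operations can be sketched for integer factors: shrinking by keeping every factor-th row and column (coarser sampling) and zooming by pixel replication, the method named in the lab assignments. Images are plain lists of rows; the function names are hypothetical.

```python
def shrink(img, factor):
    """Subsample: keep every `factor`-th row and every `factor`-th column."""
    return [row[::factor] for row in img[::factor]]

def zoom(img, factor):
    """Pixel replication: copy each pixel into a factor x factor block."""
    out = []
    for row in img:
        wide = [v for v in row for _ in range(factor)]   # repeat each pixel in the row
        out.extend([list(wide) for _ in range(factor)])  # repeat the widened row
    return out

img = [[10, 20],
       [30, 40]]
big = zoom(img, 2)
print(big)             # [[10, 10, 20, 20], [10, 10, 20, 20], [30, 30, 40, 40], [30, 30, 40, 40]]
print(shrink(big, 2))  # [[10, 20], [30, 40]]
```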

- 60. Effect of quantization levels (figure panels: 16, 8, 4, and 2 levels)
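
Requantizing to fewer levels, as in the figure, can be sketched as below. It assumes 8-bit input and uniform bins (k bits give 2^k levels, so 16, 8, 4, and 2 levels correspond to k = 4, 3, 2, 1); each bin is mapped back onto [0, 255] so the result stays displayable. The function name is hypothetical.

```python
def quantize(img, levels, max_level=255):
    """Requantize gray levels in [0, max_level] to `levels` uniform levels (levels >= 2).

    Each pixel is assigned to one of `levels` equal-width bins, and the bin
    index is mapped back onto [0, max_level] so the image stays displayable."""
    step = (max_level + 1) / levels
    out = []
    for row in img:
        new_row = []
        for r in row:
            k = min(int(r // step), levels - 1)              # bin index 0..levels-1
            new_row.append(round(k * max_level / (levels - 1)))
        out.append(new_row)
    return out

img = [[0, 64, 128, 200, 255]]
for levels in (16, 8, 4, 2):        # k = 4, 3, 2, 1 bits per pixel
    print(levels, quantize(img, levels))
```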

- 62. Sampling and quantization. Different versions (images) of the same object can be generated by varying N and M, varying k (the number of bits per pixel), or varying both.

- 63. Isopreference curves (in the Nk plane): each point represents an image with values of N and k equal to the coordinates of that point, and points lying on an isopreference curve correspond to images of equal subjective quality.

- 64. Examples of images with different amounts of detail

- 66. Sampling and quantization. Conclusions: the quality of images increases as N and k increase; sometimes, for fixed N, the quality improves by decreasing k (increased contrast); for images with large amounts of detail, only a few gray levels are needed.

- 69. Some basic relationships between pixels (figure: the 3 × 3 neighborhood of (x, y), containing (x±1, y), (x, y±1), and (x±1, y±1), with the origin at (0, 0)). Definitions: f(x,y) is a digital image; p and q are pixels; S is a subset of the pixels of f(x,y).

- 70. Neighbors of a pixel. The 4-neighbors of a pixel p at (x, y) are $N_4(p) = \{(x+1,y), (x-1,y), (x,y+1), (x,y-1)\}$. The neighborhood relation is used to tell which pixels are adjacent, and it is useful for analyzing regions. Note: $q \in N_4(p)$ implies $p \in N_4(q)$. The 4-neighborhood relation considers only vertical and horizontal neighbors.

- 71. Neighbors of a pixel (cont.). The 8-neighbors of p are $N_8(p) = \{(x-1,y-1), (x,y-1), (x+1,y-1), (x-1,y), (x+1,y), (x-1,y+1), (x,y+1), (x+1,y+1)\}$. The 8-neighborhood relation considers all neighbor pixels.

- 72. Neighbors of a pixel (cont.). The diagonal neighbors of p are $N_D(p) = \{(x-1,y-1), (x+1,y-1), (x-1,y+1), (x+1,y+1)\}$. The diagonal-neighborhood relation considers only diagonal neighbor pixels.
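
The three neighborhoods can be written directly as coordinate sets. This sketch follows the definitions above; `in_image`, which drops neighbors falling outside an image, is a hypothetical helper added because border pixels have fewer neighbors.

```python
def n4(x, y):
    """4-neighbors: the horizontal and vertical neighbors of (x, y)."""
    return {(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)}

def nd(x, y):
    """Diagonal neighbors of (x, y)."""
    return {(x + 1, y + 1), (x + 1, y - 1), (x - 1, y + 1), (x - 1, y - 1)}

def n8(x, y):
    """8-neighbors: the union of the 4-neighbors and the diagonal neighbors."""
    return n4(x, y) | nd(x, y)

def in_image(coords, rows, cols):
    """Keep only the coordinates that fall inside a rows x cols image."""
    return {(x, y) for x, y in coords if 0 <= x < rows and 0 <= y < cols}

print(len(n8(5, 5)))                     # 8
print(sorted(in_image(n8(0, 0), 3, 3)))  # [(0, 1), (1, 0), (1, 1)]
```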

- 73. Connectivity. Two pixels are connected if they are neighbors (i.e., adjacent in some sense, e.g. $N_4(p)$, $N_8(p)$) and their gray levels satisfy a specified criterion of similarity (e.g., equality). V is the set of gray-level values used to define adjacency (e.g., V = {1} for adjacency of pixels of value 1).

- 74. Adjacency. We consider three types of adjacency. 4-adjacency: two pixels p and q with values from V are 4-adjacent if q is in the set $N_4(p)$. 8-adjacency: p and q with values from V are 8-adjacent if q is in the set $N_8(p)$.

- 75. Adjacency (cont.). The third type is m-adjacency (mixed adjacency): p and q with values from V are m-adjacent if q is in $N_4(p)$, or q is in $N_D(p)$ and the set $N_4(p) \cap N_4(q)$ has no pixels whose values are from V. Two image subsets S1 and S2 are adjacent if some pixel in S1 is adjacent to some pixel in S2.

- 76. Adjacency (cont.). Mixed adjacency is a modification of 8-adjacency and is used to eliminate the multiple path connections that often arise when 8-adjacency is used. (Figure: the binary arrangement 0 1 1 / 0 1 0 / 0 0 1 shown three times: the pixels, their 8-adjacency, and their m-adjacency.)
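
A sketch of the three adjacency tests, applied to the 0 1 1 / 0 1 0 / 0 0 1 arrangement with V = {1}. It assumes x indexes rows and y indexes columns (as in the image matrix f(x,y)) and treats pixels outside the image as not belonging to V; all names are hypothetical. It shows why m-adjacency removes the redundant diagonal link that 8-adjacency allows.

```python
def n4(p):
    """4-neighbors of p = (x, y)."""
    x, y = p
    return {(x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)}

def nd(p):
    """Diagonal neighbors of p."""
    x, y = p
    return {(x + 1, y + 1), (x + 1, y - 1), (x - 1, y + 1), (x - 1, y - 1)}

def in_v(img, p, V):
    """True if p lies inside the image and its gray level belongs to V."""
    x, y = p
    return 0 <= x < len(img) and 0 <= y < len(img[0]) and img[x][y] in V

def adjacent4(img, p, q, V):
    return in_v(img, p, V) and in_v(img, q, V) and q in n4(p)

def adjacent8(img, p, q, V):
    return in_v(img, p, V) and in_v(img, q, V) and q in (n4(p) | nd(p))

def adjacent_m(img, p, q, V):
    """m-adjacency: 4-adjacent, or diagonal with no shared 4-neighbor in V."""
    if not (in_v(img, p, V) and in_v(img, q, V)):
        return False
    if q in n4(p):
        return True
    return q in nd(p) and not any(in_v(img, r, V) for r in n4(p) & n4(q))

img = [[0, 1, 1],
       [0, 1, 0],
       [0, 0, 1]]
V = {1}
# (0, 2) and (1, 1) are diagonal neighbors sharing the 4-neighbor (0, 1).
print(adjacent8(img, (0, 2), (1, 1), V))   # True: a second, redundant path
print(adjacent_m(img, (0, 2), (1, 1), V))  # False: the path goes through (0, 1)
print(adjacent_m(img, (1, 1), (2, 2), V))  # True: no shared 4-neighbor in V
```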

- 77. Path. A path from pixel p at (x, y) to pixel q at (s, t) is a sequence of distinct pixels $(x_0, y_0), (x_1, y_1), (x_2, y_2), \dots, (x_n, y_n)$ such that $(x_0, y_0) = (x, y)$, $(x_n, y_n) = (s, t)$, and $(x_i, y_i)$ is adjacent to $(x_{i-1}, y_{i-1})$ for $i = 1, \dots, n$. Depending on the type of adjacency, we can define 4-paths, 8-paths, or m-paths.

- 78. Distance. For pixels p, q, and z with coordinates (x, y), (s, t), and (u, v), D is a distance function or metric if:
  - $D(p,q) \ge 0$, with $D(p,q) = 0$ if and only if $p = q$;
  - $D(p,q) = D(q,p)$;
  - $D(p,z) \le D(p,q) + D(q,z)$.

  Euclidean distance: $D_e(p,q) = \sqrt{(x-s)^2 + (y-t)^2}$.

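- 79. Distance (cont.). The $D_4$ distance (city-block distance) is defined as $D_4(p,q) = |x-s| + |y-t|$. The pixels with $D_4 \le 2$ from p form a diamond centered at p:

  $$\begin{matrix} & & 2 & & \\ & 2 & 1 & 2 & \\ 2 & 1 & 0 & 1 & 2 \\ & 2 & 1 & 2 & \\ & & 2 & & \end{matrix}$$

  The pixels with $D_4 = 1$ are the 4-neighbors of p. The diagonal neighbors of p have $D_4 = 2$, so they are not 4-neighbors.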

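- 80. Distance (cont.). The $D_8$ distance (chessboard distance) is defined as $D_8(p,q) = \max(|x-s|, |y-t|)$. The pixels with $D_8 \le 2$ from p form a square centered at p:

  $$\begin{matrix} 2 & 2 & 2 & 2 & 2 \\ 2 & 1 & 1 & 1 & 2 \\ 2 & 1 & 0 & 1 & 2 \\ 2 & 1 & 1 & 1 & 2 \\ 2 & 2 & 2 & 2 & 2 \end{matrix}$$

  The pixels with $D_8 = 1$ are the 8-neighbors of p.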
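
The three metrics translate directly into code. The hypothetical `show` helper prints each distance from the center of a 5 × 5 grid, hiding values above 2, so $D_4$ produces a diamond and $D_8$ a square around the center.

```python
import math

def d_euclidean(p, q):
    """D_e(p, q) = sqrt((x - s)^2 + (y - t)^2)."""
    (x, y), (s, t) = p, q
    return math.hypot(x - s, y - t)

def d4(p, q):
    """City-block distance: |x - s| + |y - t|."""
    (x, y), (s, t) = p, q
    return abs(x - s) + abs(y - t)

def d8(p, q):
    """Chessboard distance: max(|x - s|, |y - t|)."""
    (x, y), (s, t) = p, q
    return max(abs(x - s), abs(y - t))

def show(dist, size=5, limit=2):
    """Print dist(center, pixel) over a size x size grid, hiding values > limit."""
    c = (size // 2, size // 2)
    for x in range(size):
        cells = [dist(c, (x, y)) for y in range(size)]
        print(" ".join(str(d) if d <= limit else "." for d in cells))

show(d4)  # diamond of 0, 1, 2
show(d8)  # square of 0, 1, 2
print(d_euclidean((0, 0), (3, 4)), d4((0, 0), (3, 4)), d8((0, 0), (3, 4)))  # 5.0 7 4
```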

- 83. Our objectives in studying this topic: 1- to know how to read transformation charts and histograms; 2- to evaluate image quality; 3- to understand the principles of IP techniques; 4- to be able to develop IP algorithms. Most IP techniques depend on the concepts used in this chapter.

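- 84. Spatial domain methods: procedures that operate directly on the aggregate of pixels composing an image, $g(x,y) = T[f(x,y)]$, where f(x,y) is the input image, g(x,y) is the processed image, and T is an operator on f defined over a neighborhood of (x, y). A neighborhood about (x, y) is defined by using a square (or rectangular) subimage area centered at (x, y).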

- 85. (Figure: input image f(x,y) and output image g(x,y).)

- 86. Spatial domain methods (cont.). When the neighborhood is 1 × 1, g depends only on the value of f at (x, y), and T becomes a gray-level transformation (or mapping) function $s = T(r)$, where r denotes the pixel intensity before processing and s denotes the pixel intensity after processing.

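- 87. Image enhancement in the spatial domain. Contrast stretching: values of r below m are compressed into a narrow range of dark levels of s, and values above m are brightened, which increases the contrast of the image. Thresholding: produces a binary image from a gray-level one.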

- 88. Some simple intensity transformations: linear (negative, identity), logarithmic (log, inverse log), and power-law (nth power, nth root).

- 89. 1- Image negatives are obtained by using the transformation function $s = T(r) = L - 1 - r$, where $[0, L-1]$ is the range of gray levels.
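
The negative transformation is a one-line mapping. A minimal sketch assuming 8-bit images (L = 256) stored as lists of rows; the function name is hypothetical.

```python
def negative(img, L=256):
    """Image negative: s = (L - 1) - r for every pixel r."""
    return [[(L - 1) - r for r in row] for row in img]

print(negative([[0, 100, 255]]))  # [[255, 155, 0]]
```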

- 91. 2- Log transformations

- 94. 3- Power-law transformations

- 95. (Figure: power-law curves; with γ = c = 1 the transformation is the identity.)
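
The log and power-law slides survive only as figures, so this sketch assumes the usual forms from Gonzalez and Woods: s = c·log(1 + r) and s = c·r^γ. Here c for the log is chosen so that L − 1 maps to L − 1, and the power law is applied to intensities normalized to [0, 1]; both choices and the function names are assumptions. With γ = c = 1 the power law returns the input unchanged.

```python
import math

def log_transform(img, L=256):
    """s = c * log(1 + r), with c chosen so that r = L - 1 maps to s = L - 1."""
    c = (L - 1) / math.log(L)          # because log(1 + (L - 1)) = log(L)
    return [[round(c * math.log(1 + r)) for r in row] for row in img]

def power_law(img, gamma, c=1.0, L=256):
    """s = c * r**gamma on intensities normalized to [0, 1], rescaled to [0, L - 1]."""
    top = L - 1
    return [[round(top * min(1.0, c * (r / top) ** gamma)) for r in row]
            for row in img]

img = [[0, 64, 128, 255]]
print(log_transform(img))         # dark levels are spread out
print(power_law(img, gamma=0.4))  # gamma < 1 brightens
print(power_law(img, gamma=1.0))  # gamma = c = 1: identity
```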

- 97. Piecewise-linear transformation functions. 1- Contrast-stretching transformation: the locations of (r1, s1) and (r2, s2) control the shape of the transformation function. If r1 = s1 and r2 = s2, the transformation is a linear function that produces no changes.

- 98. If r1 = r2, s1 = 0, and s2 = L−1, the transformation becomes a thresholding function that creates a binary image. Intermediate values of (r1, s1) and (r2, s2) produce various degrees of spread in the gray levels of the output image, thus affecting its contrast. Generally, r1 ≤ r2 and s1 ≤ s2 are assumed.
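
The contrast-stretching function can be sketched as a piecewise-linear mapping, assuming (as in the usual figure) that the curve starts at (0, 0) and ends at (L−1, L−1). It assumes r1 ≤ r2 and s1 ≤ s2; the function name and the example points are hypothetical. Setting r1 = s1 and r2 = s2 gives the identity, and r1 = r2 with s1 = 0, s2 = L−1 gives thresholding.

```python
def contrast_stretch(img, r1, s1, r2, s2, L=256):
    """Piecewise-linear mapping through (0, 0), (r1, s1), (r2, s2), (L-1, L-1).

    Assumes r1 <= r2 and s1 <= s2."""
    top = L - 1

    def T(r):
        if r <= r1:                                      # first segment
            return s1 * r / r1 if r1 > 0 else s1
        if r <= r2:                                      # middle segment (r1 < r2 here)
            return s1 + (s2 - s1) * (r - r1) / (r2 - r1)
        return s2 + (top - s2) * (r - r2) / (top - r2)   # last segment

    return [[round(T(r)) for r in row] for row in img]

img = [[0, 50, 100, 150, 200, 255]]
print(contrast_stretch(img, 100, 100, 200, 200))  # identity: r1 = s1, r2 = s2
print(contrast_stretch(img, 128, 0, 128, 255))    # thresholding at 128
print(contrast_stretch(img, 70, 20, 180, 235))    # stretch the mid-range
```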

- 100. 2- Gray-level slicing transformation: highlights a specific range of gray levels in an image (e.g. to enhance certain features). One approach is to display a high value for all gray levels in the range of interest and a low value for all other gray levels, which produces a binary image.

- 101. Gray-level slicing (cont.). The second approach is to brighten the desired range of gray levels but preserve the background and the gray-level tonalities in the image.
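
Both slicing approaches reduce to a per-pixel test against the range of interest [a, b]. A minimal sketch; the range, the high/low output values, and the function names are hypothetical parameters.

```python
def slice_binary(img, a, b, high=255, low=0):
    """Approach 1: high value inside [a, b], low value elsewhere (binary output)."""
    return [[high if a <= r <= b else low for r in row] for row in img]

def slice_preserve(img, a, b, high=255):
    """Approach 2: brighten [a, b] but keep all other gray levels unchanged."""
    return [[high if a <= r <= b else r for r in row] for row in img]

img = [[10, 120, 150, 220]]
print(slice_binary(img, 100, 160))    # [[0, 255, 255, 0]]
print(slice_preserve(img, 100, 160))  # [[10, 255, 255, 220]]
```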

- 102. Gray-Level Slicing

- 104. Histogram processing. The histogram of a digital image with gray levels from 0 to L−1 is the discrete function $h(r_k) = n_k$, $k = 0, 1, 2, \dots, L-1$, where $r_k$ is the kth gray level, $n_k$ is the number of pixels in the image with that gray level, and n is the total number of pixels in the image. The normalized histogram is $p(r_k) = n_k / n$; the sum of all its components is 1.
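
Computing the histogram and its normalized form takes one pass over the pixels. A sketch assuming integer gray levels in [0, L−1]; the tiny 2 × 4 image and the function names are hypothetical.

```python
def histogram(img, L=256):
    """h[k] = n_k, the number of pixels with gray level k."""
    h = [0] * L
    for row in img:
        for r in row:
            h[r] += 1
    return h

def normalized_histogram(img, L=256):
    """p(r_k) = n_k / n, where n is the total number of pixels."""
    h = histogram(img, L)
    n = sum(h)
    return [nk / n for nk in h]

img = [[0, 1, 1, 3],
       [3, 3, 2, 1]]
print(histogram(img, L=4))             # [1, 3, 1, 3]
print(normalized_histogram(img, L=4))  # [0.125, 0.375, 0.125, 0.375]
```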

- 105. The (normalized) histogram is invariant under certain image operations: rotation, scaling, and flipping (figure panels: rotate clockwise, scale, flip).

- 107. Histogram for an image with total n pixels

- 108. Histogram stretching: a pixel of f(x,y) with gray level r is transformed to the gray level g(x,y) of the new image by

  $$g(x,y) = \frac{r - r_{\min}}{r_{\max} - r_{\min}}\,(\mathrm{Max} - \mathrm{Min}) + \mathrm{Min}$$

  where $r_{\max}$ and $r_{\min}$ are the largest and smallest gray levels in f(x,y), and Max and Min are the maximum and minimum gray-level values possible for g(x,y) (0-255). Histogram shrinking:

  $$g(x,y) = \frac{\mathrm{Max} - \mathrm{Min}}{r_{\max} - r_{\min}}\,(r - r_{\min}) + \mathrm{Min}$$

  where Max and Min are the maximum and minimum desired gray levels in the compressed (shrunk) histogram.
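
The stretching formula can be sketched as below (function name hypothetical). The same expression also performs shrinking when [new_min, new_max] is the narrower desired range. The guard for a flat image (r_max = r_min) is an addition, since the formula would otherwise divide by zero.

```python
def histogram_stretch(img, new_min=0, new_max=255):
    """g = (r - r_min) / (r_max - r_min) * (new_max - new_min) + new_min."""
    r_min = min(min(row) for row in img)
    r_max = max(max(row) for row in img)
    if r_max == r_min:                       # flat image: nothing to stretch
        return [[new_min for _ in row] for row in img]
    scale = (new_max - new_min) / (r_max - r_min)
    return [[round((r - r_min) * scale + new_min) for r in row] for row in img]

img = [[80, 100], [120, 160]]
print(histogram_stretch(img))            # [[0, 64], [128, 255]]
print(histogram_stretch(img, 100, 120))  # [[100, 105], [110, 120]]
```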

- 109. Histogram processing. Histograms are the basis for numerous spatial-domain processing techniques, and they also provide useful image statistics. Types of processing: histogram equalization, histogram matching (specification), and local enhancement.

- 111. Histogram equalization example. Suppose the gray levels of an image are k = 0, 1, 2, 3, the numbers of pixels with these gray levels (the histogram values) are 10, 7, 8, 2, and the maximum gray level available is 7 (3 bits/pixel).
  1. Compute the running sums of these values: 10, 17, 25, 27.
  2. Normalize by dividing by the total number of pixels, 27: 10/27, 17/27, 25/27, 27/27.
  3. Multiply by the maximum gray level available, 7, and round to the closest integer: 2.59, 4.41, 6.48, 7 give the equalized values 3, 4, 6, 7.
  4. Finally, map every pixel with original gray level k = 0, 1, 2, 3 to the new gray levels 3, 4, 6, 7, respectively.
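
The four steps of the worked example can be reproduced in a few lines. A sketch with hypothetical function names; rounding is done half-up, which matches the example (no value there falls exactly on .5).

```python
def equalize(hist, max_level):
    """Map each gray level k to round(max_level * (n_0 + ... + n_k) / n)."""
    n = sum(hist)
    mapping, running = [], 0
    for nk in hist:
        running += nk                                # step 1: running sum
        cdf = running / n                            # step 2: divide by n
        mapping.append(int(cdf * max_level + 0.5))   # step 3: scale, round half up
    return mapping

def apply_mapping(img, mapping):
    """Step 4: replace every gray level k in the image by mapping[k]."""
    return [[mapping[r] for r in row] for row in img]

mapping = equalize([10, 7, 8, 2], max_level=7)
print(mapping)                                   # [3, 4, 6, 7]
print(apply_mapping([[0, 3], [1, 2]], mapping))  # [[3, 7], [4, 6]]
```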

- 116. Use of histogram statistics for image enhancement. The global mean and variance are measured over an entire image and are useful for overall intensity and contrast adjustments. A more powerful use of these parameters is in local enhancement. Let (x, y) be the coordinates of a pixel in an image, and let $S_{xy}$ denote a neighborhood (subimage) of specified size centered at (x, y). The mean value $m_{S_{xy}}$ and the variance $\sigma^2_{S_{xy}}$ of the pixels in $S_{xy}$ can be computed as

  $$m_{S_{xy}} = \frac{1}{|S_{xy}|}\sum_{(s,t)\in S_{xy}} r_{s,t} = \sum_{k} r_k\, p_{S_{xy}}(r_k)$$

  $$\sigma^2_{S_{xy}} = \frac{1}{|S_{xy}|}\sum_{(s,t)\in S_{xy}} \left(r_{s,t} - m_{S_{xy}}\right)^2 = \sum_{k} \left(r_k - m_{S_{xy}}\right)^2 p_{S_{xy}}(r_k)$$

- 117. Here $r_{s,t}$ is the gray level at coordinates (s, t) in the neighborhood, $|S_{xy}|$ is the number of pixels in the neighborhood, and $p_{S_{xy}}(r_k)$ is the neighborhood's normalized histogram component for gray level $r_k$. The local mean is a measure of the average gray level in the neighborhood $S_{xy}$, and the variance is a measure of contrast in that neighborhood.
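
Local statistics can be computed directly from the pixels in the neighborhood, which is equivalent to the histogram form because each gray level is weighted by its relative frequency. This sketch assumes a square (2·half+1) × (2·half+1) neighborhood and simply ignores pixels outside the image at the borders; both choices and the function name are assumptions.

```python
def local_stats(img, x, y, half=1):
    """Mean and variance of the neighborhood S_xy centered at (x, y)."""
    values = [img[s][t]
              for s in range(x - half, x + half + 1)
              for t in range(y - half, y + half + 1)
              if 0 <= s < len(img) and 0 <= t < len(img[0])]  # skip outside pixels
    n = len(values)
    mean = sum(values) / n                               # local mean
    variance = sum((v - mean) ** 2 for v in values) / n  # local variance
    return mean, variance

img = [[1, 2, 3],
       [4, 5, 6],
       [7, 8, 9]]
print(local_stats(img, 1, 1))  # mean 5.0, variance 60/9
print(local_stats(img, 0, 0))  # (3.0, 2.5): only 4 pixels lie inside the image
```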

- 124. Image subtraction (figure: one image minus another gives their absolute difference).

- 126. Image averaging. A noisy image is modeled as $g(x,y) = f(x,y) + n(x,y)$, where n(x,y) is the noise. Averaging M different noisy images gives

  $$\bar g(x,y) = \frac{1}{M}\sum_{i=1}^{M} g_i(x,y)$$

- 127. Image averaging (cont.). As M increases, the variability of the pixel values at each location decreases. This means that the averaged image approaches f(x,y) as the number of noisy images used in the averaging process increases. The images must be registered (aligned) to avoid blurring in the output image.
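
The effect of averaging can be simulated. This sketch assumes zero-mean Gaussian noise (σ = 20), uncorrelated between images, and perfectly registered images of a flat 8 × 8 scene; all of these, and the helper names, are hypothetical. The mean absolute error should shrink roughly in proportion to 1/√M.

```python
import random

def average_images(images):
    """g_bar(x, y) = (1/M) * sum of g_i(x, y) over M registered images."""
    M = len(images)
    rows, cols = len(images[0]), len(images[0][0])
    return [[sum(img[x][y] for img in images) / M for y in range(cols)]
            for x in range(rows)]

def mean_abs_error(a, b):
    """Average |a - b| over all pixels, used to measure distance from f."""
    diffs = [abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb)]
    return sum(diffs) / len(diffs)

random.seed(0)
f = [[100.0] * 8 for _ in range(8)]          # hypothetical noise-free image
noisy = [[[v + random.gauss(0, 20) for v in row] for row in f]
         for _ in range(64)]                  # 64 noisy copies g_i = f + n_i

for M in (1, 4, 16, 64):
    print(M, round(mean_abs_error(average_images(noisy[:M]), f), 2))
```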

- 129. Basics of spatial filtering: template, window, and mask operation. Question: how do we compute the 3 × 3 average value at every pixel (figure: a grid of pixel values)? Solution: imagine a 3 × 3 window (the masking window) that can be placed anywhere on the image.

- 130. Template, window, and mask operation (cont.):
  - Step 1: move the window to the first location where we want to compute the average value, and select only the pixels inside the window (the sub-image p).
  - Step 2: compute the average value of the sub-image.
  - Step 3: place the result at the corresponding pixel in the output image.
  - Step 4: move the window to the next location and go to Step 2.

  (Figure: the original image, a 3 × 3 sub-image p, and the output image.)

- 131. Template, window, and mask operation (cont.). The 3 × 3 averaging method is one example of a mask operation, i.e. one of the spatial filters. A mask operation has a corresponding mask (sometimes called a window or template); a mask of size m × n contains coefficients that are multiplied with the pixel values. Mask coefficients:

  $$\begin{bmatrix} w(-1,-1) & w(-1,0) & w(-1,1) \\ w(0,-1) & w(0,0) & w(0,1) \\ w(1,-1) & w(1,0) & w(1,1) \end{bmatrix}$$

  Example: moving averaging. The mask of the 3 × 3 moving-average filter has all coefficients equal to 1/9:

  $$\frac{1}{9}\begin{bmatrix} 1 & 1 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{bmatrix}$$

- 133. Template, window, and mask operation (cont.). The mask operation at each point is performed by: 1. moving the reference point (center) of the mask to the location to be computed; 2. computing the sum of products between the mask coefficients and the pixels in the subimage under the mask. For example, for a 3 × 3 mask the response R of the linear filter at a point (x, y) in the image is

  $$R = w(-1,-1)f(x-1,y-1) + w(-1,0)f(x-1,y) + \dots + w(0,0)f(x,y) + \dots + w(1,1)f(x+1,y+1) = \sum_{s=-1}^{1}\sum_{t=-1}^{1} w(s,t)\,f(x+s,y+t)$$
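
The sum of products is a few nested loops. This sketch stores the mask as `mask[s+1][t+1]` so that it matches w(s, t), and copies border pixels unchanged, which is only one of several possible border policies; the function name and the 4 × 4 test image are hypothetical. It applies the mask without flipping it (correlation), which is the same as convolution for symmetric masks such as the moving average.

```python
def filter2d(img, mask):
    """R(x, y) = sum over s, t in {-1, 0, 1} of w(s, t) * f(x + s, y + t).

    mask[s + 1][t + 1] holds w(s, t); border pixels are copied unchanged."""
    rows, cols = len(img), len(img[0])
    out = [list(row) for row in img]
    for x in range(1, rows - 1):
        for y in range(1, cols - 1):
            out[x][y] = sum(mask[s + 1][t + 1] * img[x + s][y + t]
                            for s in (-1, 0, 1) for t in (-1, 0, 1))
    return out

average_mask = [[1 / 9] * 3 for _ in range(3)]   # 3x3 moving average
img = [[4, 1, 9, 2],
       [2, 2, 3, 8],
       [2, 9, 7, 1],
       [5, 5, 5, 5]]
smoothed = filter2d(img, average_mask)
print(round(smoothed[1][1], 2))  # 4.33 = (4+1+9+2+2+3+2+9+7) / 9
```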

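- 134. Template, window, and mask operation (cont.). Examples of masks:
  - Sobel operators: $\begin{bmatrix} -1 & -2 & -1 \\ 0 & 0 & 0 \\ 1 & 2 & 1 \end{bmatrix}$ and $\begin{bmatrix} -1 & 0 & 1 \\ -2 & 0 & 2 \\ -1 & 0 & 1 \end{bmatrix}$
  - 3 × 3 moving-average filter: $\frac{1}{9}\begin{bmatrix} 1 & 1 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{bmatrix}$
  - 3 × 3 sharpening filter: $\begin{bmatrix} -1 & -1 & -1 \\ -1 & 8 & -1 \\ -1 & -1 & -1 \end{bmatrix}$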

- 139. (a) Original image; (b) to (f) images smoothed with square averaging filters (mask sizes N = 3, 5, 9, 15).

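- 140. A lowpass filter (kernel) blurs the original image:
  - All positive values in a filter indicate a blurring operation.
  - The larger the kernel, the stronger the blur.
  - The flatter the kernel, the stronger the blur.

  Example kernels, from more blur to less blur: $\frac{1}{9}\begin{bmatrix} 1 & 1 & 1 \\ 1 & 1 & 1 \\ 1 & 1 & 1 \end{bmatrix}$, $\frac{1}{10}\begin{bmatrix} 1 & 1 & 1 \\ 1 & 2 & 1 \\ 1 & 1 & 1 \end{bmatrix}$, $\frac{1}{12}\begin{bmatrix} 1 & 1 & 1 \\ 1 & 4 & 1 \\ 1 & 1 & 1 \end{bmatrix}$.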

- 141. Nonlinear smoothing filters (order-statistics filters) are nonlinear spatial filters whose response is based on ordering (ranking) the pixels contained in the image area encompassed by the filter, and then replacing the value of the center pixel with the value determined by the ranking result. Median, max, and min filters are examples of order-statistics filters; the median filter is the most useful of them.
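
A median filter ranks the neighborhood values and keeps the middle one. A minimal sketch for a 3 × 3 window that copies border pixels unchanged (a simplifying assumption); the function name and test image are hypothetical.

```python
def median_filter(img, half=1):
    """Replace each interior pixel by the median of its (2*half+1)^2 neighborhood."""
    rows, cols = len(img), len(img[0])
    out = [list(row) for row in img]
    for x in range(half, rows - half):
        for y in range(half, cols - half):
            window = sorted(img[s][t]
                            for s in range(x - half, x + half + 1)
                            for t in range(y - half, y + half + 1))
            out[x][y] = window[len(window) // 2]   # middle of the ranking
    return out

# A flat region with one "salt" noise pixel.
img = [[10, 10, 10, 10],
       [10, 255, 10, 10],
       [10, 10, 10, 10],
       [10, 10, 10, 10]]
print(median_filter(img)[1][1])  # 10: the outlier is removed
```

Replacing `window[len(window) // 2]` by `window[-1]` or `window[0]` gives the max and min filters.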

- 144. Sharpening filters highlight fine detail or enhance blurred detail. Smoothing is analogous to integration (summation), while sharpening is analogous to differentiation (differences). Categories of sharpening filters: derivative operators and high-boost filtering.

- 145. Derivatives

- 146. Derivatives. For a one-dimensional digital function, the first derivative is defined in terms of differences as

  $$\frac{\partial f}{\partial x} = f(x+1) - f(x)$$

  and the second derivative as

  $$\frac{\partial^2 f}{\partial x^2} = f(x+1) + f(x-1) - 2f(x)$$

- 148. Digital function derivatives.
  - First derivative: zero in constant gray-level segments; nonzero at the onset of steps or ramps; nonzero along ramps.
  - Second derivative: zero in constant gray-level segments; nonzero at the onset and end of steps or ramps; zero along ramps of constant slope.
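
The difference formulas can be applied to a 1-D gray-level profile to check the properties listed above. The profile is hypothetical: a flat segment, a ramp, another flat segment, and a step.

```python
def first_derivative(f):
    """df/dx ~ f(x+1) - f(x), for x = 0 .. len(f) - 2."""
    return [f[x + 1] - f[x] for x in range(len(f) - 1)]

def second_derivative(f):
    """d2f/dx2 ~ f(x+1) + f(x-1) - 2 f(x), for x = 1 .. len(f) - 2."""
    return [f[x + 1] + f[x - 1] - 2 * f[x] for x in range(1, len(f) - 1)]

# Hypothetical profile: flat, ramp down, flat, step up, flat.
profile = [6, 6, 6, 5, 4, 3, 2, 1, 1, 1, 6, 6, 6]
print(first_derivative(profile))   # [0, 0, -1, -1, -1, -1, -1, 0, 0, 5, 0, 0]
print(second_derivative(profile))  # [0, -1, 0, 0, 0, 0, 1, 0, 5, -5, 0]
```

Along the ramp the first derivative stays at -1, while the second derivative is zero except at the ramp's onset (-1) and end (+1); at the step the second derivative gives the double response 5, -5.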

- 149. Observations: 1st-order derivatives produce thicker edges in an image; 2nd-order derivatives have a stronger response to fine detail; 1st-order derivatives have a stronger response to a gray-level step; 2nd-order derivatives produce a double response at step changes in gray level; and 2nd-order derivatives respond more strongly to a line than to a step, and to a point than to a line.

- 151. Roberts operators

- 152. Prewitt first-derivative filter masks of size 3 × 3 in the x and y directions

- 153. Sobel first-derivative filter masks of size 3 × 3 in the x and y directions
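
A sketch of first-derivative edge detection with the Sobel masks listed earlier in this chapter. It uses |Gx| + |Gy|, a common approximation of the gradient magnitude, assumes x indexes rows, and leaves border pixels at 0; these choices and the function names are assumptions.

```python
SOBEL_X = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]      # responds to changes along x (down the rows)
SOBEL_Y = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]     # responds to changes along y (across the columns)

def correlate_at(img, mask, x, y):
    """Sum of products of a 3x3 mask and the neighborhood of (x, y)."""
    return sum(mask[s + 1][t + 1] * img[x + s][y + t]
               for s in (-1, 0, 1) for t in (-1, 0, 1))

def sobel_magnitude(img):
    """Approximate gradient magnitude |Gx| + |Gy| at interior pixels (borders = 0)."""
    rows, cols = len(img), len(img[0])
    out = [[0] * cols for _ in range(rows)]
    for x in range(1, rows - 1):
        for y in range(1, cols - 1):
            gx = correlate_at(img, SOBEL_X, x, y)
            gy = correlate_at(img, SOBEL_Y, x, y)
            out[x][y] = abs(gx) + abs(gy)
    return out

# Vertical edge: dark left half, bright right half.
img = [[0, 0, 10, 10] for _ in range(4)]
print(sobel_magnitude(img))  # [[0, 0, 0, 0], [0, 40, 40, 0], [0, 40, 40, 0], [0, 0, 0, 0]]
```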

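- 158. 2-D second-order derivatives for image enhancement. The Laplacian is a linear operator:

  $$\nabla^2 f = \frac{\partial^2 f}{\partial x^2} + \frac{\partial^2 f}{\partial y^2}$$

  Discrete version:

  $$\frac{\partial^2 f}{\partial x^2} = f(x+1,y) + f(x-1,y) - 2f(x,y), \qquad \frac{\partial^2 f}{\partial y^2} = f(x,y+1) + f(x,y-1) - 2f(x,y)$$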

- 160. Laplacian spatial filtering. The sum of the coefficients is 0, indicating that when the filter passes over regions of almost constant gray level, the output of the mask is 0 or very small. Some scaling and/or clipping is needed to compensate for possible negative gray levels after filtering.

- 161. Laplacian digital implementation:

  $$\nabla^2 f = [f(x+1,y) + f(x-1,y) + f(x,y+1) + f(x,y-1)] - 4f(x,y)$$

  There are two definitions of the Laplacian, one the negative of the other. Accordingly, to recover the background features:

  $$g(x,y) = \begin{cases} f(x,y) - \nabla^2 f(x,y) & \text{(I) if the center coefficient of the Laplacian mask is negative} \\ f(x,y) + \nabla^2 f(x,y) & \text{(II) if the center coefficient of the Laplacian mask is positive} \end{cases}$$

- 163. Simplification: filter and recover the original part in one step:

  $$g(x,y) = f(x,y) - [f(x+1,y) + f(x-1,y) + f(x,y+1) + f(x,y-1)] + 4f(x,y) = 5f(x,y) - [f(x+1,y) + f(x-1,y) + f(x,y+1) + f(x,y-1)]$$
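
The one-step formula can be sketched as below. It clips results to [0, L−1] (one way of handling negative values after filtering) and copies border pixels; the function name and the step-edge test image are hypothetical. The output overshoots on both sides of the edge, which is what makes it look sharper.

```python
def laplacian_sharpen(img, L=256):
    """g(x,y) = 5 f(x,y) - [f(x+1,y) + f(x-1,y) + f(x,y+1) + f(x,y-1)], clipped."""
    rows, cols = len(img), len(img[0])
    out = [list(row) for row in img]
    for x in range(1, rows - 1):
        for y in range(1, cols - 1):
            g = 5 * img[x][y] - (img[x + 1][y] + img[x - 1][y]
                                 + img[x][y + 1] + img[x][y - 1])
            out[x][y] = max(0, min(L - 1, g))      # clip to the valid range
    return out

img = [[50, 50, 100, 100] for _ in range(3)]        # hypothetical step edge
print(laplacian_sharpen(img)[1])  # [50, 0, 150, 100]
```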

- 165. A highpass filter (kernel) sharpens the original image:
  - Negative values in a filter surrounding a positive center value indicate a sharpening operation.
  - The larger the negative values, the stronger the sharpening.
  - Noise is also sharpened when an image is sharpened.

  Example kernels (compared on the slide under "more sharpening"): $\begin{bmatrix} 0 & -1 & 0 \\ -1 & 5 & -1 \\ 0 & -1 & 0 \end{bmatrix}$ and $\begin{bmatrix} 1 & -2 & 1 \\ -2 & 5 & -2 \\ 1 & -2 & 1 \end{bmatrix}$.

- 166. Unsharp masking: highpass-filtered image = original − lowpass-filtered image,

  $$f_s(x,y) = f(x,y) - \bar f(x,y)$$

  where $f_s(x,y)$ denotes the sharpened image obtained by the unsharp-mask process and $\bar f(x,y)$ is a lowpass-filtered (blurred) version of f(x,y). High-boost filtering is a generalization of unsharp masking: if A ≥ 1 is a multiplication factor, the high-boost filter is given by

  $$f_{hb}(x,y) = A f(x,y) - \bar f(x,y) = (A-1) f(x,y) + f_s(x,y)$$

  that is, (A − 1) · original + original − lowpass = (A − 1) · original + highpass.
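
Unsharp masking and high-boost filtering can be sketched with a 3 × 3 mean filter as the lowpass step. The choice of lowpass filter, the border handling, and the function names are assumptions; the slide does not fix them.

```python
def box_blur(img):
    """3x3 mean filter used here as the lowpass step; border pixels are copied."""
    rows, cols = len(img), len(img[0])
    out = [[float(v) for v in row] for row in img]
    for x in range(1, rows - 1):
        for y in range(1, cols - 1):
            out[x][y] = sum(img[x + s][y + t]
                            for s in (-1, 0, 1) for t in (-1, 0, 1)) / 9
    return out

def high_boost(img, A=1.0):
    """f_hb = (A - 1) * f + f_s, where f_s = f - lowpass(f).

    A = 1 returns the unsharp-mask (highpass) image itself."""
    low = box_blur(img)
    return [[(A - 1) * f + (f - fl) for f, fl in zip(row, low_row)]
            for row, low_row in zip(img, low)]

img = [[50, 50, 100, 100] for _ in range(4)]         # hypothetical step edge
print([round(v, 1) for v in high_boost(img, A=1)[1]])  # highpass only
print([round(v, 1) for v in high_boost(img, A=2)[1]])  # original + highpass
```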

- 167. If we use the Laplacian as the image sharpener, $f_s(x,y)$ in $f_{hb}(x,y) = (A-1) f(x,y) + f_s(x,y)$ can be replaced by the Laplacian result g(x,y), giving

  $$f_{hb}(x,y) = \begin{cases} A f(x,y) - \nabla^2 f(x,y) & \text{(I)} \\ A f(x,y) + \nabla^2 f(x,y) & \text{(II)} \end{cases}$$

  where (I) applies if the center coefficient of the Laplacian mask is negative and (II) if it is positive.

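- 168. High-boost filtering. A = 1: standard highpass result. A > 1: the high-boost image looks more like the original, with a degree of edge enhancement that depends on the value of A. Masks: $\begin{bmatrix} 0 & -1 & 0 \\ -1 & A+4 & -1 \\ 0 & -1 & 0 \end{bmatrix}$ and $\begin{bmatrix} -1 & -1 & -1 \\ -1 & A+8 & -1 \\ -1 & -1 & -1 \end{bmatrix}$.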
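
The high-boost masks can be applied like any other 3 × 3 mask. This sketch leaves results unclipped and border pixels unchanged; the names and the step-edge test image are hypothetical. With A = 1 the 4-neighbor mask reduces to the 5-point Laplacian sharpening mask, and on a flat region the output is A times the input.

```python
def high_boost_mask(A, use_diagonals=False):
    """3x3 high-boost mask: center A+4 with 4-neighbors -1, or A+8 with all 8 neighbors -1."""
    if use_diagonals:
        return [[-1, -1, -1], [-1, A + 8, -1], [-1, -1, -1]]
    return [[0, -1, 0], [-1, A + 4, -1], [0, -1, 0]]

def apply_mask(img, mask):
    """Sum of products of mask and 3x3 neighborhood; borders copied, no clipping."""
    rows, cols = len(img), len(img[0])
    out = [list(row) for row in img]
    for x in range(1, rows - 1):
        for y in range(1, cols - 1):
            out[x][y] = sum(mask[s + 1][t + 1] * img[x + s][y + t]
                            for s in (-1, 0, 1) for t in (-1, 0, 1))
    return out

img = [[50, 50, 100, 100] for _ in range(3)]    # hypothetical step edge
print(apply_mask(img, high_boost_mask(1))[1])    # [50, 0, 150, 100]
print(apply_mask(img, high_boost_mask(1.5))[1])  # [50, 25.0, 200.0, 100]
```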

- 170. Steps in processing an image (figure):
  - Capture (digitize) the image.
  - Noise cleanup (e.g. median filtering).
  - Determine the content of the image (e.g. histograms).
  - Image sharpening and blurring (e.g. convolution, unsharp masking).
  - Adjust brightness/contrast/tonal range (e.g. histogram manipulations).
  - Output (print/display) the image.