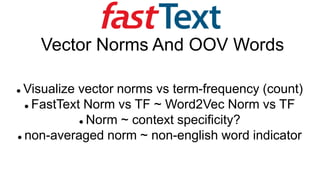

FastText Vector Norms And OOV Words

The document analyzes word embeddings from FastText and Word2Vec. It finds that FastText's standard vector norm, plotted against term frequency, shows clusters of common words, while the no-ngram norm plot has a shape similar to that of Word2Vec norms. The no-ngram norm also correlates with word significance, that is, how distinctive a word is based on its context. The document also explores using norms to identify hyponym-hypernym pairs and to split joined words using a Bayesian approach.

- 1. Vector Norms And OOV Words
  - Visualize vector norms vs. term frequency (count)
  - FastText norm vs. TF ~ Word2Vec norm vs. TF
  - Norm ~ context specificity?
  - Non-averaged norm ~ non-English word indicator

- 2. Vaclav Kosar
  - Studied physics
  - Develops software
  - Dabbles in ML
  - Works for the “Time is Ltd” startup (internal comms analytics)

- 3. Word embeddings intro
  - A word is represented by a 100-dimensional vector
  - The representation is trained or statistical
  - Word similarity is measured via cosine similarity (the angle between vectors)
  - The vector norm is the length of the vector
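The two quantities on the slide above can be illustrated with a minimal sketch. The 3-dimensional toy vectors are made up for illustration (real embeddings in the deck are 100-dimensional), and the helper names `norm` and `cosine_similarity` are not from the deck.

```python
import math

def norm(v):
    """Euclidean length of a vector."""
    return math.sqrt(sum(x * x for x in v))

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; ignores their lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (norm(a) * norm(b))

# Toy vectors (hypothetical, not real embeddings).
a = [1.0, 2.0, 2.0]
b = [3.0, 6.0, 6.0]   # same direction as a, three times longer
c = [2.0, -1.0, 0.0]  # orthogonal to a

print(norm(a), norm(b))          # lengths differ
print(cosine_similarity(a, b))   # direction is identical
print(cosine_similarity(a, c))   # no similarity
```

Cosine similarity discards the length, which is why the norm can carry separate information such as the word significance discussed on the following slides.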

- 4. Word2vec norms (Schakel 2015)
  - (Schakel 2015) “Measuring Word Significance using Distributed Representations of Words”
  - Word significance ~ context distinctiveness
  - Document term frequency vs. global TF (tf-idf)
  - word2vec norms ~ word significance (within the training corpus and a frequency band)

- 5. Word2vec norms (Schakel 2015)
  - Corpus mainly about Q Mechanics
  - Longest vectors in the high-TF bands:
    - “inflation” (v = 4.64, tf = 571)
    - “sitter” (v = 3.81, tf = 1490), from “de Sitter”
    - “holes” (v = 3.41, tf = 2465), from “black holes”
  - Figure: word vector length versus term frequency of all words in the hep-th vocabulary.

- 6. An n-gram is a subsequence within a word
  - $\mathrm{fastText}(word) = \dfrac{v_{word} + \sum_{g \in \mathrm{ngrams}(word)} v_g}{1 + |\mathrm{ngrams}(word)|}$
  - If the word is not present in the dictionary (OOV), only the n-gram vectors are used.
  - To study OOV words, this asymmetry was removed by using only the word's n-gram vectors.
  - The 10k most common English words are contrasted with the FT vocabulary, which includes dataset artifacts (non-words).
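A minimal sketch of the composition on the slide above, and of the three norms the later slides compare. It does not call the fastText library: the vectors are toy values, the `<`/`>` boundary markers and the 3 to 6 character n-gram range are assumptions, and the names `char_ngrams` and `three_norms` are hypothetical.

```python
import math

def char_ngrams(word, min_n=3, max_n=6):
    """Character n-grams of the word wrapped in '<' and '>' (assumed range 3..6)."""
    padded = f"<{word}>"
    return [padded[i:i + n]
            for n in range(min_n, max_n + 1)
            for i in range(len(padded) - n + 1)]

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def three_norms(word_vec, ngram_vecs):
    """Return the standard, no-ngram, and non-averaged n-gram norms.

    word_vec is None for an OOV word; ngram_vecs must be non-empty.
    """
    ng_sum = [sum(col) for col in zip(*ngram_vecs)]
    if word_vec is None:
        # OOV: only n-gram vectors are used, averaged over their count.
        standard = [x / len(ngram_vecs) for x in ng_sum]
        no_ngram = None
    else:
        # In vocabulary: (v_word + sum of n-gram vectors) / (1 + |ngrams|).
        standard = [(w + s) / (1 + len(ngram_vecs))
                    for w, s in zip(word_vec, ng_sum)]
        no_ngram = norm(word_vec)
    return {
        "standard_norm": norm(standard),
        "no_ngram_norm": no_ngram,   # whole-word vector only
        "ng_norm": norm(ng_sum),     # n-gram vectors only, no count averaging
    }

print(char_ngrams("cat"))

# Toy 2-dimensional vectors (hypothetical values).
norms = three_norms([3.0, 4.0], [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]])
print(norms)
```

Because `ng_norm` is not divided by the number of n-grams, it can be computed the same way for in-vocabulary and OOV words, which is the symmetry the slide asks for.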

- 7. FastText: Standard Vector Norm
  - Standard norm
  - 4 clusters with unknown meaning

- 8. FastText: No-Ngrams Norm
  - Only whole-word vectors used
  - Norm ~ word significance
  - Shape similar to word2vec

- 9. FastText: NG_Norm
  - Only n-gram vectors used
  - No n-gram count averaging
  - Common words fall in a narrow norm band

- 10. FastText: Hypo and Hypernyms
  - 67 hypo-hypernym norm pairs
  - Disregarding TF, no_ngram_norm is the most predictive
  - Filtering to pairs at most 30% apart in TF leaves 7 samples, for which the standard norm is the most predictive

  | hyper | hypo | standard_norm | no_ngram_norm | ng_norm | count |
  |---|---|---|---|---|---|
  | month | January | -25.4 | 11.5 | 25.4 | 55.6 |
  | month | February | -41.7 | 12.5 | 26.8 | 44.6 |
  | month | March | 19.6 | 7.9 | 19.6 | 62.8 |
  | month | April | 23.0 | 9.9 | 23.0 | 60.6 |
  | month | May | 110.6 | 6.4 | 14.7 | 104.7 |
  | month | June | 60.5 | 9.2 | 21.8 | 57.3 |
  | color | red | 103.4 | -2.5 | 4.6 | -15.4 |
  | color | black | -5.6 | -3.8 | -5.6 | 23.1 |
  | color | pink | 47.1 | 1.4 | 7.5 | -119.8 |
  | color | yellow | -28.8 | 1.5 | -0.3 | -111.3 |
  | color | cyan | 61.0 | 17.2 | 22.4 | -197.7 |
  | color | violet | -33.9 | 9.6 | -5.4 | -193.6 |
  | … | … | … | … | … | … |
  | average | | 2.6 | 8.1 | 7.7 | -105.1 |
  | counts | | 43.9 | 77.3 | 65.2 | |
  | counts selected | | 43.9 | 77.3 | 65.2 | |

- 11. FastText: Vocab vs Common
  - NG_Norm distributions
  - Bayes approach
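The slide above gives no details of the Bayes approach, so the following is only one possible reading, sketched under stated assumptions: the NG_Norm distributions of the common words and of the vocabulary are estimated as histograms, and Bayes' rule turns them into the probability that a given norm belongs to a common word. The norm samples, the bin layout, the 0.5 prior, and the names `histogram_likelihood` and `posterior_common` are all hypothetical.

```python
def histogram_likelihood(norms, lo, hi, bins):
    """Estimate P(norm falls in its bin | class) from sample norms."""
    width = (hi - lo) / bins
    counts = [0] * bins

    def bin_index(x):
        # Clamp to guard against float rounding at the upper edge.
        return min(int((x - lo) / width), bins - 1)

    for x in norms:
        if lo <= x < hi:
            counts[bin_index(x)] += 1

    def prob(x):
        if not (lo <= x < hi):
            return 0.0
        return counts[bin_index(x)] / len(norms)

    return prob

def posterior_common(x, p_common, p_vocab, prior_common=0.5):
    """Bayes' rule: P(common | norm bin) from the two likelihoods."""
    num = p_common(x) * prior_common
    den = num + p_vocab(x) * (1.0 - prior_common)
    return num / den if den > 0 else 0.0

# Made-up NG_Norm samples, for illustration only.
common_norms = [2.1, 2.3, 2.6, 2.8, 2.9, 3.1, 3.3, 2.7]
vocab_norms = [0.4, 1.2, 3.9, 4.4, 4.8, 4.1, 2.9, 4.6]

p_common = histogram_likelihood(common_norms, lo=0.0, hi=5.0, bins=5)
p_vocab = histogram_likelihood(vocab_norms, lo=0.0, hi=5.0, bins=5)

print(posterior_common(2.5, p_common, p_vocab))  # norm inside the common band
print(posterior_common(4.5, p_common, p_vocab))  # norm outside the common band
```

A histogram returns the same probability for every norm in a bin and zero for an empty bin, so the bin width controls how sharply the narrow band of common words is separated from the rest.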

- 12. FastText: Splitting To English Words
  - Algo:
    - Join 2 common words
    - Generate all possible splits
    - Decide looking at norms
  - 48% accuracy

  | word1 | word2 | norm1 | norm2 | prob1 | prob2 | prob |
  |---|---|---|---|---|---|---|
  | i | nflationlithium | 0 | 4.20137 | 0 | 0.000397 | 0 |
  | in | flationlithium | 0 | 4.40944 | 0 | 0.000519 | 0 |
  | inf | lationlithium | 1.88772 | 3.86235 | 0.010414 | 0.000741 | 7.721472E-06 |
  | infl | ationlithium | 2.29234 | 4.04391 | 0.053977 | 0.000428 | 2.308942E-05 |
  | infla | tionlithium | 2.24394 | 4.74456 | 0.052467 | 0 | 0 |
  | inflat | ionlithium | 2.55929 | 3.45802 | 0.048715 | 0.002442 | 0.0001189513 |
  | inflati | onlithium | 3.10228 | 3.55187 | 0.007973 | 0.001767 | 1.408828E-05 |
  | inflatio | nlithium | 3.34667 | 3.26616 | 0.003907 | 0.003159 | 1.234263E-05 |
  | inflation | lithium | 2.87083 | 2.73886 | 0.017853 | 0.035389 | 0.0006318213 |
  | inflationl | ithium | 3.36933 | 2.35156 | 0.002887 | 0.053333 | 0.0001539945 |
  | inflationli | thium | 3.73344 | 2.21766 | 0.001283 | 0.052467 | 6.730259E-05 |
  | inflationlit | hium | 4.16165 | 1.66477 | 9.6E-05 | 0.004324 | 4.139165E-07 |
  | inflationlith | ium | 4.40217 | 1.59184 | 0.000519 | 0.002212 | 1.147982E-06 |
  | inflationlithi | um | 4.71089 | 0 | 0 | 0 | 0 |
  | inflationlithiu | m | 4.91263 | 0 | 0.000213 | 0 | 0 |
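The splitting steps above can be sketched as follows. In the slide's table the `prob` column matches the product `prob1 * prob2` up to rounding, so the sketch scores each split by that product and keeps the highest one. The `prob1`/`prob2` values are copied from the table rather than computed from norms, and the names `all_splits`, `best_split`, and `SLIDE_PROBS` are hypothetical.

```python
def all_splits(joined):
    """Every way to cut a string into a non-empty left and right part."""
    return [(joined[:i], joined[i:]) for i in range(1, len(joined))]

def best_split(joined, prob):
    """Pick the split with the highest product of per-part probabilities."""
    scored = [(prob(left) * prob(right), left, right)
              for left, right in all_splits(joined)]
    return max(scored)

# (prob1, prob2) per split of "inflationlithium", in table order (from the slide).
SLIDE_PROBS = [
    (0, 0.000397), (0, 0.000519), (0.010414, 0.000741),
    (0.053977, 0.000428), (0.052467, 0), (0.048715, 0.002442),
    (0.007973, 0.001767), (0.003907, 0.003159), (0.017853, 0.035389),
    (0.002887, 0.053333), (0.001283, 0.052467), (9.6e-05, 0.004324),
    (0.000519, 0.002212), (0, 0), (0.000213, 0),
]

joined = "inflationlithium"

# Build a fragment -> probability lookup from the slide's values.
prob_table = {}
for (left, right), (p1, p2) in zip(all_splits(joined), SLIDE_PROBS):
    prob_table[left] = p1
    prob_table[right] = p2

score, left, right = best_split(joined, lambda w: prob_table.get(w, 0.0))
print(left, right, score)
```

In a full pipeline the lookup would presumably be replaced by a function that maps a fragment's n-gram norm to a probability; the slide reports 48% accuracy for its own version of the procedure.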

- 13. Conclusion
  - Visualized FastText vector norms vs. term frequency
  - Standard Norm vs. Term-Frequency plot: interesting clustering of common words
  - No-NGram Norm vs. TF is shaped like Word2Vec Norm vs. TF
  - Word significance ~ No-NGram Norm