
Advanced pipelining

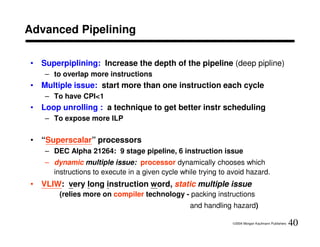

- 1. Advanced Pipelining

• Superpipelining: increase the depth of the pipeline (deeper pipeline)

– to overlap more instructions

• Multiple issue: start more than one instruction each cycle

– To have CPI<1

• Loop unrolling: a technique for better instruction scheduling

– To expose more ILP

• “Superscalar” processors

– DEC Alpha 21264: 9-stage pipeline, 6-instruction issue

– Dynamic multiple issue: the processor dynamically chooses which instructions to execute in a given cycle while trying to avoid hazards

• VLIW: very long instruction word, static multiple issue (relies more on compiler technology for packing instructions and handling hazards)
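The following minimal Python sketch of an idealized pipeline shows why multiple issue can push CPI below 1. It assumes every instruction is independent and that there are no hazards or stalls, which real code never fully achieves. The function names `ideal_cycles` and `cpi` are hypothetical. The 9-stage depth and the 6-wide issue in the loop only echo the Alpha 21264 figures above.

```python
import math


def ideal_cycles(n_instructions: int, stages: int, issue_width: int = 1) -> int:
    """Cycles to complete n independent instructions on a hazard-free pipeline.

    The first issue packet needs `stages` cycles to reach the end of the pipeline;
    after that, one packet (up to `issue_width` instructions) completes per cycle.
    """
    packets = math.ceil(n_instructions / issue_width)
    return stages + packets - 1


def cpi(cycles: int, n_instructions: int) -> float:
    """Clock cycles per instruction."""
    return cycles / n_instructions


for width in (1, 2, 6):
    cycles = ideal_cycles(1000, stages=9, issue_width=width)
    print(f"{width}-issue, 9 stages: {cycles} cycles, CPI = {cpi(cycles, 1000):.3f}")
```

With no stalls, CPI approaches 1/issue_width. In this cycle-count model a deeper pipeline only lengthens the fill time. The real payoff of superpipelining is a shorter clock cycle, which the model does not capture.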

©2004 Morgan Kaufmann Publishers 40

- 2. Advanced Pipelining

• Static multiple issue

– compiler decides multiple issue before execution

• Dynamic multiple issue

– processor decides multiple issue during execution

• Problems of multiple issue

– How to package instructions into issue slots

– How to deal with data and control hazards

• Speculation – the compiler or processor guesses the outcome of an instruction in order to remove it as a dependence when executing other instructions
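Below is a toy Python model of speculating on a branch outcome. The guessed path runs early and its results are held in a separate buffer. They are committed only if the guess proves right; otherwise they are discarded and the correct path runs from the old state. This is a minimal sketch, assuming a dictionary stands in for the register file. `addi` and `run_with_speculation` are hypothetical names, not a real API. Real processors track speculative results in dedicated buffers rather than copying the whole register file.

```python
from typing import Callable, Dict, List

Regs = Dict[str, int]
Op = Callable[[Regs], None]


def addi(dest: str, src: str, imm: int) -> Op:
    """Hypothetical helper: build an operation computing dest = src + imm."""
    def op(regs: Regs) -> None:
        regs[dest] = regs[src] + imm
    return op


def run_with_speculation(regs: Regs, predicted_taken: bool, actual_taken: bool,
                         taken_path: List[Op], fallthrough_path: List[Op]):
    """Execute the guessed path before the branch resolves, buffering its results.

    Returns (final_registers, guess_was_correct); `regs` itself is never modified.
    """
    guessed = taken_path if predicted_taken else fallthrough_path
    speculative = dict(regs)          # results stay here until the guess is checked
    for op in guessed:
        op(speculative)

    if predicted_taken == actual_taken:
        return speculative, True      # guess correct: commit the buffered results

    # Guess wrong: discard the speculative state and execute the correct path.
    recovered = dict(regs)
    for op in (taken_path if actual_taken else fallthrough_path):
        op(recovered)
    return recovered, False


start = {"$t0": 10, "$t1": 0}
taken = [addi("$t1", "$t0", 1)]
fallthrough = [addi("$t1", "$t0", -1)]
print(run_with_speculation(start, True, True, taken, fallthrough))
print(run_with_speculation(start, True, False, taken, fallthrough))
```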


- 3. Static Multiple Issue

• Issue packet

– the set of instructions that issue together in a clock cycle

• Static multiple issue (SMI) concept

– regard an issue packet as one large instruction with multiple operations

– Very Long Instruction Word (VLIW) or Explicitly Parallel Instruction Computer (EPIC), as in Intel IA-64

• Assume two instructions may be issued per clock cycle:

– 1 for an integer ALU op or branch

– 1 for a load or store
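The pairing rule above can be written as a slot check. This minimal Python sketch assumes a small, illustrative subset of MIPS opcodes for each slot. `form_packet` is a hypothetical helper. It checks only slot compatibility, not data dependences between the two instructions.

```python
# Illustrative subset of MIPS opcodes for each issue slot (an assumption, not a full list).
ALU_OR_BRANCH = {"add", "addu", "addi", "sub", "and", "or", "slt", "beq", "bne"}
DATA_TRANSFER = {"lw", "sw"}


def form_packet(first: str, second: str):
    """Place two opcodes into (ALU/branch slot, load/store slot) of one issue packet.

    Raises ValueError if both instructions would need the same slot.
    """
    for alu, mem in ((first, second), (second, first)):
        if alu in ALU_OR_BRANCH and mem in DATA_TRANSFER:
            return (alu, mem)
    raise ValueError(f"{first} and {second} cannot issue in the same packet")


print(form_packet("lw", "addu"))   # ('addu', 'lw')
```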


- 5. Static Multiple Issue

• Extra resources (to issue 2 instructions per cycle)

– Another 32 bits fetched from instruction memory each cycle

– Extra ports in the register file

– Another ALU to handle address calculation for data transfers

• Without these extra resources ⇒ structural hazards

• More ambitious compiler or hardware scheduling techniques are needed

– Loads have a use latency of 1 clock cycle in the simple five-stage pipeline

• In the two-issue pipeline, the next two instructions cannot use the load result without stalling.

– ALU instructions have no use latency in the simple five-stage pipeline

• In the two-issue pipeline they have a 1-instruction use latency (the result cannot be used by the paired instruction)
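The "extra ports in the register file" requirement can be counted directly. This minimal Python sketch assumes the slot split above and only the five MIPS opcodes used in these slides. The tables and `ports_needed` are illustrative names. In the worst case, the ALU/branch slot reads two registers and writes one. The load/store slot reads two (a store's base and data) and writes one (a load's result). The two-issue register file therefore needs 4 read ports and 2 write ports instead of 2 and 1.

```python
# Assumed register-file usage (reads, writes) of the opcodes used in these slides.
USAGE = {
    "addu": (2, 1),   # two source registers, one destination
    "addi": (1, 1),   # one source register plus an immediate
    "bne":  (2, 0),   # compares two registers, writes none
    "lw":   (1, 1),   # base register read, loaded value written
    "sw":   (2, 0),   # base register and data register are both read
}

SLOTS = {
    "alu_or_branch": ["addu", "addi", "bne"],
    "data_transfer": ["lw", "sw"],
}


def ports_needed(slots):
    """Worst-case register-file (read, write) ports for one issue packet."""
    reads = sum(max(USAGE[op][0] for op in SLOTS[s]) for s in slots)
    writes = sum(max(USAGE[op][1] for op in SLOTS[s]) for s in slots)
    return reads, writes


print(ports_needed(["alu_or_branch"]))                   # (2, 1)
print(ports_needed(["alu_or_branch", "data_transfer"]))  # (4, 2)
```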


- 6. Example: Multiple-issue Code Scheduling

• How would this loop be scheduled on a two-issue pipeline for MIPS? Reorder the instructions to avoid as many pipeline stalls as possible.

Loop: lw $t0, 0($s1)

addu $t0, $t0, $s2

sw $t0, 0($s1)

addi $s1, $s1, -4

bne $s1, $zero, Loop

• Ans: 4 clocks per loop iteration

CPI = 4/5 = 0.8 (5 instructions in 4 clocks, versus a best case of 0.5)

| | ALU or branch instruction | Data transfer instruction | Clock cycle |
|---|---|---|---|
| Loop: | | lw $t0, 0($s1) | 1 |
| | addi $s1, $s1, -4 | | 2 |
| | addu $t0, $t0, $s2 | | 3 |
| | bne $s1, $zero, Loop | sw $t0, 4($s1) | 4 |
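The schedule can be checked against the two-issue use latencies stated for this pipeline: a load result is usable two cycles later, and an ALU result one cycle later. This minimal Python sketch assumes a hypothetical encoding of each instruction as (opcode, destination, sources). `check_schedule` is an illustrative name. The checker models only read-after-write timing and slot types.

```python
LOAD_USE_LATENCY = 2   # a load issued in cycle c can feed instructions issued in c + 2 or later
ALU_USE_LATENCY = 1    # an ALU result cannot be read by the instruction paired with it
ALU_OR_BRANCH = {"addu", "addi", "bne"}
DATA_TRANSFER = {"lw", "sw"}


def check_schedule(packets):
    """Check a two-issue schedule and return its CPI.

    packets: one (alu_or_branch, data_transfer) pair per clock cycle; each entry is
    None or (opcode, destination register or None, [source registers]).
    Raises ValueError on a slot mismatch or a read that comes too early.
    """
    ready = {}    # register -> first cycle in which its newest value may be read
    issued = 0
    for cycle, (alu, mem) in enumerate(packets, start=1):
        for instr, allowed in ((alu, ALU_OR_BRANCH), (mem, DATA_TRANSFER)):
            if instr is None:
                continue
            op, _, srcs = instr
            if op not in allowed:
                raise ValueError(f"cycle {cycle}: {op} is in the wrong slot")
            for reg in srcs:
                if ready.get(reg, 0) > cycle:
                    raise ValueError(f"cycle {cycle}: {op} reads {reg} too early")
            issued += 1
        # Both instructions of a packet read their operands before either one writes.
        for instr in (alu, mem):
            if instr is not None and instr[1] is not None:
                op, dest, _ = instr
                latency = LOAD_USE_LATENCY if op == "lw" else ALU_USE_LATENCY
                ready[dest] = cycle + latency
    return len(packets) / issued


schedule = [
    (None, ("lw", "$t0", ["$s1"])),
    (("addi", "$s1", ["$s1"]), None),
    (("addu", "$t0", ["$t0", "$s2"]), None),
    (("bne", None, ["$s1", "$zero"]), ("sw", None, ["$t0", "$s1"])),
]
print(check_schedule(schedule))   # 0.8
```

Moving addu up to cycle 2 would make the checker raise, because it would read $t0 only one cycle after the load. The checker does not verify that the sw offset was changed from 0 to 4. That change compensates for addi having already decremented $s1.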


- 7. Example: Loop Unrolling for Multiple-issue Pipelines

Loop unrolling:

• multiple copies of the loop body are made, and instructions from different iterations are scheduled together

• Register renaming: using a different register for each copy ($t0, $t1, $t2, $t3) removes antidependences (name dependences)

Ex. Assume the loop index is a multiple of four.

Original loop:

Loop: lw $t0, 0($s1)

addu $t0, $t0, $s2

sw $t0, 0($s1)

addi $s1, $s1, -4

bne $s1, $zero, Loop

Unrolled and scheduled on the two-issue pipeline:

| | ALU or branch instruction | Data transfer instruction | Clock cycle |
|---|---|---|---|
| Loop: | addi $s1, $s1, -16 | lw $t0, 0($s1) | 1 |
| | | lw $t1, 12($s1) | 2 |
| | addu $t0, $t0, $s2 | lw $t2, 8($s1) | 3 |
| | addu $t1, $t1, $s2 | lw $t3, 4($s1) | 4 |
| | addu $t2, $t2, $s2 | sw $t0, 16($s1) | 5 |
| | addu $t3, $t3, $s2 | sw $t1, 12($s1) | 6 |
| | | sw $t2, 8($s1) | 7 |
| | bne $s1, $zero, Loop | sw $t3, 4($s1) | 8 |

• Ans:

– 8/4 = 2 clocks per iteration

– CPI = 8/14 = 0.57 (14 instructions in 8 clocks)
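The unrolled schedule's offsets (16, 12, 8, 4 after the early `addi $s1, $s1, -16`) must touch the same words as the original loop. This minimal Python sketch checks that by running both loops on the same data. It assumes a dictionary keyed by byte address stands in for memory and ignores 32-bit wraparound of addu. `original_loop` and `unrolled_loop` are hypothetical names, and the element count must be a multiple of four, as the slide states.

```python
def original_loop(mem, s1, s2):
    """The five-instruction loop, one element per pass (a do-while, like bne)."""
    while True:
        t0 = mem[s1]            # lw   $t0, 0($s1)
        t0 = t0 + s2            # addu $t0, $t0, $s2
        mem[s1] = t0            # sw   $t0, 0($s1)
        s1 = s1 - 4             # addi $s1, $s1, -4
        if s1 == 0:             # bne  $s1, $zero, Loop
            break


def unrolled_loop(mem, s1, s2):
    """The unrolled schedule: four elements per pass, renamed to $t0..$t3."""
    while True:
        old_s1 = s1
        s1 = s1 - 16            # addi $s1, $s1, -16 (cycle 1) ...
        t0 = mem[old_s1]        # ... the paired lw $t0, 0($s1) still sees the old $s1
        t1 = mem[s1 + 12]       # lw $t1, 12($s1)
        t2 = mem[s1 + 8]        # lw $t2, 8($s1)
        t3 = mem[s1 + 4]        # lw $t3, 4($s1)
        t0, t1, t2, t3 = t0 + s2, t1 + s2, t2 + s2, t3 + s2   # the four addu
        mem[s1 + 16] = t0       # sw $t0, 16($s1), i.e. the old $s1
        mem[s1 + 12] = t1       # sw $t1, 12($s1)
        mem[s1 + 8] = t2        # sw $t2, 8($s1)
        mem[s1 + 4] = t3        # sw $t3, 4($s1)
        if s1 == 0:             # bne $s1, $zero, Loop
            break


# Eight words at byte addresses 4..32; $s1 starts at the highest one.
a = {4 * i: i for i in range(1, 9)}
b = dict(a)
original_loop(a, 32, 100)
unrolled_loop(b, 32, 100)
print(a == b)   # True
```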


- 8. The BIG Picture

• Both pipelining and multiple-issue execution increase peak instruction throughput.

• Longer pipelines and wider multiple issue put even more pressure on the compiler to deliver on the performance potential of the hardware.

• Hardware designers must ensure correct execution of all instruction sequences.

• Compiler writers must understand the pipeline to generate appropriate code and achieve the best performance.


- 9. Dynamic Pipeline Scheduling

• Superscalar processor – the pipeline is divided into three major units

1. An instruction fetch and decode unit:

– fetches instructions, decodes them, and sends each instruction to the corresponding functional unit

2. Functional units (FUs):

– Reservation stations: each FU has buffers that hold the operands and the operation

– Once the buffer contains all its operands and the functional unit is ready to execute, the result is calculated.

3. A commit unit:

– decides when it is safe to put the result into the register file or memory


- 10. The Dynamically scheduled Pipeline

Figure: the dynamically scheduled pipeline

• Instruction fetch and decode unit: in-order issue

• Reservation stations, one in front of each functional unit

• Functional units (integer, integer, …, floating point, load/store): out-of-order execute

• Commit unit: in-order commit
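This toy Python timing model illustrates the pattern of in-order issue, out-of-order execute, and in-order commit. It is a minimal sketch under stated assumptions: one instruction issues per cycle, functional units are unlimited, and results reach waiting reservation stations the cycle after the producer finishes. At most one instruction commits per cycle. Only read-after-write dependences delay execution, since reservation stations hold copies of their operands. The 5-cycle load latency (standing in for a cache miss) and the names `Timing` and `dynamic_schedule` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Timing:
    text: str
    issue: int    # cycle the instruction enters a reservation station (in order)
    start: int    # first execute cycle, once all operands are available
    finish: int   # last execute cycle
    commit: int   # cycle the result is written to the register file (in order)


def dynamic_schedule(program):
    """program: list of (text, dest register or None, [source registers], latency)."""
    last_writer = {}   # register -> index of the latest earlier instruction writing it
    timings = []
    for i, (text, dest, srcs, latency) in enumerate(program):
        issue = i + 1                                           # in-order issue
        operand_ready = [timings[last_writer[r]].finish + 1
                         for r in srcs if r in last_writer]
        start = max([issue + 1] + operand_ready)                # waits in its station
        finish = start + latency - 1
        previous_commit = timings[-1].commit if timings else 0
        commit = max(finish + 1, previous_commit + 1)           # in-order commit
        timings.append(Timing(text, issue, start, finish, commit))
        if dest is not None:
            last_writer[dest] = i
    return timings


program = [
    ("lw   $t0, 0($s1)", "$t0", ["$s1"], 5),           # assumed cache miss: 5 cycles
    ("addu $t1, $t0, $s2", "$t1", ["$t0", "$s2"], 1),  # depends on the load
    ("addi $s1, $s1, -4", "$s1", ["$s1"], 1),          # independent of both
]
for t in dynamic_schedule(program):
    print(f"{t.text:20} issue {t.issue}  exec {t.start}-{t.finish}  commit {t.commit}")
```

In this run, the addi finishes executing in cycle 4, before the stalled load (cycle 6) and the dependent addu (cycle 7). It still commits last (cycle 9), after the load (7) and the addu (8).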


- 11. The Dynamically scheduled Pipeline

• Motivations for dynamic scheduling:

– Not all stalls are predictable (e.g., cache misses; see Ch. 7).

– With dynamic branch prediction, the execution order of instructions cannot be known at compile time.

– Pipeline latency and issue width change from one implementation to another.

Dynamic scheduling hides the differences among multiple hardware implementations of the same instruction set.

Old code benefits from a new implementation without the need for recompilation.
