Alleviating Manual Feature Engineering for Part-of-Speech Tagging of Twitter Microposts using Distributed Word Representations

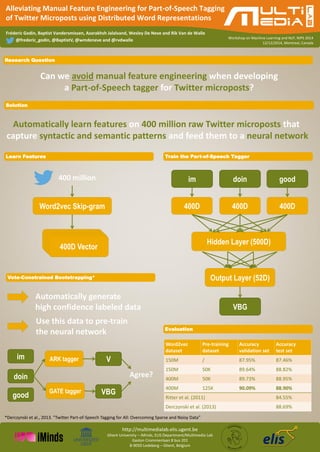

Paper presented at the NIPS 2014 Workshop on Modern Machine Learning and Natural Language Processing. Many natural language processing algorithms rely on manual feature engineering. In this paper, we show that state-of-the-art part-of-speech tagging of Twitter microposts is achievable with a neural network that uses only automatically inferred word embeddings as features. By pre-training the network on a large set of automatically labeled Twitter microposts to initialize its weights, we reach an accuracy of 88.9% when tagging Twitter microposts with Penn Treebank tags.
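
As a rough illustration of the idea (word embeddings as the only features, fed to a neural network), the forward pass of such a tagger might look like the sketch below. The layer sizes (400D embeddings, 500D hidden layer, 52D output) are the ones shown on the poster. Everything else is an assumption made for this example: the window of three words, the concatenation of their vectors, the tanh activation, and the random numbers standing in for trained weights and real word2vec vectors.

```python
import math
import random

EMBED_DIM = 400   # word vector size shown on the poster
HIDDEN_DIM = 500  # hidden layer size shown on the poster
NUM_TAGS = 52     # output layer size shown on the poster
WINDOW = 3        # assumption: number of words fed to the network at once


def init_matrix(rows, cols, rng):
    """Small random weights; a stand-in for trained (or pre-trained) weights."""
    return [[rng.uniform(-0.01, 0.01) for _ in range(cols)] for _ in range(rows)]


def affine(weights, bias, x):
    """Compute W x + b for a list-of-rows matrix W."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def softmax(z):
    """Numerically stable softmax over a list of scores."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def tag_distribution(window_vectors, params):
    """Concatenate the window's embeddings (assumption) and apply one hidden layer."""
    x = [v for vec in window_vectors for v in vec]
    hidden = [math.tanh(h) for h in affine(params["W1"], params["b1"], x)]
    return softmax(affine(params["W2"], params["b2"], hidden))


rng = random.Random(0)
params = {
    "W1": init_matrix(HIDDEN_DIM, WINDOW * EMBED_DIM, rng),
    "b1": [0.0] * HIDDEN_DIM,
    "W2": init_matrix(NUM_TAGS, HIDDEN_DIM, rng),
    "b2": [0.0] * NUM_TAGS,
}

# Random vectors stand in for the embeddings of "im", "doin" and "good".
window = [[rng.uniform(-1, 1) for _ in range(EMBED_DIM)] for _ in range(WINDOW)]
probs = tag_distribution(window, params)
predicted_tag_index = max(range(NUM_TAGS), key=probs.__getitem__)
```

With untrained weights the predicted index is meaningless; the point is only that no hand-crafted feature (suffixes, capitalization, gazetteers) enters the computation.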

- 1. Alleviating Manual Feature Engineering for Part-of-Speech Tagging of Twitter Microposts using Distributed Word Representations

Fréderic Godin, Baptist Vandersmissen, Azarakhsh Jalalvand, Wesley De Neve and Rik Van de Walle (@frederic_godin, @BaptistV, @wmdeneve and @rvdwalle)

Ghent University – iMinds, ELIS Department/Multimedia Lab, Gaston Crommenlaan 8 bus 201, B-9050 Ledeberg – Ghent, Belgium. http://multimedialab.elis.ugent.be

Workshop on Machine Learning and NLP, NIPS 2014. 12/12/2014, Montreal, Canada.

Research Question: Can we avoid manual feature engineering when developing a Part-of-Speech tagger for Twitter microposts?

Solution: Automatically learn features on 400 million raw Twitter microposts that capture syntactic and semantic patterns and feed them to a neural network.

Learn Features: [Figure: 400 million microposts → Word2vec Skip-gram → 400D vector]

Train the Part-of-Speech Tagger: [Figure: 400D word vectors → Hidden Layer (500D) → Output Layer (52D); example input "im doin good", example output tag VBG]

Vote-Constrained Bootstrapping*: [Figure: "im doin good" is tagged by the ARK tagger and the GATE tagger (example tags VBG and V); do they agree?] Automatically generate high confidence labeled data. Use this data to pre-train the neural network.

*Derczynski et al., 2013. "Twitter Part-of-Speech Tagging for All: Overcoming Sparse and Noisy Data"

Evaluation:

| Word2vec dataset | Pre-training dataset | Accuracy (validation set) | Accuracy (test set) |
|---|---|---|---|
| 150M | none | 87.95% | 87.46% |
| 150M | 50K | 89.64% | 88.82% |
| 400M | 50K | 89.73% | 88.95% |
| 400M | 125K | 90.09% | 88.90% |

Accuracy reported for prior work: Ritter et al. (2011) 84.55%; Derczynski et al. (2013) 88.69%.
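
The vote-constrained bootstrapping step (two taggers label the same micropost, and the labels are kept only when they agree) can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the two taggers are hard-coded stubs with made-up outputs rather than the real ARK and GATE taggers, the tag mapping `COARSE_OF` is a hypothetical fragment, and the rule of keeping a micropost only when every token agrees is an assumption.

```python
# Hypothetical, partial mapping from fine-grained tags to coarse tags,
# needed because the two taggers are assumed to use different tagsets.
COARSE_OF = {"VBG": "V", "VB": "V", "JJ": "A", "NN": "N", "PRP": "O"}


def agree(fine_tag, coarse_tag):
    """Two tags agree when the fine tag maps onto the coarse tag."""
    return COARSE_OF.get(fine_tag) == coarse_tag


def high_confidence(tweets, fine_tagger, coarse_tagger):
    """Keep a micropost only if both taggers agree on every token (assumption)."""
    kept = []
    for tokens in tweets:
        fine = fine_tagger(tokens)
        coarse = coarse_tagger(tokens)
        if all(agree(f, c) for f, c in zip(fine, coarse)):
            kept.append(list(zip(tokens, fine)))
    return kept


# Stub taggers with invented outputs, standing in for real tagging tools.
FINE_STUB = {"im": "PRP", "doin": "VBG", "good": "JJ", "game": "NN"}
COARSE_STUB = {"im": "O", "doin": "V", "good": "A", "game": "V"}


def fine_tagger(tokens):
    return [FINE_STUB[t] for t in tokens]


def coarse_tagger(tokens):
    return [COARSE_STUB[t] for t in tokens]


tweets = [["im", "doin", "good"], ["good", "game"]]
kept = high_confidence(tweets, fine_tagger, coarse_tagger)
```

Here the second micropost is dropped because the stubs disagree on "game" (NN maps to N, but the coarse stub says V). The surviving, automatically labeled microposts play the role of the pre-training data described on the poster.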