Introduction to data quality management (BVB KVB FDM-KompetenzPool, 2021)


Presented at BVB KVB FDM-KompetenzPool, 2021


Related content

Similar to Introduction to data quality management (BVB KVB FDM-KompetenzPool, 2021)

Presentation ADEQUATe Project: Workshop on Quality Assessment and Improvement...

Better Hackathon 2020 - Fraunhofer IAIS - Semantic geo-clustering with SANSA

Linked Open Data Principles, benefits of LOD for sustainable development

Implementing an efficient data governance and security strategy with the ...

How is data governance like an amusement park?

Marvin Platform – Empowering Machine Learning teams

Technical and organizational obstacles when introducing Data in Motion to you...

Advanced Project Data Analytics for Improved Project Delivery

More from Péter Király

Requirements of DARIAH community for a Dataverse repository (SSHOC 2020)

Empirical evaluation of library catalogues (SWIB 2019)

GRO.data - Dataverse in Göttingen (Dataverse Europe 2020)

Incubating Göttingen Cultural Analytics Alliance (SUB 2021)

Continuous quality assessment for MARC21 catalogues (MINI ELAG 2021)

Validating JSON, XML and CSV data with SHACL-like constraints (DINI-KIM 2022)

FRBR a book history perspective (Bibliodata WG 2022)

GRO.data - Dataverse in Göttingen (Magdeburg Coffee Lecture, 2022)

Understanding, extracting and enhancing catalogue data (CE Book history works...

Measuring cultural heritage metadata quality (Semantics 2017)

Measuring Metadata Quality in Europeana (ADOCHS 2017)

Evaluating Data Quality in Europeana: Metrics for Multilinguality (MTSR 2018)


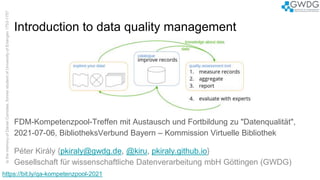

- 1. Introduction to data quality management
  - FDM-Kompetenzpool meeting with exchange and training on "data quality", 2021-07-06, BibliotheksVerbund Bayern – Kommission Virtuelle Bibliothek
  - Péter Király {pkiraly@gwdg.de, @kiru, pkiraly.github.io}, Gesellschaft für wissenschaftliche Datenverarbeitung mbH Göttingen (GWDG)
  - https://bit.ly/qa-kompetenzpool-2021

- 3. Top 20 patterns of the ‘date’ field in the MoMA collection, by Harald Klinke (LMU München): https://twitter.com/HxxxKxxx/status/1066805548866289664
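The slide does not say how the patterns were computed. One common way to profile a free-text field such as ‘date’ is to replace every digit with `9` and every letter with `a`, then count the resulting shapes. The sketch below assumes that approach; the function names and the sample values are hypothetical.

```python
import re
from collections import Counter


def value_pattern(value: str) -> str:
    """Reduce a value to its shape: digits become '9', letters become 'a'."""
    shape = re.sub(r"\d", "9", value)
    # [^\W\d_] matches word characters that are neither digits nor '_', i.e. letters
    return re.sub(r"[^\W\d_]", "a", shape)


def top_patterns(values, n=20):
    """Return the n most frequent shapes as (pattern, count) pairs."""
    return Counter(value_pattern(v) for v in values).most_common(n)


# Hypothetical sample values for a 'date' field
dates = ["1905", "1905-1910", "c. 1920", "1931", "n.d.", "1962–63"]

for pattern, count in top_patterns(dates):
    print(f"{count:>3}  {pattern}")
```

The frequent shapes show the dominant conventions; the rare ones at the bottom of the list are often worth a closer look.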

- 4. Generic title and bad thumbnail. More examples in the Report and Recommendations from the Task Force on Metadata Quality (2015).

- 5. Data quality management lifecycle (diagram)
  - quality assessment: 1. measure records, 2. aggregate, 3. report, 4. evaluate with experts
  - other elements of the cycle: catalogue, data, knowledge about data, remediation plan, improve records
  - explore your data!
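The first three steps of the quality assessment (measure records, aggregate, report) can be sketched as three small functions; step 4, the evaluation with experts, stays a human task. This is a minimal illustration, not the author's implementation: the two problem checks and the sample records are hypothetical.

```python
from collections import Counter

# Hypothetical checks for known problems: each returns True if a record has the problem
CHECKS = {
    "generic title": lambda r: r.get("title", "").strip().lower() in {"untitled", "photo"},
    "missing date": lambda r: not r.get("date"),
}


def measure(record: dict) -> set:
    """Step 1: measure one record and return the names of the problems found."""
    return {name for name, check in CHECKS.items() if check(record)}


def aggregate(measurements: list) -> Counter:
    """Step 2: count how many records show each problem."""
    counts = Counter()
    for problems in measurements:
        counts.update(problems)
    return counts


def report(counts: Counter, total: int) -> str:
    """Step 3: render a plain-text report for the experts (step 4)."""
    return "\n".join(f"{name}: {counts[name]}/{total} records" for name in CHECKS)


records = [
    {"title": "Untitled", "date": ""},
    {"title": "Map of Bavaria", "date": "1850"},
    {"title": "Photo"},
]
counts = aggregate([measure(r) for r in records])
print(report(counts, len(records)))
```

Keeping measurement per record and aggregation separate means the per-record results can still be used later to select the records a remediation plan should target.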

- 6. Quality and ‘fitness for purpose’: ‘We know it when we see it, but conveying the full bundle of assumptions and experience that allow us to identify it is a different matter.’

- 7. Metadata quality
  - purpose of metadata: to access content
  - no (or bad) metadata: no access to data, hence no data usage
  - more explanation: Data on the Web Best Practices, W3C Recommendation, https://www.w3.org/TR/dwbp/

- 8. The problem statement, improved
  - there are “good” and “bad” metadata records
  - we would like to achieve metrics that rate records against functional requirements as good, acceptable or bad
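One way to turn functional requirements into a good / acceptable / bad rating is to check each requirement separately and map the share of satisfied requirements onto bands. Both the requirements (modelled on the generic-title and thumbnail examples of slide 4) and the thresholds 0.8 and 0.5 are hypothetical and would have to be agreed with domain experts.

```python
# Hypothetical functional requirements: name -> check on a record
REQUIREMENTS = {
    "findable by a specific title": lambda r: r.get("title", "").lower() not in {"", "untitled"},
    "displayable with a thumbnail": lambda r: bool(r.get("thumbnail")),
    "filterable by date": lambda r: bool(r.get("date")),
}


def requirement_score(record: dict) -> float:
    """Share of functional requirements that the record satisfies (0.0 to 1.0)."""
    met = sum(1 for check in REQUIREMENTS.values() if check(record))
    return met / len(REQUIREMENTS)


def rate(score: float, good: float = 0.8, acceptable: float = 0.5) -> str:
    """Map a score onto the three bands; the thresholds are assumptions."""
    if score >= good:
        return "good"
    if score >= acceptable:
        return "acceptable"
    return "bad"


print(rate(requirement_score({"title": "Untitled", "date": "1850"})))
```

Scoring per requirement, rather than per field, keeps the rating tied to what users can actually do with a record.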

- 9. General metrics
  - completeness: number of metadata elements filled out
  - accuracy: data correspond to the resource that is being described
  - consistency: values comply with what is defined by the metadata scheme
  - objectiveness: values describe the resource in an unbiased way
  - appropriateness: values facilitate the deployment of search
  - correctness: syntactically and grammatically correct language
  - Bruce and Hillman (2004); Ochoa and Duval (2009); Palavitsinis (2014)
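Two of these metrics can be computed from the record alone. The sketch below assumes a small hypothetical schema in which some fields have a controlled vocabulary. Completeness is normalised to the share of schema fields that are filled out, and consistency is read here as the share of controlled fields whose values are all allowed by the scheme. Accuracy or objectiveness, by contrast, need a comparison with the described resource or human judgement.

```python
# Hypothetical schema: field name -> allowed values (None means free text)
SCHEMA = {
    "title": None,
    "creator": None,
    "type": {"text", "image", "sound", "video"},
    "language": {"de", "en", "fr", "hu"},
}


def is_filled(value) -> bool:
    """A field is filled if it holds a non-blank string or a list containing one."""
    if isinstance(value, str):
        return value.strip() != ""
    if isinstance(value, list):
        return any(is_filled(v) for v in value)
    return value is not None


def as_list(value) -> list:
    """Treat single values and repeated (list) values uniformly."""
    return value if isinstance(value, list) else [value]


def completeness(record: dict) -> float:
    """Share of schema fields that are filled out."""
    return sum(is_filled(record.get(f)) for f in SCHEMA) / len(SCHEMA)


def consistency(record: dict) -> float:
    """Share of filled, controlled fields whose values all come from the vocabulary."""
    checked = [f for f, allowed in SCHEMA.items()
               if allowed is not None and is_filled(record.get(f))]
    if not checked:
        return 1.0
    ok = sum(all(v in SCHEMA[f] for v in as_list(record[f])) for f in checked)
    return ok / len(checked)


record = {"title": "Map of Bavaria", "type": "image", "language": ["de", "Deutsch"]}
print(completeness(record), consistency(record))  # 0.75 0.5
```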

- 10. Linked data dimensions and metrics
  - accessibility: availability, licensing, interlinking, security, performance
  - intrinsic: syntactic validity, semantic accuracy, consistency, conciseness, completeness
  - contextual: relevancy, trustworthiness, understandability, timeliness
  - representational: representational conciseness, interoperability, interpretability, versatility
  - Stvilia et al. (2007); Zaveri et al. (2015)

- 11. FAIR metrics
  - good metrics are clear, realistic, discriminating, measurable and universal
  - http://fairmetrics.org, https://github.com/FAIRMetrics/Metrics/blob/master/ALL.pdf
  - tool: F-UJI (FAIRsFAIR Research Data Object Assessment Service), https://www.fairsfair.eu/f-uji-automated-fair-data-assessment-tool, https://github.com/pangaea-data-publisher/fuji

- 12. F1 – Identifier Uniqueness
  - What is being measured? Whether there is a scheme to uniquely identify the digital resource.
  - How do we measure it? An identifier scheme is valid if and only if it is described in a repository that can register and present such identifier schemes (e.g. fairsharing.org).
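A full F1 test would look the identifier scheme up in a registry such as fairsharing.org. The sketch below replaces that lookup with a small local table of hypothetical, simplified patterns, so it only shows the shape of the check, not the FAIR Metrics reference implementation or F-UJI.

```python
import re
from typing import Optional

# Hypothetical local stand-in for a scheme registry; the patterns are simplified
REGISTERED_SCHEMES = {
    "doi": re.compile(r"^10\.\d{4,9}/\S+$"),
    "orcid": re.compile(r"^\d{4}-\d{4}-\d{4}-\d{3}[\dX]$"),
    "handle": re.compile(r"^\d+(\.\d+)*/\S+$"),
}


def identifier_scheme(identifier: str) -> Optional[str]:
    """Return the first known scheme the identifier conforms to, or None."""
    for name, pattern in REGISTERED_SCHEMES.items():
        if pattern.match(identifier):
            return name
    return None


def f1_passes(identifier: str) -> bool:
    """F1 in this sketch: the identifier follows a scheme listed in the registry."""
    return identifier_scheme(identifier) is not None


print(identifier_scheme("10.1515/9783110657807-020"))  # doi
```

The order of the table matters: DOIs are a kind of Handle, so the more specific scheme is listed first.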

- 13. RDFUnit, SHACL and ShEx
  - Linked Data is based on the Open World assumption
  - no “record”, no clear boundaries
  - RDF Data Shapes: reinventing the schema
  - ShEx (Shape Expressions, https://shex.io) and SHACL (Shapes Constraint Language, https://www.w3.org/TR/shacl/)
  - finding individual data issues

- 14. Core constraints
  - Cardinality: minCount, maxCount
  - Types of values: class, datatype, nodeKind
  - Shapes: node, property, in, hasValue
  - Range of values: minInclusive, maxInclusive, minExclusive, maxExclusive
  - String based: minLength, maxLength, pattern, stem, uniqueLang
  - Logical constraints: not, and, or, xone
  - Closed shapes: closed, ignoredProperties
  - Property pair constraints: equals, disjoint, lessThan, lessThanOrEquals
  - Non-validating constraints: name, value, defaultValue
  - Qualified shapes: qualifiedValueShape, qualifiedMinCount, qualifiedMaxCount
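To show how a few of these constraint names translate into checks, here is a minimal validator for flat JSON-like records (not RDF). It borrows the names minCount, maxCount, minLength, pattern and in from SHACL, but it is a hypothetical sketch and not a SHACL processor; the shapes and the record are invented for illustration. As in SHACL, pattern is applied as a regular-expression search rather than a full match.

```python
import re

# Hypothetical shapes: each constrains one field, using SHACL-like constraint names
SHAPES = [
    {"path": "title", "minCount": 1, "maxCount": 1, "minLength": 3},
    {"path": "date", "maxCount": 1, "pattern": r"^\d{4}(-\d{2}(-\d{2})?)?$"},
    {"path": "type", "minCount": 1, "in": {"text", "image", "sound"}},
]


def validate(record: dict, shape: dict) -> list:
    """Return human-readable violations of one shape by one record."""
    path = shape["path"]
    raw = record.get(path)
    if raw is None:
        values = []
    elif isinstance(raw, list):
        values = raw
    else:
        values = [raw]
    errors = []
    if len(values) < shape.get("minCount", 0):
        errors.append(f"{path}: fewer than {shape['minCount']} value(s)")
    if "maxCount" in shape and len(values) > shape["maxCount"]:
        errors.append(f"{path}: more than {shape['maxCount']} value(s)")
    for value in values:
        text = str(value)
        if "minLength" in shape and len(text) < shape["minLength"]:
            errors.append(f"{path}: {text!r} is shorter than {shape['minLength']}")
        if "pattern" in shape and not re.search(shape["pattern"], text):
            errors.append(f"{path}: {text!r} does not match the pattern")
        if "in" in shape and value not in shape["in"]:
            errors.append(f"{path}: {text!r} is not an allowed value")
    return errors


record = {"title": "Ok", "date": ["1850", "c. 1850"], "type": "map"}
for shape in SHAPES:
    for error in validate(record, shape):
        print(error)
```

Each violation names the field and the offending value, which fits the goal of finding individual data issues rather than only a global score.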

- 15. The Quartz guide to bad data (2015)
  - by Christopher Groskopf
  - a guide for data journalists on how to recognize data issues
  - a practical guide, not an academic paper
  - take-away messages:
    - be sceptical about the data
    - check it with exploratory data analysis
    - check it early, check it often
  - https://github.com/Quartz/bad-data-guide, https://qz.com/572338/the-quartz-guide-to-bad-data/

- 16. Who should solve which issues?
  - Issues that your source should solve
    - Values are missing
    - Zeros replace missing values
  - Issues that you should solve
    - Sample is biased
    - Data has been manually edited
  - Issues a third-party expert should help you solve
    - Author is untrustworthy
    - Collection process is opaque
  - Issues a programmer should help you solve
    - Data are aggregated to the wrong categories or geographies
    - Data are in scanned documents
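The first two issues in the list, missing values and zeros standing in for missing values, can be spotted with a simple column profile before deciding whom to ask for a fix. The function below is a hypothetical sketch; the 20 % threshold for flagging suspicious zeros is an arbitrary assumption, and a high share of zeros is only a hint that has to be confirmed with the source.

```python
def profile_column(rows: list, column: str, zero_threshold: float = 0.2) -> dict:
    """Count missing values and numeric zeros in one column of tabular data."""
    values = [row.get(column) for row in rows]
    total = len(values)
    missing = sum(1 for v in values if v is None or str(v).strip() == "")
    zeros = 0
    for v in values:
        try:
            if v is not None and float(v) == 0:
                zeros += 1
        except (TypeError, ValueError):
            pass  # non-numeric values cannot be zeros
    return {
        "total": total,
        "missing": missing,
        "zeros": zeros,
        # a high share of zeros may mean that zeros replace missing values
        "zeros_suspicious": total > 0 and zeros / total > zero_threshold,
    }


rows = [{"year": "1850"}, {"year": "0"}, {"year": ""}, {"year": 0}, {}]
print(profile_column(rows, "year"))
```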

- 19. Hypothesis: by measuring structural elements we can approximate metadata record quality (≃ metadata smell)

- 20. Organisational proposal: Europeana Data Quality Committee (elsewhere: DDB, British Library, Digital Library Federation, DPLA ...)
  - analysing/revising the metadata schema
  - functional requirement analysis
  - problem catalog
  - multilinguality

- 21. Technical proposal: “Metadata Quality Assessment Framework”, a generic tool for measuring metadata quality
  - adaptable to different metadata schemes
  - scalable (to Big Data)
  - understandable reports for data curators
  - open source

- 22. What to measure?
  - Structural and semantic features (generic metrics): completeness, cardinality, uniqueness, length, dictionary entry, data type conformance, multilinguality
  - Functional requirement analysis / discovery scenarios: requirements of the most important functions
  - Problem catalog: known metadata problems
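Several of the generic metrics can be computed per field. In the sketch below, multilingual values are assumed to be (text, language tag) pairs, and uniqueness is simplified to the share of a record's values that occur only once in the whole collection. These representations and the sample records are hypothetical, and the framework itself may define the metrics differently.

```python
from collections import Counter

# Hypothetical records: each title value is a (text, language tag) pair
records = [
    {"id": "r1", "title": [("Karte von Bayern", "de"), ("Map of Bavaria", "en")]},
    {"id": "r2", "title": [("Untitled", None)]},
    {"id": "r3", "title": [("Untitled", None)]},
]

# How often each title text occurs in the whole collection
title_counts = Counter(text for r in records for text, _ in r.get("title", []))


def field_metrics(record: dict, counts: Counter, field: str = "title") -> dict:
    """Cardinality, value lengths, uniqueness and language tags of one field."""
    values = record.get(field, [])
    unique = sum(1 for text, _ in values if counts[text] == 1)
    return {
        "cardinality": len(values),
        "lengths": [len(text) for text, _ in values],
        "uniqueness": unique / len(values) if values else 0.0,
        "languages": sorted({lang for _, lang in values if lang}),
    }


for r in records:
    print(r["id"], field_metrics(r, title_counts))
```

The collection-wide counts have to be built before any record is scored, which is one reason a scalable framework separates a counting pass from the per-record measurement.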

- 23. Demos
  - Europeana metadata quality dashboard: https://rnd-2.eanadev.org/europeana-qa/
  - Union catalogue of BibliotheksVerbund Bayern: http://134.76.17.95/bvb/

- 24. Data quality management lifecycle (the diagram from slide 5, shown again)

- 25. Let’s cooperate!
  - https://github.com/pkiraly/metadata-qa-api
  - https://github.com/pkiraly/metadata-qa-marc
  - http://pkiraly.github.io
  - https://twitter.com/kiru
  - peter.kiraly@gwdg.de
  - Király (2019): Measuring metadata quality. doi:10.13140/RG.2.2.33177.77920
  - Király–Brase (2021): Qualitätsmanagement. In: Praxishandbuch Forschungsdatenmanagement. doi:10.1515/9783110657807-020