Scale-Out Block Storage

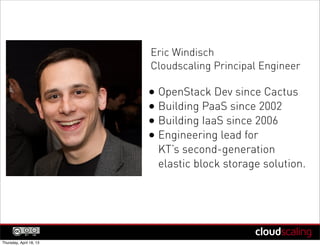

- 1. Eric Windisch, Principal Engineer, Cloudscaling • OpenStack dev since Cactus • Building PaaS since 2002 • Building IaaS since 2006 • Engineering lead for KT’s second-generation elastic block storage solution. Thursday, April 18, 13

- 2. Scale-Out Block Storage

- 3. Amazon’s Architecture (or what we can infer)

- 4. Control-plane + Storage. Diagram: EBS control-plane, EBS storage, EC2.

- 5. Distributed Storage: “EBS is a distributed, replicated block data store that is optimized for consistency and low latency read and write access from EC2 instances.”

- 6. Brewer’s Theorem: “It is impossible for a distributed system to simultaneously provide all three of the following guarantees.” Consistency (C), availability (A), partition tolerance (P).

- 7. Brewer’s Theorem: “It is impossible for a distributed system to simultaneously provide all three of the following guarantees.” Consistency (C), marked “Essential”; availability (A) = reliability; partition tolerance (P) = reliability.

- 8. Brewer’s Theorem: “It is impossible for a distributed system to simultaneously provide all three of the following guarantees.” Consistency (C), marked “Essential”; availability (A) = reliability; partition tolerance (P) = reliability. To have consistency, EBS gives up reliability.

- 9. Snapshots: “Amazon EBS also provides the ability to create point-in-time snapshots of volumes... These snapshots... protect data for long-term durability.” For reliability, use snapshots.

- 10. Cloud #FAIL

- 11. Real-life failures

- 12. Real-life failures “ ”

- 13. Real-life failures “STUFF”

- 14. Service Provider Failures - 100%. Hardware rarely fails; operators fail, software fails. (Names withheld to protect the guilty.)

  | Type | Year | Why | Duration |
  | --- | --- | --- | --- |
  | Switch | 2005 | Bug | 2 hrs |
  | SAN | 2007 | Ops Err | 24 hrs |
  | NAS | 2008 | Ops Err | 8 hrs |
  | SAN | 2011 | Bug | 72 hrs |
  | Datacenter | 2012 | Bug+Ops | 2 hrs |
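
As a rough tally of the slide 14 failure table, the sketch below sums the reported outage hours overall and per cause. The rows are copied from the slide; the function and variable names are made up for illustration, and the grouping simply uses the "Why" label as written.

```python
from collections import defaultdict

# Rows copied from the slide 14 table: (type, year, cause, outage hours).
FAILURES = [
    ("Switch", 2005, "Bug", 2),
    ("SAN", 2007, "Ops Err", 24),
    ("NAS", 2008, "Ops Err", 8),
    ("SAN", 2011, "Bug", 72),
    ("Datacenter", 2012, "Bug+Ops", 2),
]


def hours_by_cause(rows):
    """Sum outage hours per 'Why' label (hypothetical helper)."""
    totals = defaultdict(int)
    for _type, _year, cause, hours in rows:
        totals[cause] += hours
    return dict(totals)


total_hours = sum(row[3] for row in FAILURES)
by_cause = hours_by_cause(FAILURES)

print("total outage hours:", total_hours)
for cause, hours in sorted(by_cause.items()):
    print(f"{cause}: {hours} hrs")
```

None of the listed causes is a hardware fault, which matches the slide's point that operators and software fail more often than hardware.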

- 15. What is Scale-out? Scale-up: make boxes bigger (usually an HA pair). Scale-out: make moar boxes. Diagram labels: A, B; A, B, C, D ... N.

- 16. Scaling out is a mindset. Scaling up is like treating your servers as pets (bowzer.company.com). Servers *are* cattle (web001.company.com).

- 17. Big failure domains vs. small: Would you rather have the whole cloud down, or just a small bit for a short period of time? Still a scale-up pattern ... wouldn’t you rather scale-out?
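
The failure-domain question on slide 17 can be put into numbers with a minimal sketch. It assumes volumes are spread evenly across storage nodes and that each volume lives on exactly one node; both are simplifying assumptions, and `affected_fraction` is a hypothetical helper, not part of any OpenStack API.

```python
def affected_fraction(total_nodes: int, failed_nodes: int = 1) -> float:
    """Fraction of volumes unavailable when `failed_nodes` nodes fail.

    Assumes an even spread of volumes and one node per volume.
    """
    if total_nodes <= 0:
        raise ValueError("total_nodes must be positive")
    if not 0 <= failed_nodes <= total_nodes:
        raise ValueError("failed_nodes must be between 0 and total_nodes")
    return failed_nodes / total_nodes


# One big box (scale-up) versus many small ones (scale-out).
for nodes in (1, 2, 10, 100):
    share = affected_fraction(nodes)
    print(f"{nodes:>3} node(s): one failure takes out {share:.0%} of volumes")
```

With a single large system, one failure is the whole cloud; with 100 small nodes under these assumptions, it is about 1% of volumes.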

- 18. Cinder Architecture

- 19. Cinder: Different than EBS? Diagram: Cinder control-plane, storage, compute.

- 20. Cinder Control Plane. Diagram: control plane, storage.

- 21. Not just a control-plane. Diagram: Cinder control-plane, storage, compute.

- 22. Storage Plugins: emc, coraid, sheepdog, huawei, glusterfs, solidfire, netapp, lvm, storwize, nexenta, nfs, windows, san, rbd (ceph), xiv, xenapi, scality, zadara. 18 different architectures.

- 23. Storage Plugin Choices really are: NAS, DFS, BLOCK

- 24. Breaking the Cinder Control Plane

- 25. Scale-up, Scale-up (I hope nobody does this). Diagram: one really-big server and one really-big storage system, each marked with an X.

- 26. Diagram: Multi-backend pattern. One big Cinder control-plane (Cinder vol-manager) in front of several really-big storage systems.

- 27. Diagram: Multi-backend pattern. Many really-big control-plane nodes, many really-big storage systems.

- 28. Scale-out, Scale-out: Complex. Split-brain problems. Cinder doesn’t natively support this. To fix this, let’s talk about the storage backend.

- 29. Breaking the Cinder Storage Backend. This section needs a lot of work yet...

- 30. Scale-out, Scale-out: Complex. Split-brain problems. Cinder doesn’t natively support this.

- 31. Scale-out, then scale-up. Diagram labels: transport failure, failed storage backend, failed server.

- 32. “HA” Replication. Diagram: Cinder control-plane, HA pair.

- 33. Scale-out, Scale-out, HA: Really complex. Where is my data? More split-brain problems. Cinder still doesn’t support it.

- 34. Networking complexity: Imagine this was 4 or 6 nodes in the cluster.

- 35. Brewer’s Theorem: “It is impossible for a distributed system to simultaneously provide all three of the following guarantees.” Consistency (C), availability (A), partition tolerance (P). Overlay: “HA loses this.” In-consistent block storage? REALLY SCARY.

- 37. We deploy Cinder like Nova. Diagram: an HTTP proxy in front of nodes that each run API, Scheduler, and Compute, with “volume” inserted next to Compute.

- 38. Brokerless Messaging With ZeroMQ. Distributed Messaging with 0MQ: Avoiding RabbitMQ’s Single Point Of Failure. RabbitMQ vs. ZeroMQ: RabbitMQ (brokered) uses a centralized broker, a single point of failure between Nova-API, Nova-Scheduler, and Nova-Compute. ZeroMQ (peer to peer) uses a distributed broker, with Nova-API, Nova-Scheduler, and Nova-Compute talking peer to peer.

- 39. Scale-together: Simple. Deterministic. Won’t lose all data together. Can lose leaf-node data, but snapshots hedge against this.
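
A toy model of the scale-together idea from slides 37 and 39: each node hosts its own volumes, so placement is deterministic and a node failure only loses that node's data. This is a sketch under stated assumptions, not Cinder code; the `Node` and `Cloud` classes and all method names are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A leaf node that runs compute and also stores its own volumes."""
    name: str
    volumes: dict = field(default_factory=dict)  # volume id -> bytes
    alive: bool = True


class Cloud:
    def __init__(self, node_names):
        self.nodes = {name: Node(name) for name in node_names}
        self.placement = {}  # volume id -> node name

    def create_volume(self, volume_id, host, data=b""):
        # Deterministic placement: the volume lives on the named host.
        self.nodes[host].volumes[volume_id] = data
        self.placement[volume_id] = host

    def fail_node(self, host):
        # A leaf-node failure loses that node's local data only.
        node = self.nodes[host]
        node.alive = False
        node.volumes.clear()

    def available_volumes(self):
        return sorted(v for v, h in self.placement.items()
                      if self.nodes[h].alive)

    def lost_volumes(self):
        return sorted(v for v, h in self.placement.items()
                      if not self.nodes[h].alive)


cloud = Cloud(["node-a", "node-b", "node-c"])
cloud.create_volume("vol-1", "node-a", b"a")
cloud.create_volume("vol-2", "node-b", b"b")
cloud.create_volume("vol-3", "node-c", b"c")
cloud.fail_node("node-b")
print("available:", cloud.available_volumes())
print("lost:", cloud.lost_volumes())
```

In this model the lost volume would have to be rebuilt from a snapshot, which is the hedge the slide refers to.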

- 40. Brewer’s Theorem: “It is impossible for a distributed system to simultaneously provide all three of the following guarantees.” Consistency (C), availability (A), partition tolerance (P).

- 41. Brewer’s Theorem: “It is impossible for a distributed system to simultaneously provide all three of the following guarantees.” Consistency (C), availability (A), partition tolerance (P). We don’t need availability with snapshots & partition tolerance.

- 42. Snapshots save us. Swift / S3 snapshots.
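
To make the snapshot hedge on slide 42 concrete, here is a minimal sketch of pushing a volume snapshot into an object store and restoring it. The in-memory `ObjectStore` merely stands in for Swift or S3, and the chunking scheme, key layout, and function names are all assumptions for illustration; this is not how Cinder or EBS implement snapshots.

```python
import hashlib

CHUNK_SIZE = 4  # deliberately tiny so the example splits into several chunks


class ObjectStore:
    """In-memory stand-in for an object store such as Swift or S3."""

    def __init__(self):
        self._objects = {}

    def put(self, key, data):
        self._objects[key] = bytes(data)

    def get(self, key):
        return self._objects[key]


def snapshot(store, volume_id, data, chunk_size=CHUNK_SIZE):
    """Upload content-addressed chunks plus a manifest; return the manifest."""
    manifest = []
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        key = hashlib.sha256(chunk).hexdigest()
        store.put(key, chunk)
        manifest.append(key)
    store.put(f"manifest/{volume_id}", "\n".join(manifest).encode())
    return manifest


def restore(store, volume_id):
    """Rebuild the volume contents from the stored manifest and chunks."""
    manifest = store.get(f"manifest/{volume_id}").decode().split("\n")
    return b"".join(store.get(key) for key in manifest if key)


store = ObjectStore()
original = b"hello block storage"
keys = snapshot(store, "vol-1", original)
restored = restore(store, "vol-1")
print(len(keys), "chunks;", "restored OK" if restored == original else "mismatch")
```

Because the snapshot lives outside the storage node, losing the node does not lose the snapshot, which is why the deck can trade away availability at the leaf.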

- 43. Q&A. http://engineering.cloudscaling.com/portland13/ Eric Windisch, Principal Engineer - OpenStack, Cloudscaling, @ewindisch. CCA - NoDerivs 3.0 Unported License - Usage OK, no modifications, full attribution* (* All unlicensed or borrowed works retain their original licenses)