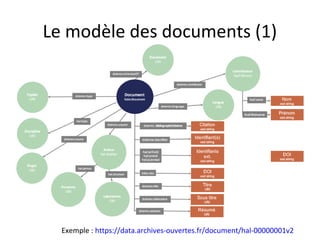

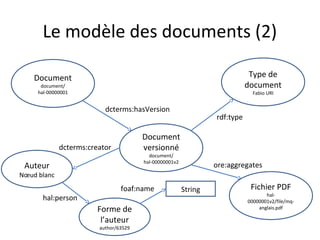

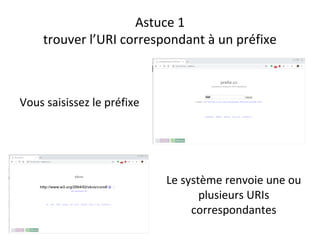

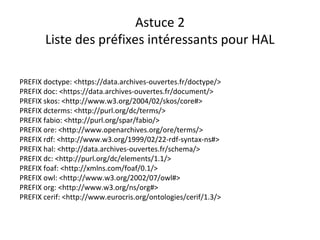

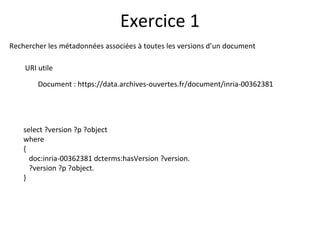

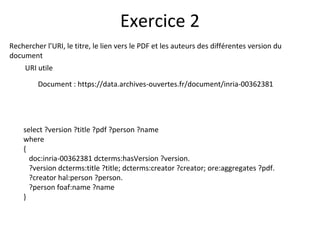

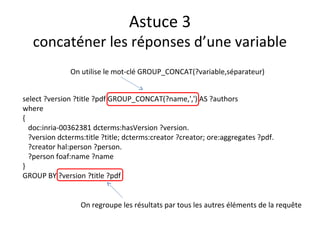

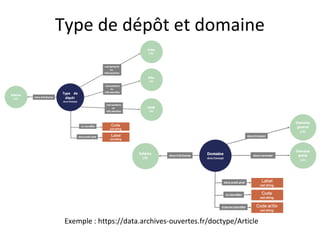

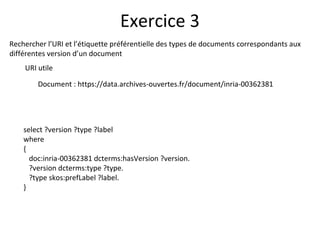

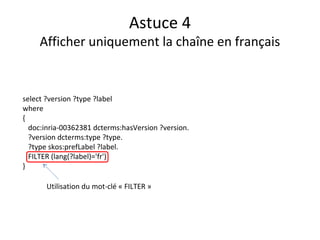

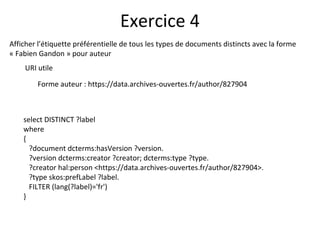

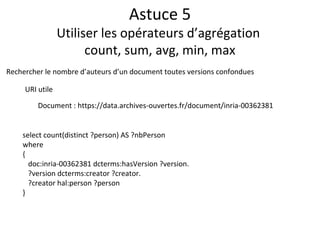

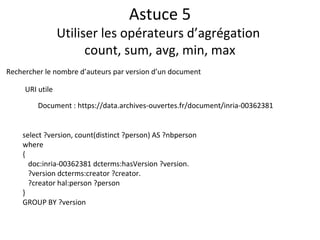

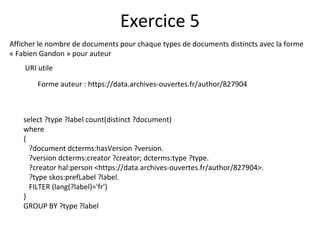

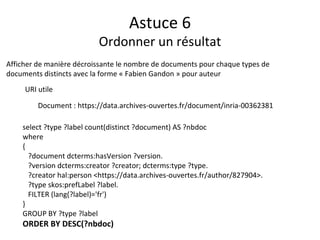

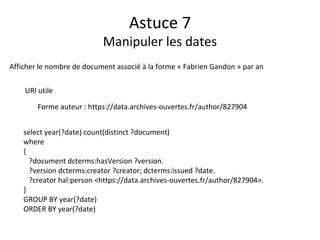

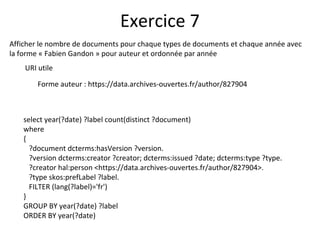

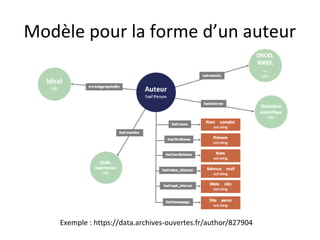

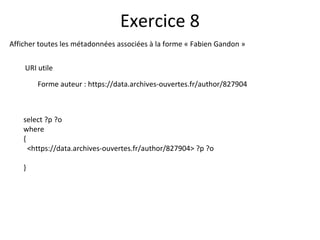

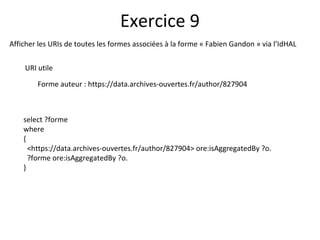

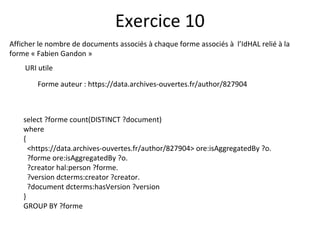

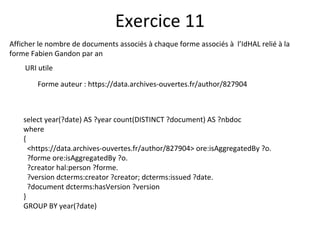

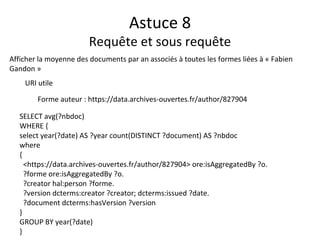

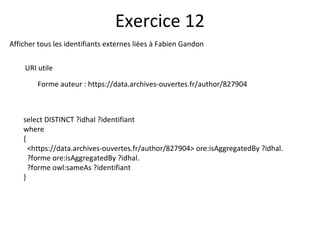

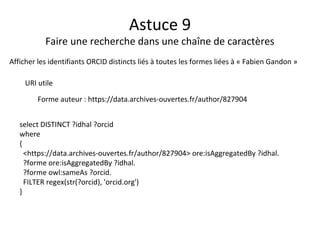

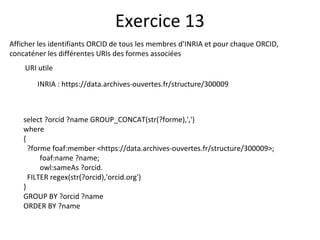

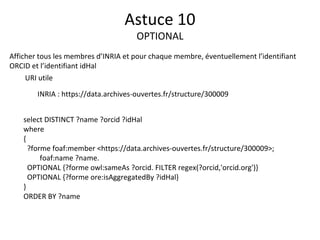

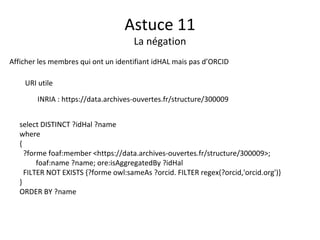

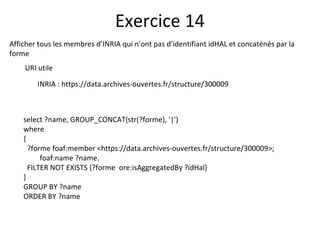

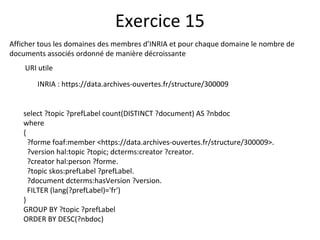

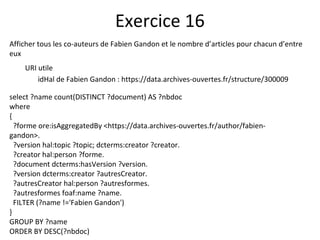

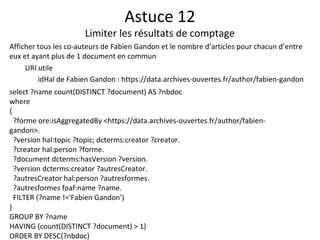

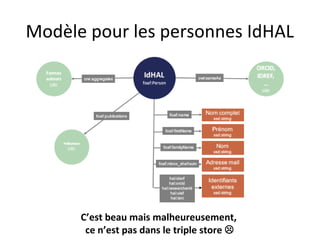

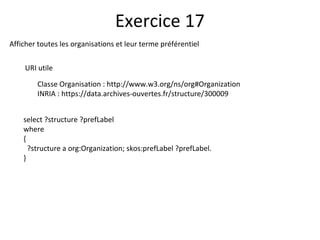

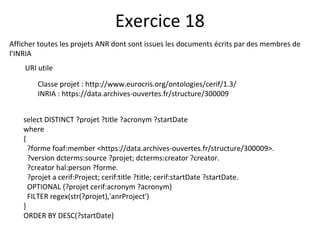

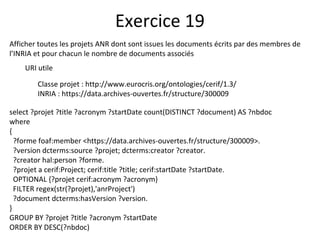

This document presents a SPARQL endpoint for accessing HAL data, which lets you search and work with the metadata of academic documents. It also gives example queries and tips for extracting information such as document versions, authors, and publication types. Finally, it covers aggregation and filtering techniques for analysing data about academic research.
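The following is a minimal sketch of an aggregation query (documents counted per publication type) sent over the standard SPARQL 1.1 Protocol. It rests on several assumptions. The endpoint URL `https://sparql.archives-ouvertes.fr/sparql` should be checked against the slides. The use of `dcterms:type` for the publication type is an assumption about the HAL data model. The helper names `build_request` and `parse_bindings` are hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Assumption: public HAL SPARQL endpoint; verify against the slides above.
ENDPOINT = "https://sparql.archives-ouvertes.fr/sparql"

# Hypothetical aggregation query: number of documents per publication type.
# Assumption: HAL exposes the publication type through dcterms:type.
QUERY = """
PREFIX dcterms: <http://purl.org/dc/terms/>
SELECT ?type (COUNT(?doc) AS ?n)
WHERE {
  ?doc dcterms:type ?type .
}
GROUP BY ?type
ORDER BY DESC(?n)
LIMIT 10
"""


def build_request(endpoint: str, query: str) -> Request:
    """Build a SPARQL 1.1 Protocol GET request (query passed in the `query` URL parameter)."""
    url = endpoint + "?" + urlencode({"query": query})
    # Ask for the standard SPARQL JSON results format.
    return Request(url, headers={"Accept": "application/sparql-results+json"})


def parse_bindings(results: dict) -> list[tuple[str, int]]:
    """Turn a SPARQL JSON results document into (type, count) pairs."""
    rows = []
    for binding in results["results"]["bindings"]:
        rows.append((binding["type"]["value"], int(binding["n"]["value"])))
    return rows
```

To send the request, something like `json.load(urllib.request.urlopen(build_request(ENDPOINT, QUERY)))` should work, provided the endpoint is reachable and returns SPARQL JSON results. The response can then be passed to `parse_bindings`. Filtering (for example on a year or an author) would go in a `FILTER` clause inside the `WHERE` block.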