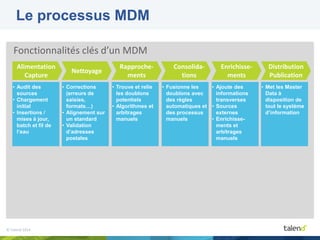

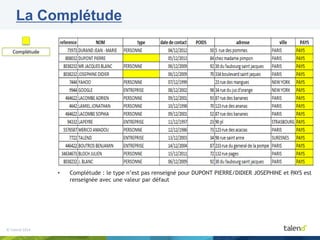

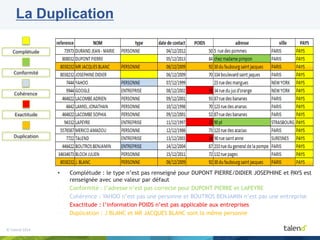

Talend is a data integration platform founded in 2006. It offers solutions for data quality, data governance, master data management (MDM), and Big Data, built on an open-core model and supported by an active community. The document covers the key features of MDM and the data management process. It also presents case studies, notably from companies such as Veolia, that show the positive impact of effective data governance. Finally, it stresses that MDM is essential for taking advantage of the opportunities Big Data offers.